Tri-City AI Links

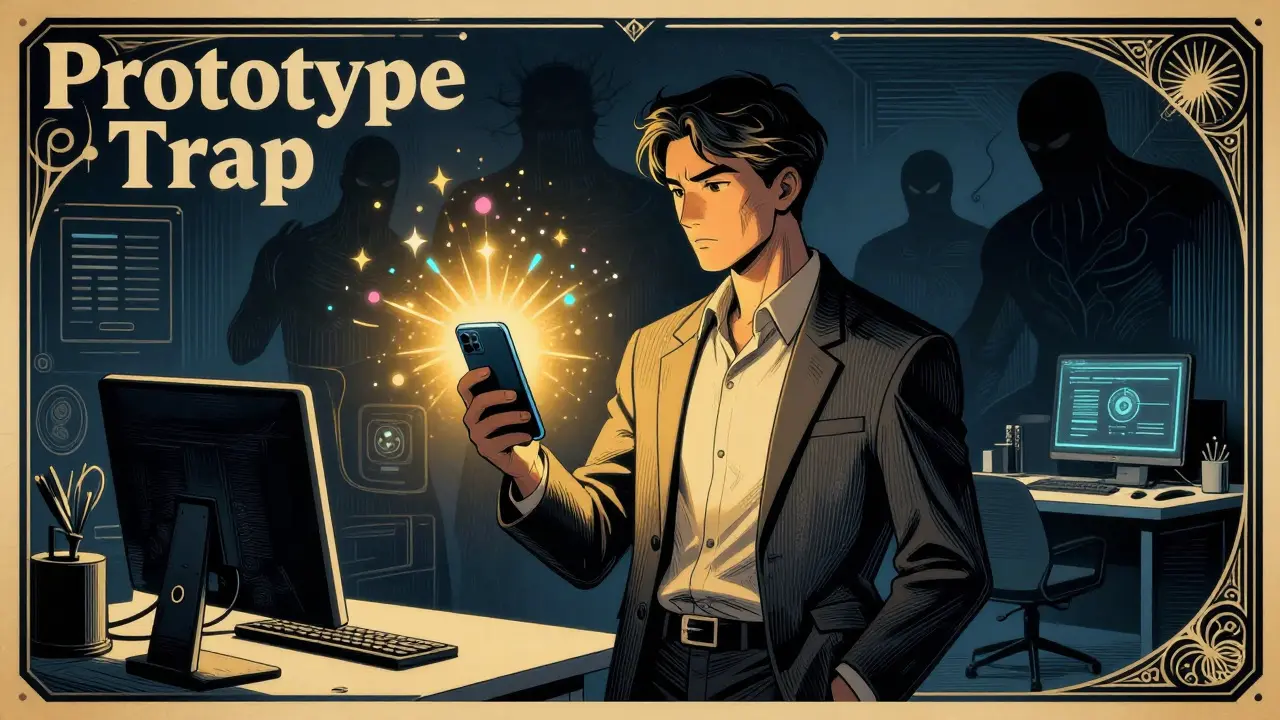

Rapid Prototyping with APIs vs Production Hardening with Open-Source LLMs

Explore the trade-offs between rapid API prototyping and production hardening with open-source LLMs. Learn cost strategies, hybrid architectures, and operational best practices.

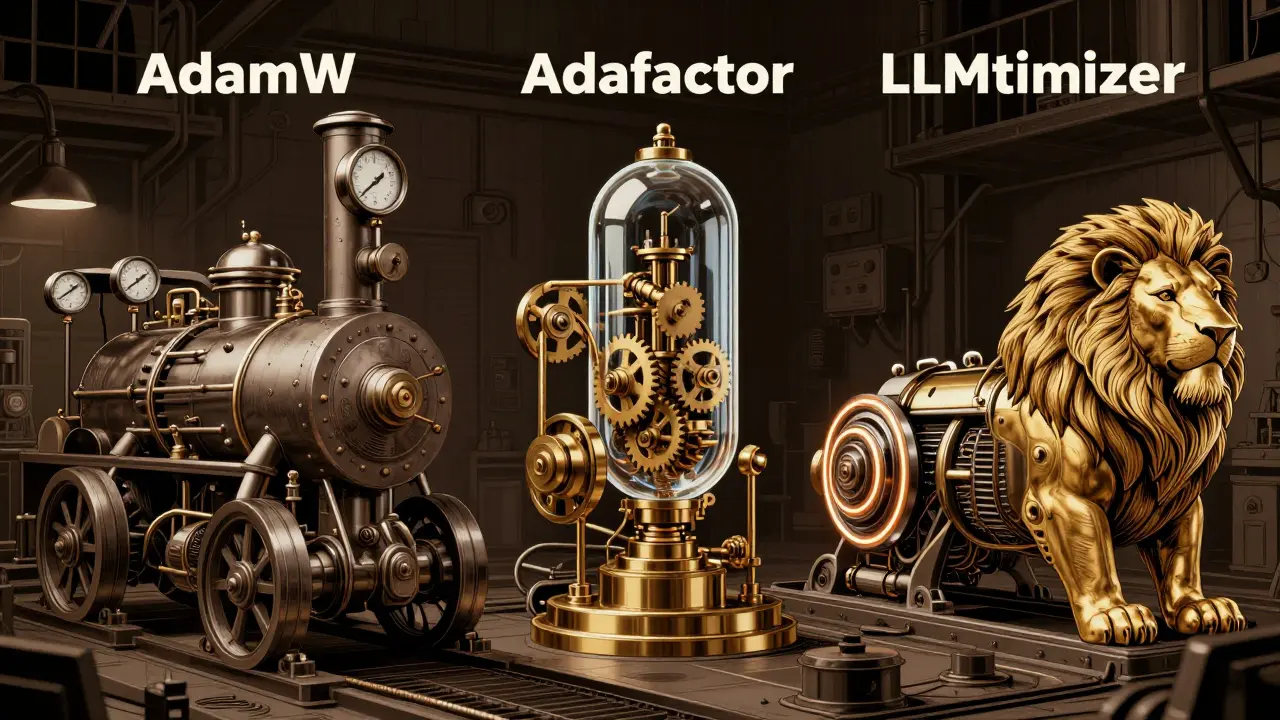

AdamW vs Adafactor vs Lion: Choosing the Right LLM Optimizer in 2026

Compare AdamW, Adafactor, and Lion optimizers for LLM training in 2026. Analyze memory usage, convergence speed, and accuracy to choose the right tool for your pipeline.

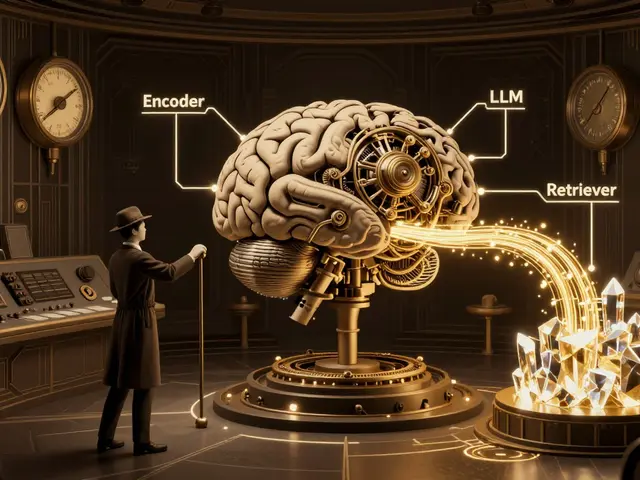

Source Selection Policies for RAG: Balancing Relevance and Diversity

Explore how balancing relevance and diversity in RAG source selection improves answer accuracy. Learn about Maximal Marginal Relevance (MMR), lambda tuning, and enterprise implementation strategies.

Roles for Vibe Coding at Scale: AI Champions, Architects, and Verification Engineers

Explore the critical roles of AI Champions, Architects, and Verification Engineers needed to govern vibe coding at scale. Learn how to balance AI speed with security and structure.

Understanding Per-Token Pricing for Large Language Model APIs: A Cost Guide

Learn how per-token pricing works for LLM APIs, why output costs more, and strategies to optimize your AI budget in 2026.

Per-Token Pricing Explained: How LLM APIs Charge You in 2026

A clear guide to per-token pricing for LLM APIs. Learn how input vs output costs work, compare provider rates, and find tips to reduce your AI billing expenses.

Accessibility in Generative AI: A Guide to Inclusive Design for All Users

Learn how to build inclusive generative AI products by integrating accessibility from the start. Discover practical strategies, ethical considerations, and tools to ensure your AI serves all users effectively.

Refactoring Sprints for Vibe-Coded Apps: Scheduling and Scope

Learn how to schedule and scope refactoring sprints for vibe-coded apps. Improve security, reduce technical debt, and maintain AI-generated code with practical strategies.

Vibe Coding Ethics: Who Is Responsible When AI Code Fails?

Explore the ethical risks of vibe coding. Who is responsible when AI-generated code fails? Learn about security vulnerabilities, legal liabilities, and best practices for safe adoption.

Scientific Workflows with Large Language Models: Hypotheses and Method Summaries

Explore how Scientific Large Language Models (Sci-LLMs) transform research workflows in 2026. Learn to generate hypotheses and summarize methods safely, avoiding common pitfalls like hallucinations and protocol errors.

Regional Adoption Patterns: How Regulation Shapes Vibe Coding Usage

Explore how global regulations like the EU AI Act shape the adoption of vibe coding. Discover regional differences in AI-driven software development and learn practical strategies for compliance.

Multilingual LLMs: How Transfer Learning Bridges the Language Gap

Explore how multilingual LLMs use transfer learning to bridge the gap between high-resource languages like English and low-resource ones. We analyze performance disparities, leading models such as XLM-RoBERTa, and techniques such as code-switching that help overcome the 'curse of multilinguality.'