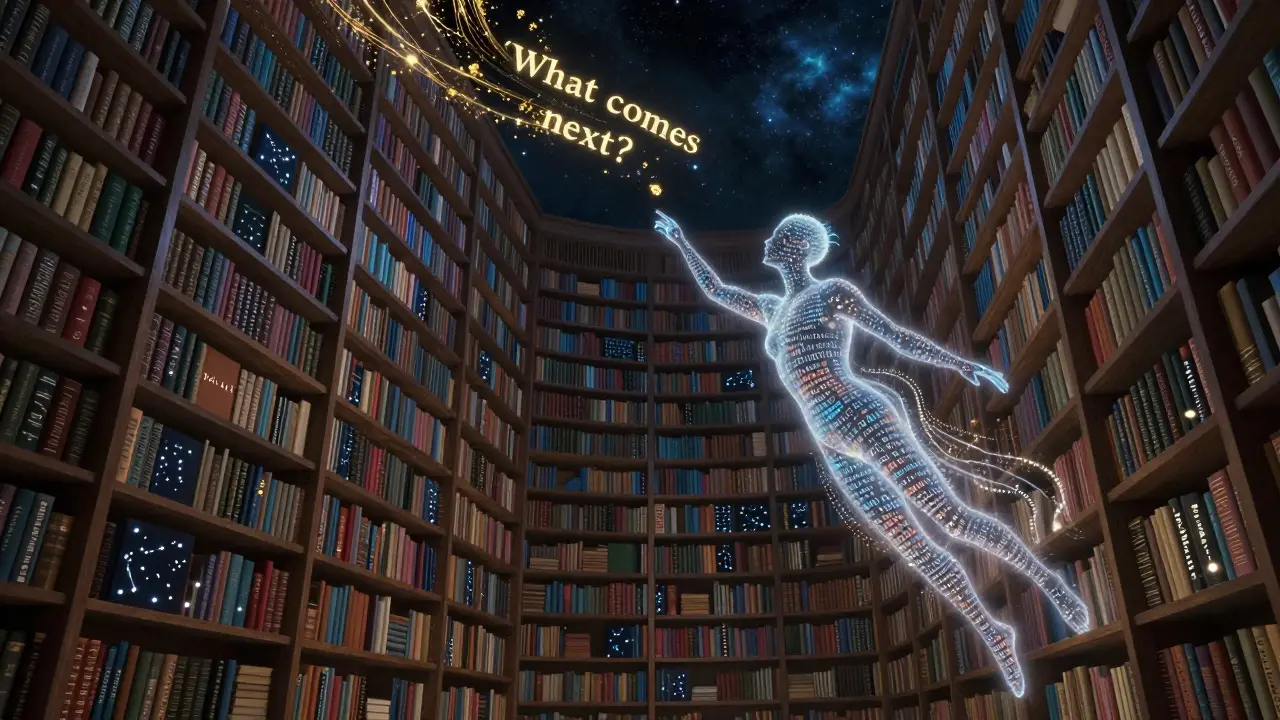

Tag: Transformer architecture

How Large Language Models Learn: Self-Supervised Training at Internet Scale

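Large language models learn by predicting the next token (a word or word fragment) across massive amounts of internet text. The approach is self-supervised because the training targets come from the text itself rather than from human-written labels. Powered by Transformer architectures, it enables unprecedented scale and versatility, but it also brings costs, biases, and limitations that shape how these models are used today.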
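To make the self-supervised idea concrete, here is a minimal toy sketch. It is not how a Transformer works internally: it swaps in a simple count-based bigram model and assumes whitespace tokenization instead of the subword tokenizers real LLMs use. It only illustrates the training signal. Every (context, next token) pair is cut directly from raw text, and the model is scored with average cross-entropy, the negative log-probability it assigns to the true next token. All function names (`make_training_pairs`, `fit_counts`, `next_token_probability`, `average_cross_entropy`) are hypothetical and chosen for illustration.

```python
import math
from collections import Counter, defaultdict


def make_training_pairs(tokens, context_size):
    """Cut (context, next_token) pairs out of raw text.

    No human labels are involved: each target is simply the token
    that actually follows its context in the text.
    """
    pairs = []
    for i in range(1, len(tokens)):
        context = tuple(tokens[max(0, i - context_size):i])
        pairs.append((context, tokens[i]))
    return pairs


def fit_counts(pairs):
    """'Train' a toy count-based model (a stand-in for a neural network)."""
    counts = defaultdict(Counter)
    for context, target in pairs:
        counts[context][target] += 1
    return counts


def next_token_probability(counts, context, token, vocab_size, alpha=1.0):
    """P(token | context) with add-alpha smoothing so unseen tokens keep some mass."""
    seen = counts.get(context, Counter())
    return (seen[token] + alpha) / (sum(seen.values()) + alpha * vocab_size)


def average_cross_entropy(counts, pairs, vocab_size):
    """Mean negative log-probability of the true next token (lower is better)."""
    total = 0.0
    for context, target in pairs:
        total -= math.log(next_token_probability(counts, context, target, vocab_size))
    return total / len(pairs)


if __name__ == "__main__":
    # Assumption: naive whitespace tokenization of a tiny made-up corpus.
    tokens = "the cat sat on the mat the cat ran".split()
    vocab_size = len(set(tokens))

    pairs = make_training_pairs(tokens, context_size=1)
    counts = fit_counts(pairs)

    print("first pairs:", pairs[:3])
    print("P(cat | the):", next_token_probability(counts, ("the",), "cat", vocab_size))
    print("avg cross-entropy:", average_cross_entropy(counts, pairs, vocab_size))
    print("uniform baseline:", math.log(vocab_size))
```

The useful comparison is the last two lines: a model that has learned anything from the text should score below the cross-entropy of guessing uniformly over the vocabulary. A real LLM replaces the count table with a Transformer that conditions on long contexts, but it is trained to minimize the same kind of next-token loss.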