Vibe coding is a prompt-based development model where AI systems generate code from natural language instructions. It sounds like the future of software engineering. You describe what you want in plain English, and an AI writes the code. It’s fast. It’s intuitive. And for consumer apps or internal tools, it’s a game-changer.

But if you work in healthcare, finance, or defense, vibe coding feels less like a revolution and more like a liability. Why? Because these sectors don’t just care about whether the code works. They care about *why* it works, *who* wrote it, and *how* you can prove it’s safe.

This isn’t a technical failure. It’s a regulatory paradox. Vibe coding prioritizes speed and iteration. Regulated industries demand traceability and static documentation. These two goals are fundamentally at odds. Let’s break down why finance and healthcare are lagging behind, and what that means for the future of AI-assisted development.

The Core Conflict: Speed vs. Traceability

In traditional software development, engineers write code, document their decisions, and follow strict approval workflows. In vibe coding, you chat with an AI, get code, test it, and iterate. It’s fluid. But regulators hate fluidity.

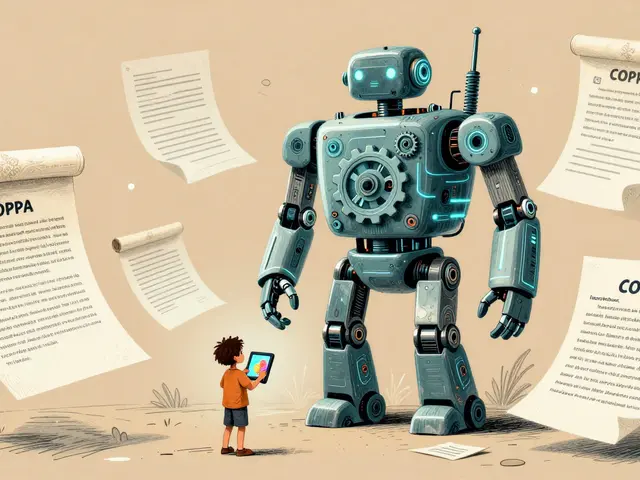

Consider HIPAA (Health Insurance Portability and Accountability Act) in healthcare. Its Security Rule requires audit controls on systems that handle protected health information, and in practice that means traceability. If a patient’s data is mishandled, auditors want to know which code caused the issue, who approved it, and what requirement it met. With vibe coding, the “author” is an AI model trained on billions of lines of code. There’s no single human decision point to pin down. That lack of provenance is a dealbreaker.

Finance faces similar hurdles. SOX (Sarbanes-Oxley Act) and PCI-DSS (Payment Card Industry Data Security Standard) mandate comprehensive audit trails for every system change. If an AI generates a transaction processing module, how do you prove it doesn’t contain hidden vulnerabilities or biased logic? You can’t just say, “The AI did it.” Regulators require human accountability.

This isn’t about fear of technology. It’s about legal liability. When lives or millions of dollars are on the line, “I asked an AI to write this” isn’t a defensible position in court.

Regulatory Frameworks That Block AI-Assisted Development

Let’s look at the specific rules that make vibe coding difficult in regulated sectors:

- FDA 21 CFR Part 11: Governs electronic records and electronic signatures, which must be trustworthy and reliable. Changes to records must be captured in secure, time-stamped audit trails. Vibe coding’s iterative nature makes each version a potential new product requiring separate validation.

- IEC 62304: A medical device software standard demanding rigorous lifecycle management. AI-generated code does not come with the structured design documents this standard requires.

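- NIST SP 800-53: A security and privacy control catalog used in defense and government IT. Its acquisition and supply chain controls expect components of known provenance. Since AI models are “black boxes,” their output has to be treated as unverified until it is reviewed.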

- GDPR: The EU’s General Data Protection Regulation. It demands transparency in automated decision-making that affects individuals. If an AI codes a feature that influences how user data is handled, you must be able to explain how it works. Vibe coding rarely produces that explanation automatically.

These frameworks were built for waterfall development: slow, deliberate, and documented. Vibe coding is agile, experimental, and opaque. Trying to force one into the other creates friction that slows adoption significantly.

| Regulatory Requirement | Vibe Coding Reality | Compliance Risk |
|---|---|---|
| Traceable Code Origin | AI-generated, multi-source training data | High: Cannot attribute authorship |
| Static Documentation | Dynamic, iterative prompts | Medium: Documentation lags behind code changes |
| Human Approval Gates | Rapid auto-generation | High: Bypasses formal review steps |
| Audit Trails | Minimal inherent logging | Critical: Fails SOX/HIPAA audits |
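
One way to narrow the audit-trail gap in the last row is to record provenance at the moment an AI-generated change is accepted. The sketch below is illustrative only: the field names, the `record_change` helper, and the hash-chaining scheme are assumptions made for this example, not something SOX or HIPAA prescribes.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ChangeRecord:
    prompt_hash: str      # hash of the prompt, so the prompt text itself need not be stored here
    code_hash: str        # hash of the generated code that was accepted
    model: str            # which AI tool produced the code
    reviewer: str         # the accountable human who approved it
    requirement_id: str   # the requirement the change is meant to satisfy
    timestamp: str        # ISO 8601 timestamp supplied by the caller
    prev_hash: str        # hash of the previous entry, forming a chain

    def entry_hash(self) -> str:
        return sha256_hex(json.dumps(asdict(self), sort_keys=True))


def record_change(log, prompt, code, model, reviewer, requirement_id, timestamp):
    """Append a provenance entry that is linked to the previous one."""
    prev = log[-1].entry_hash() if log else GENESIS
    entry = ChangeRecord(sha256_hex(prompt), sha256_hex(code), model,
                         reviewer, requirement_id, timestamp, prev)
    log.append(entry)
    return entry


def verify_chain(log) -> bool:
    """Return False if any entry no longer matches the hash stored by its successor."""
    prev = GENESIS
    for entry in log:
        if entry.prev_hash != prev:
            return False
        prev = entry.entry_hash()
    return True
```

A chain like this only exposes edits to earlier entries. To detect changes to the newest entry as well, the latest hash would have to be stored somewhere the development team cannot rewrite.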

Where Vibe Coding Actually Works in Regulated Sectors

It’s not all bad news. Vibe coding has found safe harbors in regulated industries. The key is scope. Use it where risk is low, and compliance overhead is manageable.

1. Rapid Prototyping with Fake Data: In healthcare, developers use vibe coding to build mockups of electronic medical record (EMR) interfaces. They use synthetic patient data, with no real PHI (Protected Health Information). Once the prototype proves useful, human engineers rebuild it using compliant, validated code. This shifts effort from low-value UI work to high-risk backend logic.

2. Internal Tools and Analytics: Back-office scripts, ETL pipelines, and admin dashboards often fall outside strict regulatory scrutiny. A bank might use vibe coding to generate a JSON validator for internal reports. As long as it doesn’t touch customer transactions or public-facing systems, the compliance team stays happy.

3. Regulatory Readiness Dashboards: In superannuation and insurance, vibe coding helps build tools that highlight compliance gaps early. These aren’t production systems; they’re aids for human experts. The AI surfaces risks; humans make decisions.
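
For the prototyping case, the synthetic records can be as simple as the sketch below. Everything here is made up for illustration: the `synthetic_patients` helper, the `SYN-` record-number prefix, and the name lists are assumptions, and real projects may prefer a dedicated synthetic-data tool.

```python
import random
from datetime import date, timedelta

# Obviously fake names make it harder to mistake test data for real records.
FIRST_NAMES = ["Alex", "Sam", "Jordan", "Riley"]
LAST_NAMES = ["Testcase", "Sample", "Mockford", "Placeholder"]


def synthetic_patients(n, seed=0):
    """Generate n fake patient records; the same seed yields the same data."""
    rng = random.Random(seed)
    patients = []
    for i in range(n):
        dob = date(1940, 1, 1) + timedelta(days=rng.randrange(25000))
        patients.append({
            "mrn": f"SYN-{i:06d}",  # prefix marks the record number as synthetic
            "name": f"{rng.choice(FIRST_NAMES)} {rng.choice(LAST_NAMES)}",
            "date_of_birth": dob.isoformat(),
            "synthetic": True,      # explicit flag for downstream filters
        })
    return patients
```

Seeding the generator keeps demo screens reproducible, and the explicit `synthetic` flag gives downstream systems something concrete to reject.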

The rule of thumb? Segregate strictly. Keep vibe-coded prototypes far away from production systems handling sensitive data.
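
That rule of thumb can be written down as an explicit policy check. The sketch below is an assumption about how a team might encode it; the `ChangeScope` fields mirror the criteria mentioned above (sensitive data, customer transactions, public-facing systems) and are not drawn from any regulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeScope:
    handles_sensitive_data: bool          # PHI, PII, or similar
    touches_customer_transactions: bool
    public_facing: bool


def blocking_reasons(scope):
    """List every reason this scope is off-limits for vibe-coded changes."""
    reasons = []
    if scope.handles_sensitive_data:
        reasons.append("handles sensitive data")
    if scope.touches_customer_transactions:
        reasons.append("touches customer transactions")
    if scope.public_facing:
        reasons.append("is public-facing")
    return reasons


def vibe_coding_allowed(scope):
    return not blocking_reasons(scope)
```

Returning the reasons, rather than a bare yes or no, gives reviewers something to cite when a request is declined.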

Governance Frameworks for Safer Adoption

To bridge the gap, companies are building governance structures around AI coding. One popular approach is the V.E.R.I.F.Y. checklist:

- Validate: Does the code meet functional requirements?

- Enforce: Does it follow company coding standards?

- Review: Has a qualified engineer inspected it?

- Inspect: Are there security vulnerabilities?

- Format: Is documentation created for audit trails?

- Yield: Are all compliance artifacts generated?
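
A checklist like this is easier to enforce when it is a gate rather than a document. Here is a minimal sketch, assuming each item is signed off as a simple yes or no; the `VerifyChecklist` class is hypothetical and not part of any published tool.

```python
from dataclasses import dataclass, fields


@dataclass
class VerifyChecklist:
    validate: bool = False  # meets functional requirements
    enforce: bool = False   # follows company coding standards
    review: bool = False    # inspected by a qualified engineer
    inspect: bool = False   # checked for security vulnerabilities
    format: bool = False    # documentation created for audit trails
    yield_: bool = False    # compliance artifacts generated (`yield` is a Python keyword)

    def missing(self):
        """Names of checklist items that have not been signed off."""
        return [f.name.rstrip("_") for f in fields(self) if not getattr(self, f.name)]

    def passed(self):
        return not self.missing()
```

A merge bot or CI job could refuse to proceed while `missing()` is non-empty and report exactly which steps are outstanding.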

Beyond checklists, organizations form AI governance task forces. These teams include engineers, legal counsel, and compliance officers. They define which AI tools are approved, what data can be used in prompts (never PII or PHI), and who owns the resulting code.
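
The “never PII or PHI in prompts” rule can be backed by a pre-send screen. The sketch below is a rough illustration: the patterns (a US Social Security number format, an email address, and a made-up `MRN` label convention) are assumptions, and pattern matching only catches obvious cases. It is not a substitute for proper de-identification.

```python
import re

# Hypothetical patterns; a real deployment would tune these to its own data.
SENSITIVE_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "mrn": re.compile(r"\bMRN[:\s]*\d{6,}\b", re.IGNORECASE),
}


def find_sensitive(prompt):
    """Return the labels of every pattern that appears in the prompt."""
    return [label for label, pattern in SENSITIVE_PATTERNS.items()
            if pattern.search(prompt)]


def safe_to_send(prompt):
    return not find_sensitive(prompt)
```

A wrapper around the approved AI tool could call `safe_to_send` and block the request, logging only the labels and never the matched text.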

Technical controls also help. Static application security testing (SAST), software bill of materials (SBOM) generation, and secret scanning become mandatory. Tools like Snyk or FOSSA scan AI-generated code for license issues and vulnerable dependencies before it enters the main branch.
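
To make the secret-scanning step concrete, here is a toy scanner. It is a sketch under stated assumptions: the three rules are illustrative, the `scan_source` helper is hypothetical, and it does not reflect how Snyk, FOSSA, or any other commercial scanner works internally.

```python
import re

# Illustrative rules only; production scanners ship far larger rule sets.
RULES = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"(?i)\b(?:password|passwd|secret|api_key)\s*=\s*[\"'][^\"']+[\"']"
    ),
}


def scan_source(source):
    """Return (line_number, rule_name) for every rule hit in the source text."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for rule, pattern in RULES.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings
```

Run as a pre-merge check, a non-empty result would fail the build, so a key pasted into a prompt and echoed back in generated code never reaches the main branch.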

Regulatory Evolution: Sandboxes and PreCert Programs

Regulators are starting to adapt. The FDA’s Digital Health Software Precertification (Pre-Cert) Pilot Program was an early experiment in this direction. Instead of reviewing each product individually, it evaluated the organization’s quality management system. Under that model, a company that proves it has strong safety practices could deploy vibe-coded tools faster, with continuous monitoring instead of upfront approval.

For example, a patient adherence app could launch quickly, collecting real-world evidence. Regulators would then review updates continuously. This shifts compliance from a gatekeeping process to a partnership.

Regulatory sandboxes offer another path. Developers test vibe-coded tools under regulator supervision. Risks are assessed in real time without full compliance burdens. The FDA, European Medicines Agency (EMA), and Japan’s PMDA are coordinating these efforts globally.

However, as of 2026, none of these approaches is a standard pathway. The FDA concluded its Pre-Cert pilot in 2022 without making the model permanent, and most firms still rely on traditional compliance models. Change is coming, but slowly.

The Competitive Gap: Why This Matters

Here’s the uncomfortable truth: unregulated sectors are moving faster. Consumer tech companies, SaaS platforms, and enterprise software firms have integrated vibe coding into their workflows. They ship features quicker, reduce engineering costs, and attract talent who want to use modern tools.

Regulated sectors are falling behind. Banks and hospitals take longer to bring competitive features to market. Their engineers face higher friction. Top talent may leave for companies with fewer restrictions. This productivity gap will likely widen through 2027-2028 as AI tools mature.

Some analysts believe this pressure will force regulatory modernization. Others think regulated industries will remain bifurcated: vibe coding for prototyping and internal tools, traditional methods for production. Either way, the divergence is already here.

Hybrid Models: The Near-Term Solution

The most practical path forward is hybrid development. Combine vibe coding’s speed with human-in-the-loop validation. Embed clinicians, compliance specialists, and security experts directly into the workflow. They validate AI outputs for safety and for conformance to the stated requirements.

Staggered rollouts help too. Deploy tools in pilot facilities first. Collect real-world evidence. Prove safety before scaling. It’s slower than pure vibe coding, but it meets regulatory needs.
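
A staggered rollout can be modeled as a small state machine that only widens after a human-led safety review. This is a sketch under assumptions: the `StagedRollout` class and the facility IDs are hypothetical, and real deployments would usually lean on an existing feature-flag system.

```python
from dataclasses import dataclass


@dataclass
class StagedRollout:
    stages: list            # sets of facility IDs, in rollout order
    current_stage: int = 0  # index of the widest stage enabled so far

    def enabled_facilities(self):
        """All facilities in the current stage and every earlier one."""
        enabled = set()
        for stage in self.stages[: self.current_stage + 1]:
            enabled |= set(stage)
        return enabled

    def is_enabled(self, facility_id):
        return facility_id in self.enabled_facilities()

    def advance(self, safety_review_passed):
        """Move to the next stage only if the review passed and a stage remains."""
        if not safety_review_passed:
            return False
        if self.current_stage >= len(self.stages) - 1:
            return False
        self.current_stage += 1
        return True
```

For example, `StagedRollout(stages=[{"clinic-a"}, {"clinic-b", "clinic-c"}])` starts with only `clinic-a` enabled, and the other two join once `advance(True)` is called after the pilot evidence has been reviewed.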

Long-term, risk-based regulation is the goal. Evaluate tools by impact, not blanket rules. Support continuous learning systems backed by post-deployment evidence. But we’re years away from that reality.

Can vibe coding ever be fully compliant with HIPAA or SOX?

Not in its current form. HIPAA and SOX require human accountability and traceable documentation. Vibe coding lacks inherent audit trails. Full compliance would require significant changes to both AI tooling and regulatory frameworks, likely taking until 2029-2031.

What is the safest way to use vibe coding in healthcare?

Use it only for non-production prototypes with synthetic data. Never expose real patient information. Rebuild validated components manually before deployment. Keep AI-generated code segregated from clinical systems.

How does the FDA PreCert program help vibe coding adoption?

The Pre-Cert model evaluates organizations rather than individual products. Under it, companies with strong quality systems could deploy vibe-coded tools faster, relying on continuous monitoring instead of pre-approval. That would reduce time-to-market while maintaining safety oversight, but the model has only been piloted and is not a standard pathway.

Why do financial institutions resist vibe coding?

Financial regulations like SOX and PCI-DSS demand strict audit trails and human approval gates. Vibe coding’s rapid, opaque generation process conflicts with these requirements. Banks prioritize risk mitigation over speed, leading to cautious adoption.

Will vibe coding replace traditional development in regulated sectors?

Unlikely in the near term. A bifurcated model is expected: vibe coding for prototyping and internal tools, traditional methods for production systems. Full integration depends on regulatory evolution and proven safety records, possibly beyond 2030.