Tag: prompt engineering

Chain-of-Thought in Vibe Coding: Why Explanations Beat Code First

Learn how Chain-of-Thought prompting transforms vibe coding by forcing the AI to explain its reasoning before writing code, reducing bugs and improving reliability.

Critique-and-Revise Prompting: How to Build Iterative Refinement Loops for AI

Master critique-and-revise prompting to turn AI drafts into polished, professional outputs using iterative refinement loops and self-correction techniques.

Long-Context Prompt Design: How to Position Information for LLM Attention

Learn how to optimize LLM performance by mastering long-context prompt design. Discover the "Lost in the Middle" phenomenon and strategies for positioning critical information where it gets the most attention.

Vibe Coding vs AI Pair Programming: Choosing the Right AI Workflow

Discover the difference between vibe coding and AI pair programming. Learn when to prioritize speed with vibe coding and when to ensure quality with AI pair programming.

Debugging Prompts: Systematic Methods to Improve LLM Outputs

Learn systematic methods to debug and improve LLM outputs, from task decomposition and RAG to advanced mathematical steering and prompt chaining.

Stop Sequences in Large Language Models: Preventing Runaway Generations

Stop sequences are a simple but powerful tool for preventing AI models from overgenerating text. They improve accuracy, cut costs, and ensure clean outputs, which makes them essential for any real-world AI application.

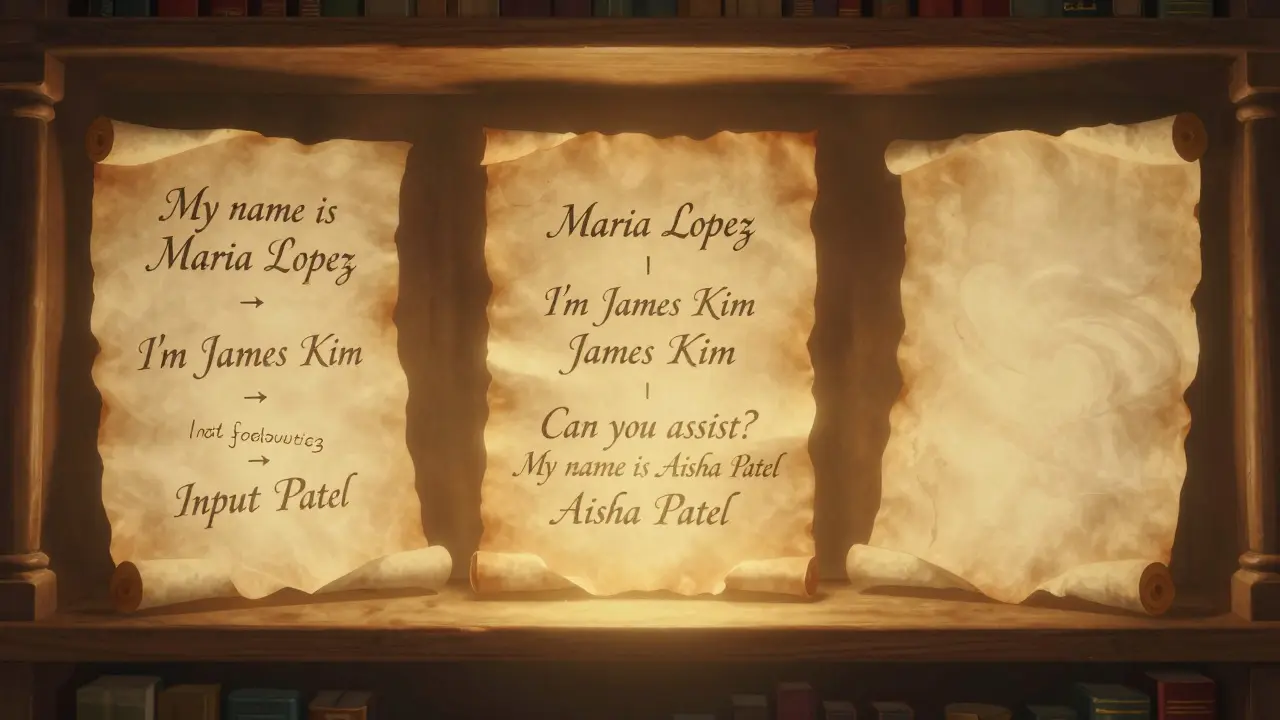

Few-Shot Prompting Strategies That Boost LLM Accuracy and Consistency

Few-shot prompting boosts LLM accuracy by 15-40% using just 2-8 examples. Learn how to choose the right examples, avoid over-prompting, and combine few-shot prompting with chain-of-thought for better results, all without fine-tuning.

In-Context Learning Explained: How LLMs Learn from Prompts Without Training

In-context learning allows LLMs to adapt to new tasks using examples in prompts, with no retraining needed. Discover how it works, its benefits, limitations, and real-world applications in AI today.

Few-Shot vs Fine-Tuned Generative AI: How Product Teams Should Choose

Product teams need to choose between few-shot learning and fine-tuning for generative AI. This guide breaks down when to use each based on data, cost, complexity, and speed, with real-world examples and clear decision criteria.

Prompt Hygiene for Factual Tasks: How to Write Clear LLM Instructions That Don’t Lie

Learn how to write precise LLM instructions that prevent hallucinations, block attacks, and ensure factual accuracy. Prompt hygiene isn’t optional; it’s the foundation of reliable AI in high-stakes fields.

NLP Pipelines vs End-to-End LLMs: When to Use Each for Real-World Applications

Learn when to use traditional NLP pipelines versus end-to-end LLMs for real-world applications. Discover the cost, speed, and accuracy trade-offs, and why hybrid systems are becoming the industry standard.