What if your AI could learn a new task just by seeing a few examples in your prompt? No retraining. No complex setup. That’s in-context learning, and it’s already powering real-world AI applications today.

In-context learning (ICL) is a capability where large language models perform new tasks using examples within the input prompt, without modifying their parameters. Unlike traditional machine learning, which requires retraining the model on new data, in-context learning happens instantly at inference time. This breakthrough was first demonstrated at scale in the 2020 paper "Language Models are Few-Shot Learners" by Brown et al. from OpenAI, which introduced GPT-3. The discovery reshaped how we build and use AI systems.

How In-Context Learning Actually Works

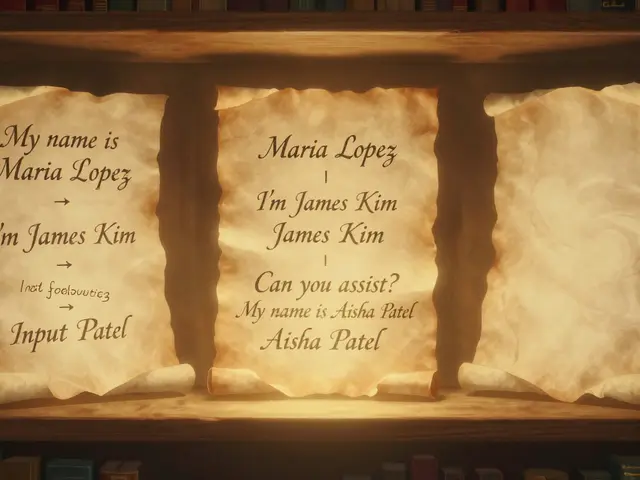

When you feed a prompt to an LLM, the model processes the entire input sequence, including your instructions and example input-output pairs, within its context window. This window defines how much text the model can analyze at once (typically 4,000 to 128,000 tokens in modern systems). For instance, if you want a model to translate French to English, you might include a few French-English pairs in the prompt, like this:

Translate this: "Bonjour" → "Hello". "Merci" → "Thank you". Now translate: "Oui".
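The same prompt can be assembled in code. The Python sketch below is a minimal illustration, not any vendor’s API: it only builds the prompt text and leaves out the model call, and the quoted-pair layout with an ASCII `->` arrow is an assumed formatting choice.

```python
from __future__ import annotations


def build_few_shot_prompt(instruction: str, examples: list[tuple[str, str]], query: str) -> str:
    """Join an instruction, input/output demonstrations, and a new query into one prompt."""
    lines = [instruction]
    for source, target in examples:
        # Every demonstration follows the same "source" -> "target" pattern.
        lines.append(f'"{source}" -> "{target}"')
    # The last line repeats the pattern but leaves the output slot empty for the model.
    lines.append(f'"{query}" ->')
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Translate French to English.",
    [("Bonjour", "Hello"), ("Merci", "Thank you")],
    "Oui",
)
print(prompt)
```

Nothing about the model changes here: swapping in different pairs (say, Spanish to English) retargets the same model to a new task.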

The model recognizes patterns in these examples and applies the same logic to new inputs. Researchers at MIT found this isn’t just pattern matching. Using synthetic data the model had never seen before, they showed LLMs can learn genuinely new tasks during inference. This led to the "model within a model" theory: neural networks contain smaller internal learning systems that activate when presented with examples.

Layer-wise analysis of models like GPT-Neo 2.7B and Llama 3.1 8B revealed something remarkable. Around layer 14 of 32, the model "recognizes" the task; after this point, it no longer needs to attend to the examples in the prompt. This finding allows for 45% computational savings when using 5 examples, because processing of the examples can be skipped after the task recognition layer.
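To see why skipping work after the task-recognition layer can save so much, here is a back-of-the-envelope estimate of our own, not the researchers’ accounting. It assumes compute scales linearly with tokens times layers (ignoring attention’s quadratic term and caching), so with demonstration tokens E, query tokens Q, L layers, and task recognition at layer T, the skipped share is (L - T) · E / (L · (E + Q)).

```python
def estimated_savings(n_examples: int, tokens_per_example: int, query_tokens: int,
                      task_layer: int, total_layers: int) -> float:
    """Share of (token x layer) work skipped when demonstration tokens are dropped
    after `task_layer`. Assumes cost grows linearly with tokens and layers, which
    ignores attention's quadratic term and any caching details."""
    demo_tokens = n_examples * tokens_per_example
    # Baseline: every layer processes both the demonstrations and the query.
    baseline = total_layers * (demo_tokens + query_tokens)
    # After task recognition, the remaining layers process only the query.
    skipped = (total_layers - task_layer) * demo_tokens
    return skipped / baseline


# 5 examples, each about as long as the query; task recognized at layer 14 of 32.
print(f"{estimated_savings(5, 1, 1, task_layer=14, total_layers=32):.1%}")  # 46.9%
```

With five examples each about as long as the query, this crude model lands near the reported 45%. As examples come to dominate the prompt, the estimate approaches a ceiling of (32 - 14) / 32, about 56%.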

Why In-Context Learning Beats Other Methods

Let’s compare how different approaches handle new tasks:

| Method | Training Required | Typical Performance | Best Use Case |
|---|---|---|---|
| Zero-shot learning | No | 30-40% accuracy on NLP tasks | Simple tasks with clear instructions |
| One-shot learning | No | 40-50% accuracy | Quick task adaptation with minimal examples |
| In-Context Learning (few-shot) | No | 60-80% accuracy with 2-8 examples | Domain-specific tasks with scarce data |
| Parameter-efficient fine-tuning (e.g., LoRA) | Yes (small adjustments) | Up to 85%+ accuracy | Long-term task specialization |

ICL shines where fine-tuning is impractical. Imagine a hospital that needs a system to classify medical reports. Gathering enough labeled data for training could take months. With ICL, you provide 5 examples of diagnoses and symptoms, and the model adapts immediately. Results in other specialized domains are encouraging: one study on aviation data classification reported 80.24% accuracy and an 84.15% F1-score using just 8 well-chosen examples.
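Figures like these come from scoring the model’s predictions against a labeled hold-out set. A minimal sketch of that scoring step follows; the study’s exact F1 variant isn’t stated here, so macro-averaged F1 is an assumption, and the report labels are hypothetical.

```python
from __future__ import annotations


def _check(gold: list[str], pred: list[str]) -> None:
    if len(gold) != len(pred) or not gold:
        raise ValueError("gold and pred must be non-empty and the same length")


def accuracy(gold: list[str], pred: list[str]) -> float:
    """Fraction of predictions that exactly match the gold label."""
    _check(gold, pred)
    return sum(g == p for g, p in zip(gold, pred)) / len(gold)


def macro_f1(gold: list[str], pred: list[str]) -> float:
    """Unweighted mean of the per-label F1 scores."""
    _check(gold, pred)
    scores = []
    for label in sorted(set(gold) | set(pred)):
        tp = sum(g == label and p == label for g, p in zip(gold, pred))
        fp = sum(g != label and p == label for g, p in zip(gold, pred))
        fn = sum(g == label and p != label for g, p in zip(gold, pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        scores.append(2 * precision * recall / (precision + recall) if precision + recall else 0.0)
    return sum(scores) / len(scores)


# Hypothetical gold labels for held-out reports and the model's predictions for them.
gold = ["cardiac", "cardiac", "respiratory", "respiratory"]
pred = ["cardiac", "respiratory", "respiratory", "respiratory"]
print(accuracy(gold, pred), round(macro_f1(gold, pred), 3))  # 0.75 0.733
```

Reporting F1 alongside accuracy matters when some report categories are rare, since a model can score high accuracy by favoring the common label.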

When In-Context Learning Falls Short

Despite its power, ICL has limits. Context window constraints mean complex tasks requiring long context (like legal document review) can’t fit all necessary examples. Some models perform worse with more than 32 examples due to attention mechanism limitations. Task type matters too: ICL excels at classification or translation but struggles with tasks needing deep domain knowledge beyond pretraining, like medical diagnosis without relevant examples.
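One practical consequence of the window limit is budgeting: decide how many examples fit before building the prompt. The sketch below assumes the examples are already sorted by priority and uses a rough 4-characters-per-token heuristic, which is an assumption; real counts depend on the model’s tokenizer.

```python
from __future__ import annotations


def estimate_tokens(text: str) -> int:
    """Rough token count. ASSUMPTION: about 4 characters per token for English;
    use the target model's real tokenizer in practice."""
    return max(1, len(text) // 4)


def fit_examples(examples: list[str], fixed_text: str,
                 window_tokens: int, reserve_for_output: int) -> list[str]:
    """Keep examples in priority order until the estimated token budget runs out."""
    # Instructions and the query are always sent, and the reply needs room too.
    budget = window_tokens - reserve_for_output - estimate_tokens(fixed_text)
    chosen: list[str] = []
    for example in examples:
        cost = estimate_tokens(example)
        if cost > budget:
            break  # stop at the first example that no longer fits
        chosen.append(example)
        budget -= cost
    return chosen


placeholder_examples = ["x" * 40] * 10  # ten placeholder demonstrations of ~10 tokens each
print(len(fit_examples(placeholder_examples, "y" * 40, window_tokens=100, reserve_for_output=30)))  # 6
```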

Example quality is critical. Random examples can drop accuracy by 25% compared to carefully selected ones. Poorly formatted prompts also cause issues: minor wording changes might make the model ignore the examples entirely. For instance, changing "Translate this" to "Convert this" could break French-to-English translation tasks in some models.
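A common remedy is to retrieve the examples most similar to each incoming query instead of picking them at random. Retrieval systems often rank candidates by embedding similarity; this sketch swaps in simple word-overlap (Jaccard) similarity so it runs on the standard library alone, and the helper names are hypothetical.

```python
from __future__ import annotations

import re


def word_set(text: str) -> set[str]:
    """Lower-cased word tokens; a crude stand-in for real text features."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two word sets, from 0.0 (disjoint) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def select_examples(pool: list[tuple[str, str]], query: str, k: int) -> list[tuple[str, str]]:
    """Return the k (input, output) pairs whose inputs overlap most with the query."""
    query_words = word_set(query)
    ranked = sorted(pool, key=lambda pair: jaccard(word_set(pair[0]), query_words), reverse=True)
    return ranked[:k]


pool = [
    ("the movie was wonderful and moving", "positive"),
    ("stock prices fell sharply today", "neutral"),
    ("the movie was dull and far too long", "negative"),
]
print([label for _, label in select_examples(pool, "was the movie any good", k=2)])
```

The two movie reviews outrank the unrelated finance sentence, so they are the ones placed in the prompt.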

Proven Tips for Effective Prompt Engineering

Here’s what works in practice:

- Example count: 2-8 examples typically deliver the best results. More than 16 often yields diminishing returns. For math problems, 4 examples with chain-of-thought reasoning boosted GPT-3’s GSM8K accuracy from 17.9% to 58.1%.

- Example order: Placing difficult examples first improves performance by 7.3% in sentiment analysis. Whatever the order, every sample should be clear and high quality so it sets the task pattern.

- Chain-of-thought prompting: For reasoning tasks, ask the model to "think step by step." This technique helps with complex problems like coding or logic puzzles.

- Task-specific formatting: Use consistent delimiters like "Input: ... Output: ..." for clarity. Avoid mixing formats in examples.
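The count, formatting, and chain-of-thought tips above can be combined in one small prompt builder. This is an illustrative sketch: the `Input:`/`Output:` delimiters come from the tip above, while the `Reasoning:` label, the hypothetical `Example` class, and the default cap of 8 examples are our own assumptions. Example ordering is left to the caller.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Example:
    input_text: str
    output_text: str
    reasoning: Optional[str] = None  # worked steps, used only for chain-of-thought


def format_prompt(examples: list[Example], query: str,
                  max_examples: int = 8, chain_of_thought: bool = False) -> str:
    """Render demonstrations with one consistent Input/Reasoning/Output layout."""
    blocks = []
    for ex in examples[:max_examples]:  # cap the count; extra examples add little
        lines = [f"Input: {ex.input_text}"]
        if chain_of_thought and ex.reasoning:
            lines.append(f"Reasoning: {ex.reasoning}")
        lines.append(f"Output: {ex.output_text}")
        blocks.append("\n".join(lines))
    # The query uses the same layout and ends exactly where the model should continue.
    final_slot = "Reasoning:" if chain_of_thought else "Output:"
    blocks.append(f"Input: {query}\n{final_slot}")
    return "\n\n".join(blocks)


demos = [Example("2 + 3", "5", "2 plus 3 is 5."), Example("4 * 2", "8", "4 times 2 is 8.")]
print(format_prompt(demos, "6 - 1", chain_of_thought=True))
```

Keeping the layout in one function is what prevents mixed formats: every demonstration and the final query are rendered by the same code path.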

Companies like Salesforce and IBM use these principles to build customer service chatbots. They’ve reduced response times by 40% while maintaining 92% accuracy by using 4 carefully curated examples per query. Part of the appeal is that ICL requires no infrastructure changes, just smarter prompts.

What’s Next for In-Context Learning

Research is accelerating. Anthropic’s Claude 3.5 aims for a 1 million token context window by late 2024, which would ease the long-context problem. Google DeepMind and Meta AI are developing better example selection tools to cut the number of needed examples from 8 to 2-3. Warmup training (fine-tuning models between pretraining and inference using prompt-style examples) has already shown a 12.4% average improvement across NLP benchmarks.

Gartner predicts 85% of enterprise AI applications will use ICL as their primary adaptation method by 2026. Why? It’s faster and cheaper than fine-tuning. McKinsey reports average implementation time for ICL is 2.3 days versus 28.7 days for fine-tuning. For businesses needing quick AI deployment, this is a game-changer.

How is in-context learning different from fine-tuning?

In-context learning adapts models using examples in the prompt without changing any parameters. Fine-tuning adjusts the model’s weights through training on specific data, requiring more time and computational resources. ICL is faster and cheaper for one-off tasks, while fine-tuning suits persistent, specialized applications.

Do I need special tools to use in-context learning?

No. Any modern LLM like GPT-4, Llama 3.1, or Claude 3 supports ICL natively. You only need to structure your prompts correctly. Companies use simple prompt engineering tools or even just text editors to implement it. The real skill is choosing high-quality examples and formatting them well.

Can in-context learning handle complex reasoning?

Yes, but with caveats. Chain-of-thought prompting, where you ask the model to explain its steps, works well for math or logic problems. For instance, GPT-3’s accuracy on math problems jumped from 17.9% to 58.1% using this technique. However, extremely complex tasks like advanced scientific research still require fine-tuning or hybrid approaches.
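With chain-of-thought prompting, the reply mixes reasoning with the result, so the final answer usually has to be extracted. The sketch below assumes the exemplars end each worked solution with "The answer is N." and returns the last number stated that way; the pattern and function name are illustrative, not a standard API.

```python
from __future__ import annotations

import re
from typing import Optional

# ASSUMPTION: the chain-of-thought exemplars end each solution with "The answer is N."
ANSWER_PATTERN = re.compile(r"answer is\s*\$?(-?[\d,]*\.?\d+)", re.IGNORECASE)


def extract_final_answer(completion: str) -> Optional[str]:
    """Return the last number stated as 'answer is ...', with thousands commas removed."""
    matches = ANSWER_PATTERN.findall(completion)
    if not matches:
        return None  # the model did not follow the expected answer format
    return matches[-1].replace(",", "")


reply = ("Roger starts with 5 balls. 2 cans of 3 balls is 6 balls. "
         "5 + 6 = 11. The answer is 11.")
print(extract_final_answer(reply))  # 11
```

Taking the last match, rather than the first, tolerates replies where the model revises an earlier guess before settling on a result.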

Why does example quality matter so much?

LLMs rely on the examples to infer the task. Poor examples confuse the model. Studies show random examples can drop accuracy by 25% compared to relevant ones. For medical diagnosis, using examples from the same specialty (e.g., cardiology) instead of general medical text improves results by 30%. Always match examples to your specific use case.

Is in-context learning the same as few-shot learning?

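Mostly. The terms are often used interchangeably, and the GPT-3 paper describes its few-shot setting as in-context learning. Both refer to using a small number of examples within the prompt to adapt the model. Outside LLMs, though, "few-shot learning" also covers methods that update weights on a handful of labeled examples, so "in-context" is the more precise term: it emphasizes that the learning happens within the input context window during inference, not through parameter changes.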

Yashwanth Gouravajjula

February 7, 2026 AT 02:32

In-context learning is a game-changer for Indian startups: deploying AI solutions without retraining saves months of work.

Kevin Hagerty

February 7, 2026 AT 18:31

this in-context learning thing is just pattern matching. no retraining? more like copy-paste magic. lazy devs love it

Ashton Strong

February 8, 2026 AT 09:17

In-context learning represents a paradigm shift in how we interact with large language models.

Unlike traditional machine learning methods that require extensive retraining, this approach allows models to adapt on the fly using examples provided in the prompt.

The implications for industries like healthcare and finance are profound.

For example, a hospital can quickly deploy a system to classify medical reports by simply including a few examples in the prompt.

This avoids months of data collection and model retraining.

Recent research from MIT shows that LLMs don't just match patterns; they develop an internal understanding of the task.

Specifically, around layer 14 of the model, the system recognizes the task and optimizes processing.

This discovery has led to significant computational savings, up to 45% when using five examples.

The table in the post clearly shows how in-context learning outperforms zero-shot and one-shot methods in accuracy.

With just 2-8 well-chosen examples, it achieves 60-80% accuracy on specialized tasks.

Companies like Salesforce have reduced response times by 40% while maintaining high accuracy through careful prompt engineering.

However, it's important to note the limitations.

Poorly formatted prompts or irrelevant examples can drop accuracy by 25%.

Despite these challenges, the potential is enormous.

As context windows expand to over a million tokens, ICL will become even more powerful.

Gartner predicts 85% of enterprise AI will use ICL by 2026, and McKinsey reports implementation times of just 2.3 days compared to 28.7 for fine-tuning.

This technology is truly transforming how businesses adopt AI solutions.

Steven Hanton

February 8, 2026 AT 21:27

It's fascinating how LLMs recognize tasks around layer 14 during inference.

Understanding this process could lead to more efficient model architectures.

Optimizing computation after task recognition saves resources.

MIT's synthetic data research shows it's not just pattern matching.

The implications for complex reasoning are promising.

Example quality remains critical for consistent results.

This approach applies to non-English languages with different structures.

The key takeaway is smarter prompting replaces expensive fine-tuning.

Companies using ICL for customer service achieve 92% accuracy with minimal examples.

This democratizes AI for businesses without large budgets.

As context windows expand, ICL will handle more complex tasks.

Future research may focus on automated example selection.

Gartner's prediction of 85% enterprise adoption by 2026 is well-founded.

McKinsey's data on implementation time shows clear advantages.

This technology is truly transforming AI deployment strategies.

Pamela Tanner

February 10, 2026 AT 11:15

Proper prompt engineering is essential for effective in-context learning.

Consistent formatting like 'Input: ... Output: ...' ensures clarity.

Example order matters: placing difficult examples first improves performance by 7.3% in sentiment analysis.

Chain-of-thought prompting boosts accuracy on math problems significantly.

For instance, GPT-3's GSM8K accuracy jumped from 17.9% to 58.1% with this technique.

Always match examples to your specific use case; medical diagnosis requires cardiology-specific samples.

Random examples can drop accuracy by 25%, so quality is paramount.

Companies like IBM use these principles to build chatbots with 40% faster response times.

Remember: fewer examples often yield better results, and 2-8 is optimal.

Overloading with 16+ examples usually diminishes performance.

These best practices make ICL accessible even for non-experts.

With proper guidance, anyone can leverage this powerful technique.

For healthcare applications, a few well-chosen medical examples can replace months of data collection.

ICL's ability to adapt without retraining makes it ideal for niche domains.

Future advancements in context windows will further enhance its capabilities.

Kristina Kalolo

February 10, 2026 AT 16:38

In-context learning's computational savings are impressive: 45% with five examples.

Layer-wise analysis reveals task recognition around layer 14.

This knowledge allows for optimization in model processing.

However, context window limits pose challenges for long documents.

Task type also affects performance: classification works well, but complex reasoning may need fine-tuning.

Example quality is critical: random examples reduce accuracy by 25%.

Structured prompts with clear delimiters improve results.

Companies like Salesforce have successfully implemented ICL in chatbots.

Reduced response times and maintained accuracy demonstrate its value.

Future developments in example selection tools could further reduce required examples.

ICL's role in enterprise AI is growing rapidly.

Gartner's prediction of 85% adoption by 2026 is well-founded.

McKinsey's data on implementation time shows clear advantages.

For businesses needing quick deployment, ICL is the go-to solution.

Its simplicity makes it accessible even for small teams.

ravi kumar

February 10, 2026 AT 18:33

For Indian developers, in-context learning is a game-changer.

It allows quick adaptation without heavy infrastructure.

With just a few examples, we can deploy specialized AI models.

Companies like IBM and Salesforce use this for customer service.

It's impressive how much it reduces deployment time.

For example, a hospital in Mumbai used ICL to classify medical reports in days.

Previously, this would have taken months.

Even small teams can leverage this technology effectively.

Proper prompt engineering is key: quality examples matter.

Chain-of-thought prompting helps with complex tasks.

ICL makes AI accessible to everyone, not just big corporations.

Looking forward to seeing more innovations in this space.

It's amazing how layer-wise analysis optimizes computation.

MIT's research shows task recognition around layer 14.

Future advancements in context windows will expand possibilities.

Megan Blakeman

February 11, 2026 AT 19:34

In-context learning is AMAZING! It's like the AI learns on the spot! 🤯

Just a few examples, and boom! It works! 💯

For healthcare, it's a lifesaver! 🩺

Imagine classifying medical reports in minutes! ⏱️

But example quality is CRUCIAL! 😬

Random examples can drop accuracy by 25%, so careful selection! 🤔

Companies like Salesforce use it for chatbots! 🤖

Response times down by 40%, incredible! 🚀

ICL is the future! ✨

It's cheaper, faster, and easier than fine-tuning! 💪

Can't wait to see more applications! 😍

Layer-wise analysis is fascinating! 🧠

Task recognition around layer 14 optimizes processing! ⚙️

Future context windows will make it even better! 🌐

Akhil Bellam

February 12, 2026 AT 13:47

Most people don't grasp the true potential of in-context learning! 😤

It's not just 'pattern matching'; it's a sophisticated cognitive process! 🤯

Those who dismiss it as 'copy-paste magic' are intellectually bankrupt! 💀

Layer-wise analysis reveals profound neural architecture insights! 🧠

MIT's research proves it's not superficial! 📚

Real experts know that example quality is paramount! 🎯

Random examples? Amateurish! 😒

Companies like IBM and Salesforce leverage this with precision! 💼

McKinsey's data shows the staggering efficiency gains! 📈

Gartner's 85% adoption prediction is conservative! 🌟

True AI mastery requires understanding ICL's nuances! 🚀

Amateurs will fail-only the enlightened succeed! 🌌

Context window expansion will revolutionize enterprise AI! 💡

Those who ignore ICL will be left behind! 🔥

Amber Swartz

February 13, 2026 AT 02:27

Oh my goodness, in-context learning is EVERYTHING! 😱

It's like the AI is a genius! 🤯

But some people just don't get it! 😤

They say it's just pattern matching, BUT IT'S SO MUCH MORE! 💥

Layer 14! That's where the magic happens! 🔮

MIT research is proof! 📚

Example quality is CRITICAL! 😬

Random examples? Disaster! 😭

Companies like Salesforce? Total game-changers! 🤖

Response times down by 40%, UNBELIEVABLE! 🚀

McKinsey says 2.3 days vs 28.7 for fine-tuning! 😍

ICL is the FUTURE! ✨

Everyone should use it! 🌈

But those who don't understand? They're just not smart enough! 😒

This is the most exciting AI development ever! 🌟