When you ask a large language model a question without any examples, it’s called zero-shot prompting. Sometimes it works. Often, it doesn’t. You get vague answers, wrong formats, or responses that miss the point entirely. But when you give the model just two or three clear examples of what you want (few-shot prompting), accuracy often improves substantially, with gains of 15% to 40% commonly reported. That’s not a small win. It can be the difference between a usable result and one that needs hours of editing.

Why does this work? Because LLMs like GPT-4, Claude, and LLaMA aren’t just guessing. They’re pattern matchers. Trained on billions of text samples, they’re built to recognize structure, tone, and logic. Give them a few real-world examples, and they’ll copy the pattern - not memorize it, but adapt it. No training. No reprogramming. Just context.

How Few-Shot Prompting Actually Works

Few-shot prompting doesn’t change the model’s weights. It doesn’t require GPUs or data pipelines. All it does is slip a few input-output pairs into the prompt before your actual question. Think of it like showing a student two solved math problems before asking them to solve a third.
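Many chat-style APIs take the prompt as a list of role-tagged messages. A common way to do few-shot prompting there is to replay each example as a user turn followed by an assistant turn, then put the real question last. The sketch below only builds that list and calls no model. The `role`/`content` dictionary shape follows a widely used convention but is an assumption here, so check your provider's documentation. `build_few_shot_messages` is a hypothetical helper.

```python
from typing import Dict, List, Tuple

Message = Dict[str, str]


def build_few_shot_messages(
    instruction: str,
    examples: List[Tuple[str, str]],
    query: str,
) -> List[Message]:
    """Return a system instruction, one user/assistant pair per example,
    and finally the real query as an unanswered user turn."""
    messages: List[Message] = [{"role": "system", "content": instruction}]
    for example_input, example_output in examples:
        # Each demonstration: the input as a user turn, the ideal reply as an assistant turn.
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    # The actual question goes last, with no answer attached.
    messages.append({"role": "user", "content": query})
    return messages


msgs = build_few_shot_messages(
    "Answer with the capital city only.",
    [("France", "Paris"), ("Japan", "Tokyo")],
    "Canada",
)
```

The model sees the two answered pairs as prior conversation and continues the pattern for the final turn.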

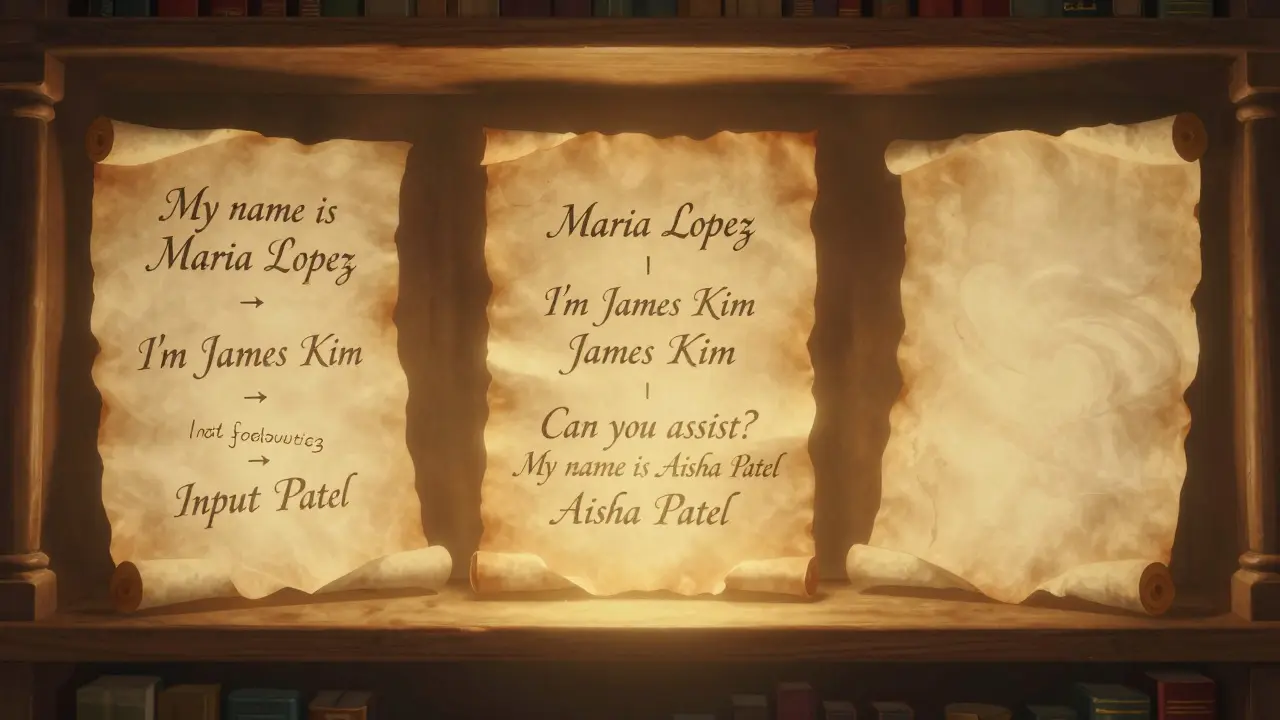

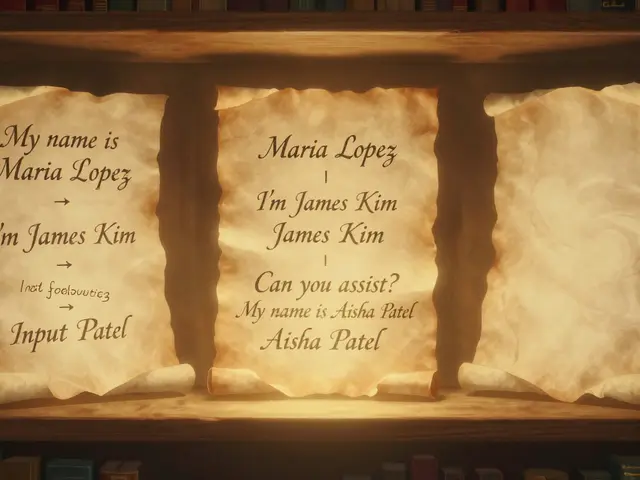

For example, if you want the model to extract names from customer emails, you might write:

- Input: "Hi, my name is Maria Lopez and I need help with my order #7892." Output: Maria Lopez

- Input: "Hello, I’m James Kim from Seattle. My account is inactive." Output: James Kim

- Input: "Can you assist? My name is Aisha Patel." Output: Aisha Patel

Then you add: "Extract the name from this email: \"Hi, I’m Tom Rivera and I’m having trouble logging in.\""

The model sees the pattern: find the first full name after "my name is" or "I’m". It doesn’t need to be told explicitly. It learns from context. That’s in-context learning - and it’s why few-shot prompting works so well.
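Written out as a single plain-text prompt, the name-extraction example might look like the sketch below. It labels each pair with `Input:` and `Output:` and ends on an open `Output:`, so the model's completion should be just the name. The instruction line and the helper name `build_prompt` are illustrative assumptions. Straight apostrophes replace the curly ones above.

```python
from typing import List, Tuple

# The three demonstrations from the text: (email, extracted name).
EXAMPLES: List[Tuple[str, str]] = [
    ("Hi, my name is Maria Lopez and I need help with my order #7892.", "Maria Lopez"),
    ("Hello, I'm James Kim from Seattle. My account is inactive.", "James Kim"),
    ("Can you assist? My name is Aisha Patel.", "Aisha Patel"),
]


def build_prompt(examples: List[Tuple[str, str]], query: str) -> str:
    lines = ["Extract the customer's full name from each email.", ""]
    for email, name in examples:
        lines.append(f'Input: "{email}"')
        lines.append(f"Output: {name}")
        lines.append("")  # blank line between demonstrations
    # The real email, with Output: left open for the model to complete.
    lines.append(f'Input: "{query}"')
    lines.append("Output:")
    return "\n".join(lines)


prompt = build_prompt(EXAMPLES, "Hi, I'm Tom Rivera and I'm having trouble logging in.")
print(prompt)
```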

The Hidden Trap: Too Many Examples

It’s tempting to throw in five, ten, even twenty examples. "More is better," you think. But research shows that’s not true. In fact, adding too many examples can make performance worse.

This is called the few-shot dilemma. Studies on GPT-4o, DeepSeek-V3, and LLaMA-3.2 found that performance peaks around 4-6 examples, then drops sharply after 8. Why? The model starts to overfit to the examples (loosely speaking, since no weights change): it treats them as rigid rules instead of flexible patterns, and it gets confused when the real input doesn’t match them closely.

Imagine teaching someone to drive by showing them 15 videos of cars turning left on wet roads. Then you ask them to turn right on dry pavement. They might freeze - because all their training was about one scenario.

The fix? Less is more. Stick to 2-5 high-quality examples. Quality beats quantity every time.

Selecting the Right Examples

Not all examples are created equal. Random examples? They often backfire. Biased examples? They lead to skewed outputs. You need examples that represent the full range of what you’ll encounter.

For a sentiment analysis task, don’t just use happy and sad examples. Include:

- Sarcastic: "Oh great, another meeting at 8 a.m."

- Neutral: "The package arrived on Tuesday."

- Confused: "I’m not sure if this is a refund or a charge."

- Angry: "This is the third time this has happened."

These variations help the model generalize. They teach it how to handle edge cases - not just the obvious ones.
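One simple way to guarantee that coverage is to pick examples by label instead of at random. The sketch assumes you already have a pool of hand-labeled texts. The label names, the extra `positive` entries, and the helper `one_per_label` are hypothetical.

```python
from typing import Dict, List, Tuple

Example = Tuple[str, str]  # (text, label)


def one_per_label(pool: List[Example], labels: List[str]) -> List[Example]:
    """Pick the first example for each requested label, in label order.
    Raises if a label has no example, so coverage gaps surface early."""
    first_by_label: Dict[str, Example] = {}
    for text, label in pool:
        # setdefault keeps only the first example seen for each label.
        first_by_label.setdefault(label, (text, label))
    missing = [label for label in labels if label not in first_by_label]
    if missing:
        raise ValueError(f"no example for labels: {missing}")
    return [first_by_label[label] for label in labels]


POOL: List[Example] = [
    ("Love it, works perfectly.", "positive"),
    ("Oh great, another meeting at 8 a.m.", "sarcastic"),
    ("The package arrived on Tuesday.", "neutral"),
    ("Absolutely love the new design!", "positive"),
    ("I'm not sure if this is a refund or a charge.", "confused"),
    ("This is the third time this has happened.", "angry"),
]

chosen = one_per_label(POOL, ["sarcastic", "neutral", "confused", "angry"])
```

Failing loudly on a missing label is a deliberate choice: a silently skipped category is exactly the kind of gap that produces skewed outputs.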

Research shows that using TF-IDF to select examples improves performance over random selection or semantic embedding. TF-IDF weights each word by how often it appears in a text and how rare it is across the whole pool of candidates. Examples that share distinctive words with your input rank highest, while common filler words count for little. This filters out noise and picks the examples that matter most. In one study, TF-IDF-selected examples improved classification accuracy by 1% over state-of-the-art methods: a small number, but meaningful when every percentage point counts.
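Here is a minimal, dependency-free sketch of TF-IDF-based selection. It vectorizes the candidate pool together with the incoming query, then keeps the `k` candidates with the highest cosine similarity to the query. The study above doesn't spell out its exact procedure, so treat this as an illustration of the general idea. The tiny `STOPWORDS` set and the function names are assumptions, and in practice a library vectorizer would normally replace the hand-rolled one.

```python
import math
import re
from collections import Counter
from typing import Dict, List

# Hypothetical, deliberately tiny stopword list; real projects usually use a fuller one.
STOPWORDS = {"a", "an", "the", "i", "my", "is", "for", "but", "to", "and", "of",
             "do", "how", "when", "where"}


def tokenize(text: str) -> List[str]:
    return [t for t in re.findall(r"[a-z0-9']+", text.lower()) if t not in STOPWORDS]


def tfidf_vectors(docs: List[str]) -> List[Dict[str, float]]:
    tokenized = [tokenize(doc) for doc in docs]
    n = len(docs)
    df: Counter = Counter()  # number of documents containing each term
    for tokens in tokenized:
        df.update(set(tokens))
    vectors = []
    for tokens in tokenized:
        tf = Counter(tokens)  # raw counts; cosine similarity normalizes for length
        # Smoothed idf keeps every weight positive: log((1 + n) / (1 + df)) + 1.
        vectors.append({t: c * (math.log((1 + n) / (1 + df[t])) + 1) for t, c in tf.items()})
    return vectors


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def select_examples(query: str, candidates: List[str], k: int = 3) -> List[str]:
    # Document frequencies are computed over the candidates plus the query itself.
    vectors = tfidf_vectors(candidates + [query])
    query_vec = vectors[-1]
    scored = [(cosine(query_vec, vec), i) for i, vec in enumerate(vectors[:-1])]
    # Highest similarity first; ties keep the original pool order.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [candidates[i] for _, i in scored[:k]]


candidates = [
    "Refund not received after returning the item",
    "The app crashes when I open settings",
    "Where is my refund for the returned shoes",
    "How do I change my password",
]
best = select_examples("I returned my order but the refund never arrived", candidates, k=2)
print(best)
```

On this toy pool the refund-and-returns example ranks first because it shares the distinctive words "refund" and "returned" with the query. Pronouns and articles are dropped by the stopword list because, on a pool this small, they would otherwise pull unrelated examples (like the password one) up the ranking.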

Chain-of-Thought: The Secret Weapon

Few-shot prompting gets better when you add chain-of-thought. Instead of just showing input → output, show the reasoning steps in between.

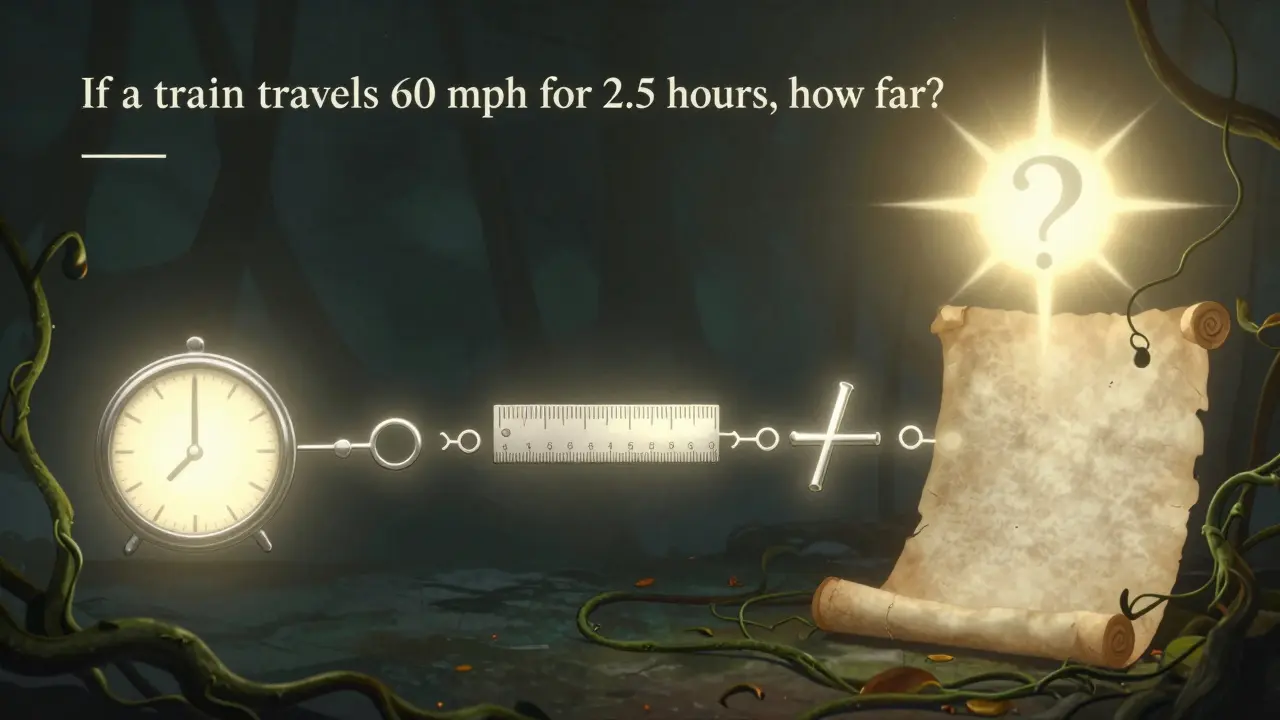

For math problems:

- Input: "If a train travels 60 mph for 2.5 hours, how far does it go?" Output: "Distance = speed × time. 60 × 2.5 = 150. So the train travels 150 miles."

For customer support:

- Input: "I ordered a blue shirt but got a red one." Output: "First, confirm the order number. Then check the shipping details for color mismatch. Next, apologize and offer a return label. Finally, ensure the replacement ships within 24 hours."

This teaches the model how to think - not just what to say. When combined with few-shot examples, chain-of-thought boosts accuracy on complex tasks by up to 30%. It’s especially powerful for logic puzzles, legal reasoning, or multi-step instructions.
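A few-shot chain-of-thought prompt can be assembled like a plain one, with a reasoning line between each question and its answer. The sketch below leaves the final `Reasoning:` open so the model writes its steps first. It also includes a small parser that pulls out the last `Answer:` line. The `Q:`/`Reasoning:`/`Answer:` labels and the second (discount) example are assumptions for illustration.

```python
import re
from typing import List, Optional, Tuple

# (question, reasoning, final answer) triples used as demonstrations.
COT_EXAMPLES: List[Tuple[str, str, str]] = [
    ("If a train travels 60 mph for 2.5 hours, how far does it go?",
     "Distance = speed * time. 60 * 2.5 = 150.",
     "150 miles"),
    # Hypothetical second example, added for illustration.
    ("A $20 shirt is 25% off. What is the sale price?",
     "25% of 20 is 5. 20 - 5 = 15.",
     "$15"),
]


def build_cot_prompt(examples: List[Tuple[str, str, str]], question: str) -> str:
    blocks = [f"Q: {q}\nReasoning: {r}\nAnswer: {a}" for q, r, a in examples]
    # Leave "Reasoning:" open so the model writes its steps before answering.
    blocks.append(f"Q: {question}\nReasoning:")
    return "\n\n".join(blocks)


def extract_answer(completion: str) -> Optional[str]:
    """Return the text of the last 'Answer:' line, or None if there is none."""
    matches = re.findall(r"^Answer:[ \t]*(.+)$", completion, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


prompt = build_cot_prompt(COT_EXAMPLES, "A car drives 45 mph for 2 hours. How far does it go?")
```

Taking the last `Answer:` line guards against a model that restates an example's answer while reasoning.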

When to Use Few-Shot vs. Fine-Tuning vs. RAG

You don’t always need few-shot prompting. Sometimes, another approach is better.

| Scenario | Few-Shot Prompting | Fine-Tuning | RAG |
|---|---|---|---|
| Task complexity | Medium - needs context or formatting | High - requires deep pattern learning | High - needs real-time data |
| Data needed | 2-5 examples | Thousands of labeled examples | Large knowledge base |
| Time to results | Instant | Days to weeks | Seconds (after indexing) |
| Cost | Low (no training) | High (compute + data) | Medium (embedding + retrieval) |
| Best for | Formatting, task adaptation, quick tests | High-volume, single-task systems | Dynamic info like stock prices, news, support docs |

Few-shot prompting shines when you need quick, low-cost improvements. Fine-tuning is better if you’re running the same task 10,000 times a day. RAG wins when your answers must pull from live data - like customer records or real-time inventory.

Best Practices You Can’t Ignore

- Start simple, then add complexity. Order examples from easy to hard. This helps the model build understanding step by step.

- Test on real inputs. Don’t just trust your first result. Try 10-20 new prompts. If accuracy drops, your examples are too narrow.

- Avoid repetition. Don’t give five examples that are almost identical. Variety teaches generalization.

- Use clear formatting. Label inputs and outputs clearly. Use "Input:" and "Output:". Consistency helps the model parse structure.

- Don’t over-prompt. If you’re using 8+ examples, you’re probably hurting performance. Cut back.
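Several of these practices can be applied mechanically before a prompt is assembled. The sketch below drops near-duplicates by word-overlap (Jaccard) similarity, caps the count, and then orders what remains from easy to hard. Using input length as a stand-in for difficulty, the 0.8 duplicate threshold, and the default cap of 5 are all assumptions. The sketch also assumes the input list is already ranked by relevance, so capping keeps the best candidates.

```python
import re
from typing import List, Tuple

Example = Tuple[str, str]  # (input text, expected output)


def jaccard(a: str, b: str) -> float:
    """Word-overlap similarity between two texts, from 0.0 to 1.0."""
    sa = set(re.findall(r"[a-z0-9]+", a.lower()))
    sb = set(re.findall(r"[a-z0-9]+", b.lower()))
    union = sa | sb
    return len(sa & sb) / len(union) if union else 1.0


def curate(examples: List[Example], max_examples: int = 5,
           dup_threshold: float = 0.8) -> List[Example]:
    kept: List[Example] = []
    for example in examples:
        # Skip anything too similar to an example already kept.
        if all(jaccard(example[0], other[0]) < dup_threshold for other in kept):
            kept.append(example)
    kept = kept[:max_examples]  # assumes the input is already ranked by relevance
    # Easy-to-hard ordering, using input length as a rough (assumed) difficulty proxy.
    return sorted(kept, key=lambda example: len(example[0]))


examples: List[Example] = [
    ("Package late by two days, no tracking update", "delay"),
    ("package late by two days no tracking update", "delay"),  # near-duplicate
    ("Lost", "lost"),
    ("Driver said the address was wrong", "address_issue"),
]
print([label for _, label in curate(examples)])
```

Capping before sorting matters: sorting first and then truncating would systematically discard the hardest examples.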

One team at a logistics startup tried 12 examples to classify delivery delays. Accuracy was 68%. They cut it to 4 well-chosen examples, and accuracy jumped to 89%. The model wasn’t starved of data; with fewer, better examples, the pattern it had to follow was simply clearer.
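To check a result like this on your own task, score the same held-out inputs with different numbers of examples. The harness below does that. `stub_model` is a placeholder for whatever client call you actually use. Exact-match scoring on lowercased labels is an assumption that fits classification-style outputs, not free text. The delivery labels are hypothetical.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Example = Tuple[str, str]  # (input text, expected label)


def simple_prompt(shots: Sequence[Example], text: str) -> str:
    """Format shots as Input/Output pairs, ending on an open Output:."""
    lines = [f"Input: {i}\nOutput: {o}" for i, o in shots]
    lines.append(f"Input: {text}\nOutput:")
    return "\n".join(lines)


def accuracy(model: Callable[[str], str], shots: Sequence[Example],
             test_set: Sequence[Example]) -> float:
    """Fraction of test inputs whose prediction exactly matches the label."""
    if not test_set:
        raise ValueError("test_set must not be empty")
    correct = 0
    for text, expected in test_set:
        prediction = model(simple_prompt(shots, text)).strip().lower()
        correct += int(prediction == expected.strip().lower())
    return correct / len(test_set)


def sweep(model: Callable[[str], str], pool: List[Example],
          test_set: Sequence[Example], counts=(2, 4, 8, 12)) -> Dict[int, float]:
    """Accuracy for each shot count; counts larger than the pool are skipped."""
    return {k: accuracy(model, pool[:k], test_set) for k in counts if k <= len(pool)}


def stub_model(prompt: str) -> str:
    # Placeholder for a real LLM call: always answers "delay".
    return "delay"


pool: List[Example] = [
    ("Truck stuck in traffic for three hours", "delay"),
    ("Customer gave the wrong street number", "address_issue"),
    ("Box arrived crushed", "damaged"),
]
test_set: List[Example] = [("Driver's van broke down", "delay"),
                           ("House number missing", "address_issue")]
results = sweep(stub_model, pool, test_set, counts=(1, 2, 3, 4))
print(results)
```

With a real model plugged in, plotting `results` against the shot count shows where your own peak sits, rather than relying on a general rule of thumb.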

Final Thought: It’s Not Magic. It’s Design.

Few-shot prompting isn’t about tricking the model. It’s about designing the right context. Think of it like writing instructions for a new employee. You wouldn’t hand them a 50-page manual. You’d show them three real cases, explain the pattern, and let them try.

That’s all this is. A smarter way to communicate with machines. No training needed. No infrastructure. Just better prompts.

Start with two examples. Test. Adjust. Repeat. You’ll be surprised how much better your results get, without spending anything on training.

What’s the difference between zero-shot and few-shot prompting?

Zero-shot prompting gives the model no examples - just the task. Few-shot prompting gives it 2-8 examples of what you want. Few-shot typically improves accuracy by 15-40% because it shows the model the pattern instead of just asking it to guess.

Can I use few-shot prompting with any LLM?

Yes. It works with GPT-4, Claude, LLaMA, Mistral, Gemini, and others. But performance varies. Some models handle 5 examples well; others peak at 3. Test with your specific model to find the sweet spot.

How many examples should I use?

Start with 2-4. For simple tasks, that’s enough. For complex reasoning or edge cases, try 5-6. Avoid going beyond 8 - more examples often hurt performance due to over-prompting. Use TF-IDF to pick the most relevant ones.

Does the order of examples matter?

Yes. Put simple examples first, then build up to harder ones. This helps the model learn progressively. Random order can confuse it. A clear progression improves generalization.

Why does chain-of-thought help with few-shot prompting?

Chain-of-thought shows the reasoning steps before the final answer. It prompts the model to work through problems step by step instead of jumping straight to an answer; no actual training is involved. This is especially useful for math, logic, or multi-step tasks. Combined with few-shot examples, it can boost accuracy by 20-30% on hard problems.

Is few-shot prompting better than fine-tuning?

It depends. Few-shot is faster, cheaper, and works with minimal data. Fine-tuning can deliver higher accuracy but needs thousands of labeled examples and days to weeks of training. Use few-shot for quick wins. Use fine-tuning when you’re running the same task at scale.

Eka Prabha

February 27, 2026 at 10:37

Let me get this straight - we’re paying billions to train models, then asking them to ‘learn from context’ like a toddler who just saw two cartoons? This isn’t ‘in-context learning’ - it’s a band-aid on a leaking dam. The model isn’t ‘pattern-matching’ - it’s hallucinating based on statistical noise. And TF-IDF? Please. You’re telling me a 1970s IR technique outperforms transformer-based embeddings? That’s like using a slide rule to navigate a nuclear submarine. They’re not ‘improving accuracy’ - they’re just lucky the examples happened to align with the training distribution. This whole field is a house of cards built on correlation, not causation.

Bharat Patel

February 27, 2026 at 17:00

I find this deeply beautiful - the idea that we don’t need to reprogram machines, just show them a few quiet examples of what we mean. It’s like teaching a child to tie their shoes by doing it beside them, not by writing a manual. The model isn’t ‘learning’ in the human sense - but it’s responding to rhythm, to pattern, to intention. There’s poetry in that. We’ve spent so long treating AI like a calculator, when really, it’s more like a mirror. Give it clarity, and it reflects clarity. Give it chaos, and it mirrors chaos. Maybe the real breakthrough isn’t in the prompt - it’s in us learning how to be clearer.

Bhagyashri Zokarkar

February 28, 2026 at 23:34

okay so i was trying this few shot thing with my chatbot for customer service and it kept saying "i dont know" even when i gave it like 7 examples and then i realized oh wait maybe it's because the examples were too clean like no typos no slang no half sentences and real people don't talk like that so i threw in some messy ones like "u there??" "help plz" "its broken again lol" and suddenly it got way better?? like i swear it started understanding sarcasm?? idk if this is right but it worked??

Rakesh Dorwal

March 1, 2026 at 06:33

Let’s be real - this ‘few-shot’ nonsense is just Western tech firms trying to make AI look ‘accessible’ while hiding the fact that they’re still feeding models on billions of dollars’ worth of data. You think GPT-4 didn’t see millions of customer emails before you gave it 3 examples? Of course it did. This isn’t ‘in-context learning’ - it’s a PR stunt. And TF-IDF? That’s a relic from the era when Indian engineers were told to ‘optimize’ by cutting corners. If you want real performance, you need real training - not these cute little demos. We don’t need tricks. We need sovereignty. Build your own models. Train them on Indian data. Stop begging for scraps from Silicon Valley.

Vishal Gaur

March 1, 2026 at 18:03

so i tried the 2-5 examples thing like the post said and it worked kinda but then i added one more example because i was like ‘eh why not’ and suddenly the model started outputting random numbers and then i deleted the extra one and it went back to normal so yeah like the post said - less is more. also i think the order matters because when i put the hard example first it got confused but when i put easy ones first it was like ‘ohhh i get it now’ so yeah start simple. also my cat walked on my keyboard during one test and it gave a better answer?? not sure if that’s a thing or if i’m just delusional.

Nikhil Gavhane

March 2, 2026 at 05:43

This is one of the clearest, most thoughtful breakdowns of few-shot prompting I’ve seen. The part about chain-of-thought especially resonated - showing the reasoning steps feels like giving someone a map instead of just a destination. I’ve been using this with my students, and the difference is night and day. One student said, ‘It’s like the model finally stopped guessing and started thinking.’ That’s the moment you know this isn’t just technical - it’s pedagogical. Thank you for emphasizing quality over quantity. Too many people think more examples = better results. But you’re right: it’s about intentionality. Keep sharing this kind of insight. It matters.