Tag: LLMs

In-Context Learning Explained: How LLMs Learn from Prompts Without Training

In-Context Learning allows LLMs to adapt to new tasks from examples placed in the prompt, with no retraining needed. Discover how it works, its benefits and limitations, and where it is used in real-world AI applications today.

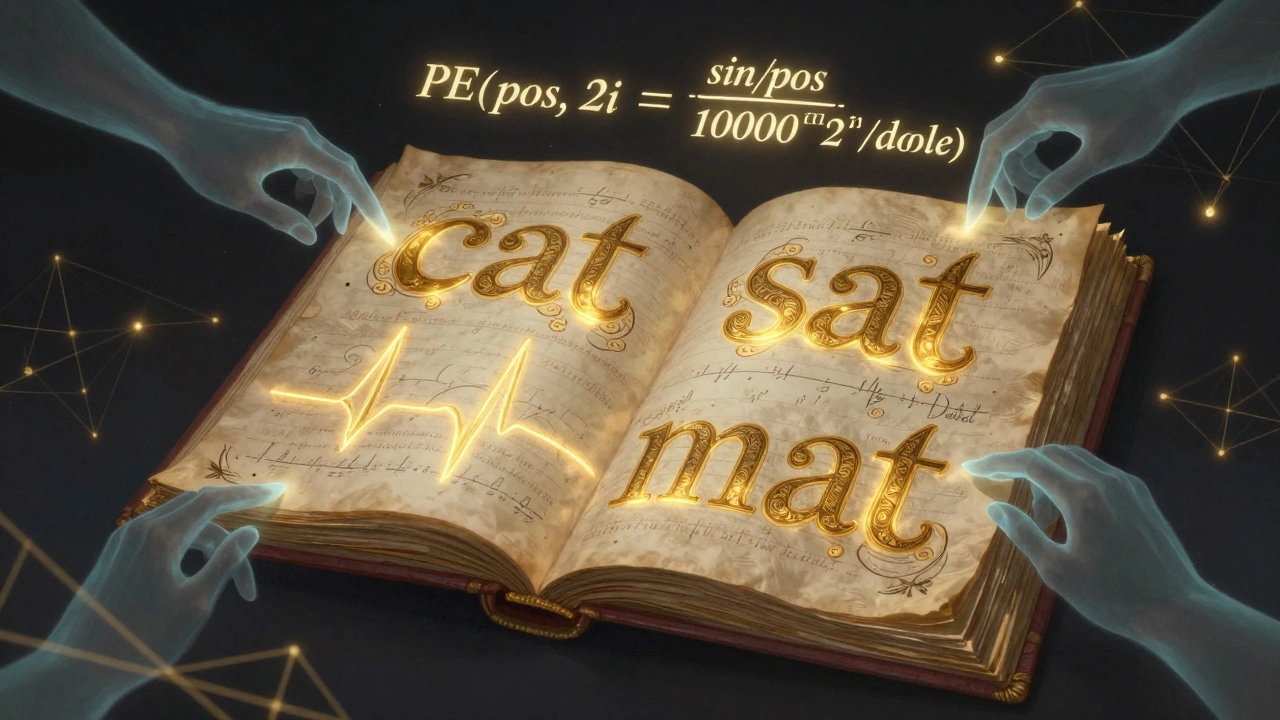

Positional Encoding in Transformers: Sinusoidal vs Learned for Large Language Models

Sinusoidal and learned positional encodings were the original ways transformers handled word order. Today, they have largely been superseded: most modern LLMs use RoPE or ALiBi instead, which handle long contexts considerably better. Here's what you need to know.