Tag: LLM inference costs

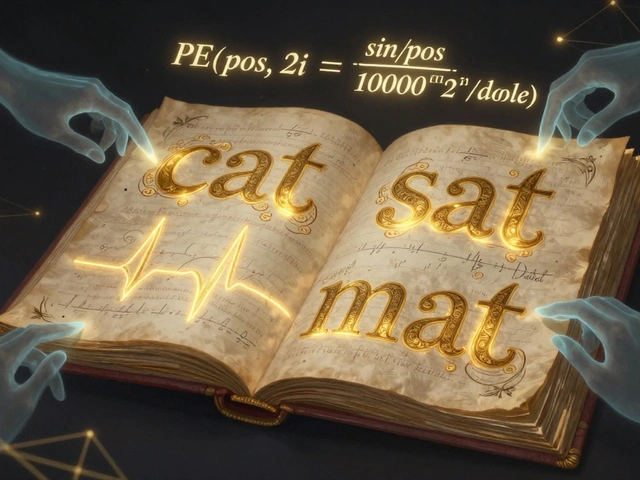

Cut RAG Costs: Optimize Embeddings, Storage, and Context Budgets

Discover how to cut RAG pipeline costs by optimizing LLM context budgets, embedding quantization, and vector storage. Learn why LLM inference dominates expenses and how to prioritize savings effectively.