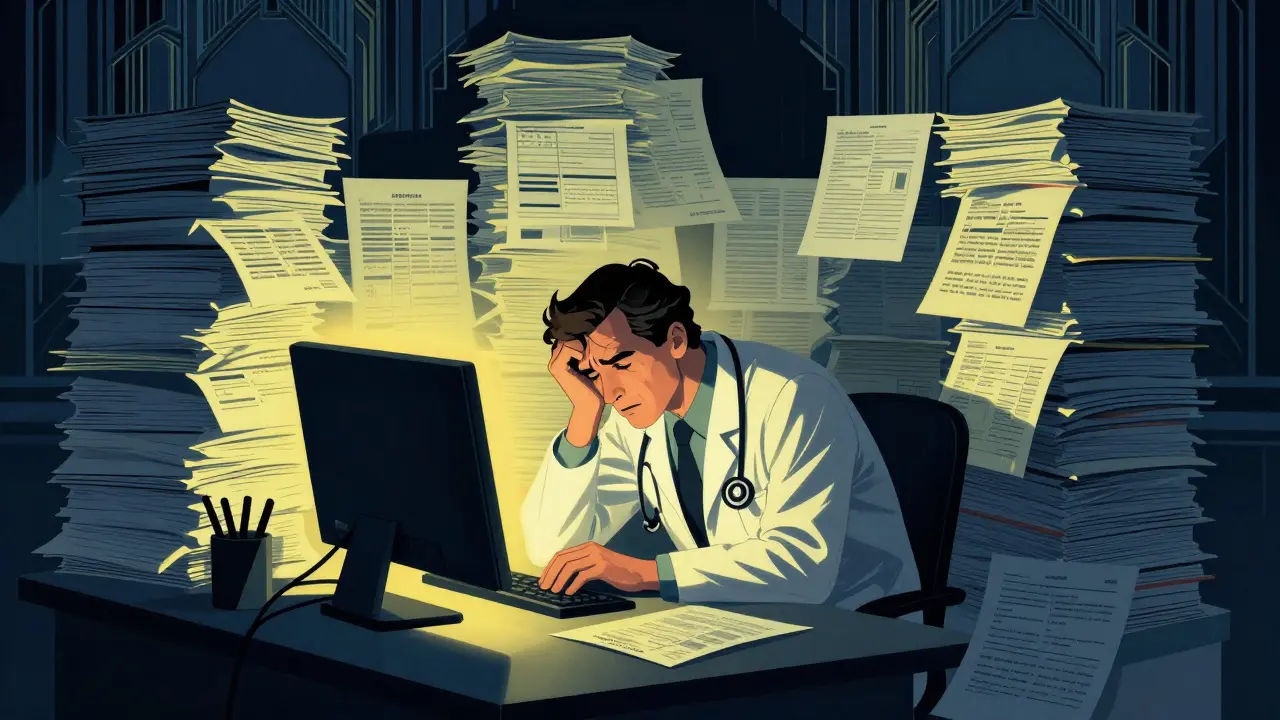

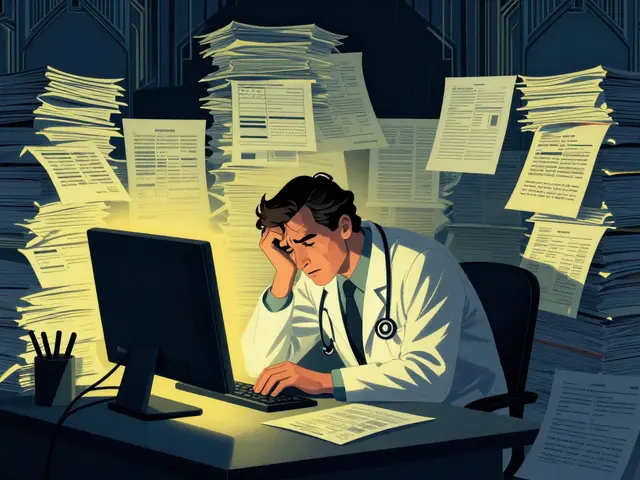

Imagine a doctor spending two hours every night staring at a screen, typing notes instead of sleeping or spending time with family. This isn't a hypothetical scenario; it's the daily reality for thousands of clinicians. But what if a machine could listen to a patient visit and draft a perfect clinical note in real time? Or better yet, what if an AI could help sort through a crowded waiting room to identify the most critical patients before they even see a nurse? This is where Healthcare Large Language Models, a class of AI systems trained on massive medical corpora to process and generate clinical text, come into play. While the hype is huge, actual implementation in hospitals is a mix of incredible time savings and some scary "hallucinations" that keep medical boards up at night.

The Quick Take on AI in Medicine

- Documentation: AI can cut note-writing time by nearly 50%, tackling physician burnout.

- Triage: LLMs now nearly match untrained doctors in how closely their urgency ratings agree with professional triage nurses.

- The Big Risk: AI tends to "overtriage" (playing it too safe) and can exhibit racial bias in urgency scoring.

- Adoption: Only about 15% of US hospitals have fully integrated these tools as of late 2023.

Killing the Paperwork Monster: AI Documentation

For most doctors, the Electronic Health Record (or EHR) is the most hated part of the job. A 2023 Mayo Clinic study found that clinicians spend 1-2 hours of unpaid time daily just on documentation. LLMs are changing this by acting as a digital scribe. Instead of clicking boxes, a doctor can have a natural conversation with a patient while the AI captures the essence of the visit.

When we look at the numbers, the difference between model generations is stark. GPT-4-based assistants reduce note-writing time by 48%, while the older GPT-3.5 managed only a 29% reduction. Tools like Nuance DAX Copilot, a commercial AI documentation tool used in hospitals to automate clinical note creation, have reached an 89% accuracy rate in real-world settings. At Massachusetts General Hospital, physicians reported saving an average of 1.8 hours per 10-hour shift. That's nearly two hours of life given back to the doctor every single day.
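To make the two reduction rates concrete, here is a small sketch that converts them into minutes saved per day. It assumes a 120-minute daily documentation baseline (the upper end of the 1-2 hours cited above); the function and dictionary names are illustrative only.

```python
# Convert the cited note-writing reductions into daily minutes saved.
# ASSUMPTION: a 120-minute baseline, taken from the upper end of the
# "1-2 hours daily" figure; real baselines vary by specialty and clinic.

REDUCTION_RATES = {
    "GPT-4 assistant": 0.48,
    "GPT-3.5 assistant": 0.29,
}


def minutes_saved(baseline_minutes: float, reduction: float) -> float:
    """Return minutes saved per day for a fractional reduction in note time."""
    return baseline_minutes * reduction


if __name__ == "__main__":
    baseline = 120.0  # assumed daily documentation time, in minutes
    for model, rate in REDUCTION_RATES.items():
        saved = minutes_saved(baseline, rate)
        print(f"{model}: about {saved:.1f} minutes saved per day")
```

Under that assumption the gap between generations is roughly 58 versus 35 minutes a day.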

However, it's not all sunshine. About 41% of doctors still worry about the accuracy of these notes. Users on forums like r/medicine have described cases where the AI "hallucinated" a medication the doctor never mentioned. In a medical setting, a "hallucination" isn't just a glitch; it's a potential drug interaction that could kill a patient. This is why the "human-in-the-loop" model, where the doctor reviews and signs off on every word, is non-negotiable.
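As a rough illustration of one safeguard a reviewing clinician could lean on, here is a deliberately naive check that flags medications appearing in an AI draft but nowhere in the visit transcript. The tiny medication lexicon and the function names are hypothetical; this is not how Nuance DAX or any specific product works, and real systems would need a proper drug vocabulary and clinical language processing.

```python
import re

# HYPOTHETICAL, hand-written lexicon for illustration only. A real system
# would use a curated drug vocabulary (e.g. RxNorm) and clinical NLP.
KNOWN_MEDICATIONS = {"metformin", "lisinopril", "warfarin", "amoxicillin", "atorvastatin"}


def mentioned_medications(text: str) -> set[str]:
    """Return lexicon medications that appear as whole words in the text."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return words & KNOWN_MEDICATIONS


def unsupported_medications(transcript: str, draft_note: str) -> set[str]:
    """Medications in the AI draft that never came up in the visit transcript.

    Anything returned should be flagged for the clinician to confirm or
    delete before the note is signed.
    """
    return mentioned_medications(draft_note) - mentioned_medications(transcript)


if __name__ == "__main__":
    transcript = "Patient reports taking metformin twice daily. No other medications."
    draft = "Current medications: metformin 500 mg BID, warfarin 5 mg daily."
    print(unsupported_medications(transcript, draft))  # {'warfarin'}
```

A check like this only narrows where the reviewer should look; it cannot replace reading the note.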

Sorting the Chaos: AI-Powered Triage

Triage is the art of deciding who gets seen first. In a packed ER, a mistake here can be fatal. Medical triage, the process of prioritizing patients based on the severity of their condition, is now being augmented by LLMs. Using the Manchester Triage System as a benchmark, GPT-4 has shown a kappa agreement score of 0.67 with professional nurses. To put that in perspective, an untrained doctor scores almost the same (0.68).
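Kappa corrects raw agreement for the agreement two raters would reach by chance alone. The sketch below computes unweighted Cohen's kappa on hypothetical Manchester Triage System levels (encoded 1 to 5). The article does not say which kappa variant the GPT-4 study used, and ordinal triage studies often use a weighted kappa instead, so treat this as an illustration of the idea rather than a reproduction.

```python
from collections import Counter


def cohens_kappa(rater_a: list[int], rater_b: list[int]) -> float:
    """Unweighted Cohen's kappa between two raters' category labels.

    kappa = (p_o - p_e) / (1 - p_e), where p_o is observed agreement and
    p_e is the agreement expected by chance from each rater's label frequencies.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(rater_a)
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    # Chance agreement: product of each rater's marginal frequency per category.
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both raters used one identical label for every case
    return (p_o - p_e) / (1 - p_e)


if __name__ == "__main__":
    nurse = [1, 2, 3, 3, 4, 5, 2, 3]  # hypothetical reference levels
    llm = [1, 2, 2, 3, 4, 4, 2, 3]    # hypothetical model levels
    print(round(cohens_kappa(nurse, llm), 2))  # 0.67
```

With these made-up labels the raters agree on 6 of 8 patients (p_o = 0.75), chance agreement is 15/64, and kappa works out to 33/49, or about 0.67.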

But AI and humans fail in different ways. Humans tend to "undertriage," missing the subtle signs of a heart attack and assigning a low urgency. AI does the opposite: in about 23% of cases it "overtriages," assigning high urgency to patients who aren't actually in critical danger. While overtriage is safer than undertriage, it can lead to ER overcrowding and wasted resources.
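Overtriage and undertriage are simply the two directions of disagreement with a reference standard. Here is a minimal sketch, assuming levels are encoded 1 (most urgent) to 5 (least urgent) and that a nurse-assigned level serves as the reference; the data and names are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class TriageRates:
    overtriage: float   # share of cases rated MORE urgent than the reference
    undertriage: float  # share of cases rated LESS urgent than the reference
    exact: float        # share of cases matching the reference level


def triage_error_rates(predicted: list[int], reference: list[int]) -> TriageRates:
    """Compare predicted triage levels against a reference standard.

    ASSUMPTION: levels run 1 (most urgent) to 5 (least urgent), so a predicted
    level lower than the reference counts as overtriage.
    """
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("need two equal-length, non-empty level lists")
    n = len(predicted)
    over = sum(p < r for p, r in zip(predicted, reference))
    under = sum(p > r for p, r in zip(predicted, reference))
    return TriageRates(over / n, under / n, (n - over - under) / n)


if __name__ == "__main__":
    nurse = [3, 3, 4, 2, 5, 3, 4, 1]  # hypothetical reference levels
    llm = [2, 3, 3, 2, 5, 2, 4, 1]    # hypothetical model levels
    print(triage_error_rates(llm, nurse))
```

In this example the model rates 3 of 8 patients as more urgent than the reference (37.5% overtriage) and never rates anyone as less urgent, which is the failure pattern described above.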

The biggest red flag in AI triage is bias. A study published on arXiv found a 14.7-percentage-point gap in performance across racial groups: Black and Hispanic patients were systematically given lower urgency scores than clinically warranted. If the training data contains human bias, the AI doesn't just learn it; it scales it.
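One way to surface a gap like this is to break an error rate down by demographic group and compare the extremes. This sketch uses undertriage rate as the metric with hypothetical group labels "A" and "B"; the arXiv study's exact performance metric is not described here, so this is an illustrative audit, not a reproduction of that study.

```python
from collections import defaultdict
from collections.abc import Iterable


def undertriage_rate_by_group(records: Iterable[tuple[str, int, int]]) -> dict[str, float]:
    """records: (group, predicted_level, reference_level) tuples.

    ASSUMPTION: level 1 = most urgent, so predicted > reference is undertriage.
    Returns {group: undertriage rate in percent}.
    """
    totals: dict[str, int] = defaultdict(int)
    under: dict[str, int] = defaultdict(int)
    for group, predicted, reference in records:
        totals[group] += 1
        if predicted > reference:
            under[group] += 1
    return {g: 100.0 * under[g] / totals[g] for g in totals}


def max_gap_points(rates: dict[str, float]) -> float:
    """Largest difference between any two groups, in percentage points."""
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    records = [
        ("A", 3, 3), ("A", 2, 2), ("A", 1, 2), ("A", 4, 4),
        ("B", 3, 3), ("B", 4, 3), ("B", 2, 2), ("B", 5, 5),
    ]
    rates = undertriage_rate_by_group(records)
    print(rates, max_gap_points(rates))
```

Here group B is undertriaged in 25% of its cases and group A in none, a 25-point gap; on real data the same breakdown would need far larger samples and confidence intervals before drawing conclusions.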

| Feature | Commercial (e.g., Nuance DAX) | Specialized Medical (e.g., Med-PaLM 2) | General Purpose (e.g., GPT-4) |
|---|---|---|---|
| Documentation Accuracy | ~89% | ~82% | High (but needs prompts) |
| Implementation Effort | Low (Plug & Play) | High (Technical Expertise) | Medium |
| Customization | Limited | Very High | Moderate |
| Integration | Proprietary Hardware | API-based | API-based |

Under the Hood: How These Models Actually Work

You can't just take a standard chatbot and put it in a hospital. General LLMs are trained on the internet, which includes a lot of medical misinformation. Healthcare LLMs instead undergo domain-specific pre-training (training a model on a specialized dataset, such as medical literature, to improve accuracy in a specific field) using 5-10 billion tokens of clinical text, de-identified records, and medical guidelines.

Models like Med-PaLM 2, a Google-developed LLM fine-tuned specifically for medical question answering and clinical vignettes, don't just read text; they also undergo Reinforcement Learning from Human Feedback (RLHF). This means human doctors grade the AI's answers, teaching it not just what is grammatically correct but what is clinically safe. This process improves clinical relevance by about 31% over models trained on text alone.
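At the core of RLHF is a reward model trained on human preferences: shown two candidate answers, a doctor marks the better one, and the reward model is penalized when it scores the rejected answer higher. The sketch shows the standard pairwise (Bradley-Terry style) loss used for that step in general RLHF pipelines. The article does not describe Med-PaLM 2's training details, so this is a generic illustration, not Google's method.

```python
import math


def pairwise_preference_loss(reward_preferred: float, reward_rejected: float) -> float:
    """Pairwise reward-model loss: -log(sigmoid(r_preferred - r_rejected)).

    Small when the reward model already scores the clinician-preferred answer
    higher; large when it favors the answer the clinician rejected.
    """
    margin = reward_preferred - reward_rejected
    # -log(sigmoid(m)) equals softplus(-m); both branches compute it without
    # overflowing math.exp for large positive or negative margins.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


if __name__ == "__main__":
    # Reward model agrees with the doctor: low loss.
    print(pairwise_preference_loss(2.0, 0.0))
    # Reward model prefers the answer the doctor rejected: high loss.
    print(pairwise_preference_loss(0.0, 2.0))
```

Averaging this loss over many clinician-ranked pairs and minimizing it is what turns "doctors grade the answers" into a training signal; the language model is then optimized against the resulting reward model.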

Integration is the next big hurdle. For an AI to be useful, it needs to talk to the EHR. This is where HL7 FHIR, a global standard for exchanging healthcare information electronically, comes in. Unfortunately, only 37% of current implementations achieve seamless two-way data exchange. Without it, the AI is just a fancy notepad rather than part of the medical record.
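For a sense of what "talking to the EHR" looks like, here is a sketch that wraps an AI-drafted note as a FHIR R4 DocumentReference resource. Setting docStatus to "preliminary" keeps the note flagged as an unsigned draft until a clinician finalizes it. The patient ID, the choice of LOINC code, and the function name are illustrative assumptions; a real integration would also need authentication, an encounter reference, and the target EHR's specific FHIR profile.

```python
import base64
import json


def draft_note_resource(patient_id: str, note_text: str) -> dict:
    """Wrap an AI-drafted note as a FHIR R4 DocumentReference (illustrative).

    docStatus "preliminary" marks the note as an unsigned draft; a clinician
    review step would later update it to "final".
    """
    return {
        "resourceType": "DocumentReference",
        "status": "current",
        "docStatus": "preliminary",
        "type": {
            "coding": [{
                "system": "http://loinc.org",
                "code": "11506-3",  # ASSUMPTION: "Progress note", chosen for illustration
                "display": "Progress note",
            }]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "content": [{
            "attachment": {
                "contentType": "text/plain",
                # FHIR attachments carry inline data as base64
                "data": base64.b64encode(note_text.encode("utf-8")).decode("ascii"),
            }
        }],
    }


if __name__ == "__main__":
    resource = draft_note_resource("123", "Follow-up visit. BP stable. Continue current plan.")
    print(json.dumps(resource, indent=2))
```

A client would typically send this JSON to the server's DocumentReference endpoint with a FHIR RESTful create (an HTTP POST), subject to whatever write access the EHR vendor exposes.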

The Roadblocks to Total Adoption

If these tools save hours of work, why aren't they everywhere? First, there's the cost. Integrating these systems costs an average of $287,000 per hospital, a massive hit for a small community clinic. Second, there's the regulatory nightmare. The FDA (the U.S. Food and Drug Administration, which regulates medical devices and pharmaceuticals) classifies most of these LLMs as Class II medical devices. Getting 510(k) clearance is a slow process, and very few products have actually cleared the hurdle.

Then there's the learning curve. According to data from Epic Systems, it takes doctors about 2-3 weeks to get used to these tools. The biggest struggle? Prompting. About 68% of new users struggle to phrase requests in a way that gets the desired output. If you ask it to "summarize the visit," you might get something too brief. If you ask for "a detailed clinical note," it might add things that didn't happen.
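One way to land between "too brief" and "invents things" is to spell out the sections you want and explicitly forbid unsupported content. The template below is a hypothetical example, not an Epic or vendor prompt, and the section names are assumptions; no wording fully prevents hallucination, so clinician review still applies.

```python
def build_note_prompt(transcript: str, sections: list[str]) -> str:
    """Assemble a note-drafting prompt that constrains the model to the transcript.

    HYPOTHETICAL wording for illustration; it narrows the model's room to
    invent details but does not guarantee a faithful note.
    """
    section_list = "\n".join(f"- {name}" for name in sections)
    return (
        "You are drafting a clinical note from a visit transcript.\n"
        "Use only information stated in the transcript. If a section has no "
        "supporting information, write 'Not discussed'. Do not infer "
        "diagnoses, medications, doses, or allergies that were not mentioned.\n\n"
        f"Sections:\n{section_list}\n\n"
        f"Transcript:\n{transcript}\n"
    )


if __name__ == "__main__":
    prompt = build_note_prompt(
        "Patient reports a dry cough for three days. No fever.",
        ["Subjective", "Objective", "Assessment", "Plan"],
    )
    print(prompt)
```

Listing the sections controls length, and the "Not discussed" instruction gives the model a sanctioned alternative to filling gaps with plausible-sounding details.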

Finally, there is the issue of sustainability. Only 28% of implementations have shown a positive ROI (return on investment) within 18 months. While the tools save time, the subscription fees and infrastructure costs are steep. Hospitals are still trying to figure out whether "physician happiness" is a metric that justifies the price tag.
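A simple payback calculation shows why the 18-month window is hard to hit. Only the $287,000 integration cost and the 1.8 hours saved per shift come from this article; the shift count, headcount, hourly value of clinician time, and subscription fee are assumptions, and treating freed-up time as dollars is itself a modeling choice.

```python
from typing import Optional


def monthly_time_value(hours_saved_per_shift: float, shifts_per_month: int,
                       physicians: int, value_per_hour: float) -> float:
    """Dollar value of clinician time freed up per month (hypothetical valuation)."""
    return hours_saved_per_shift * shifts_per_month * physicians * value_per_hour


def payback_months(upfront_cost: float, monthly_benefit: float,
                   monthly_cost: float) -> Optional[float]:
    """Months until cumulative net benefit covers the upfront cost.

    Returns None when the monthly benefit never exceeds the running cost.
    """
    net = monthly_benefit - monthly_cost
    if net <= 0:
        return None
    return upfront_cost / net


if __name__ == "__main__":
    # From the article: 1.8 hours saved per shift, $287,000 integration cost.
    # ASSUMED: 16 shifts/month, 10 physicians, $100/hour, $20,000/month in fees.
    benefit = monthly_time_value(1.8, 16, 10, 100.0)
    months = payback_months(287_000, benefit, 20_000)
    print(f"monthly benefit ${benefit:,.0f}, payback in {months:.1f} months")
```

With these assumptions the net benefit is $8,800 a month, so payback takes about 33 months, well past the 18-month mark.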

What's Next for Medical AI?

We are moving toward multimodal systems. This means the AI won't just read your notes; it will look at your X-rays and MRI scans at the same time. LLaVA-Med, a multimodal LLM capable of processing both medical images and text, already achieves about 78.3% accuracy on visual question-answering tasks. By 2026, 65% of new healthcare AI is predicted to be multimodal.

The goal isn't to replace the doctor, but to move toward a hybrid workflow. The AI handles the first draft of the documentation and the initial sorting of the triage queue. The human clinician then focuses on what they do best: complex decision-making and actual patient care. This hybrid approach has already been shown to maintain 99.2% accuracy in emergency departments while slashing the time spent on paperwork.
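The hybrid triage step can be sketched as a priority queue in which the AI proposes a level and a nurse's level, when recorded, takes precedence. Class and method names are hypothetical, and levels again assume 1 = most urgent; a production system would also need re-triage of waiting patients, auditing, and EHR integration.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import Optional


@dataclass(order=True)
class QueueEntry:
    level: int    # 1 = most urgent ... 5 = least urgent (assumed encoding)
    arrival: int  # tie-breaker: earlier arrivals are seen first
    patient_id: str = field(compare=False)
    confirmed_by_nurse: bool = field(compare=False, default=False)


class TriageQueue:
    """AI proposes a triage level; a nurse's level, if given, overrides it."""

    def __init__(self) -> None:
        self._heap: list[QueueEntry] = []
        self._counter = itertools.count()

    def add(self, patient_id: str, ai_level: int, nurse_level: Optional[int] = None) -> None:
        # The human decision always wins over the AI suggestion.
        level = nurse_level if nurse_level is not None else ai_level
        entry = QueueEntry(level, next(self._counter), patient_id, nurse_level is not None)
        heapq.heappush(self._heap, entry)

    def next_patient(self) -> str:
        """Pop the most urgent patient (earliest arrival among equal levels)."""
        return heapq.heappop(self._heap).patient_id


if __name__ == "__main__":
    q = TriageQueue()
    q.add("p1", ai_level=3)
    q.add("p2", ai_level=2, nurse_level=4)  # nurse downgrades an AI overtriage
    q.add("p3", ai_level=1)
    print([q.next_patient() for _ in range(3)])
```

Here the nurse's correction moves p2 behind p1, which is exactly the kind of overtriage check the hybrid workflow relies on.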

Can an LLM replace a triage nurse?

No. While LLMs like GPT-4 show high agreement with professional nurses, they tend to overtriage and struggle with rare conditions. They are designed as "decision support" tools to help nurses, not replace them.

Are healthcare LLMs HIPAA compliant?

It depends on the deployment. Commercial tools like Nuance DAX are built for compliance, but entering patient data into a general-purpose consumer chatbot without a Business Associate Agreement covering it is a major HIPAA violation. Privacy remains a top concern for 78% of healthcare systems.

What is a "hallucination" in a medical context?

A hallucination occurs when the AI confidently generates false information that was never mentioned in the actual clinical encounter, such as an invented allergy or medication dose.

Which LLM is best for medical use?

Specialized models like Med-PaLM 2 or Med-PaLM 3 generally outperform general models on medical benchmarks due to domain-specific training and RLHF, though GPT-4 is highly capable for general documentation tasks.

How do I reduce errors in AI-generated clinical notes?

The most effective method is real-time clinician review. Hospitals that implemented a strict review protocol reduced AI error rates by 63%.

John Fox

April 20, 2026 AT 21:48

man the idea of a doctor actually looking at me instead of a screen for once sounds like a dream

Jim Sonntag

April 22, 2026 AT 15:20

oh great just what we need more tech to make sure the doctor is effectively a glorified editor for a bot that thinks I'm allergic to air because it hallucinated something

totally revolutionary

Anuj Kumar

April 23, 2026 AT 07:29

This is just a way for big companies to take over our health. The bias in the data is not a mistake it is on purpose. They want to control who gets care and who does not using these black box models. Do not trust the 15 percent stat they are lying to make it sound slow

chioma okwara

April 24, 2026 AT 07:44

Actually the article misses the point about the 510k clearance because the FDA is barely keeping up with the pace of LLM iteration and the regulatory frameworks are essentially obsolete already. Also it's not just racial bias but socioeconomic bias that these models absorb from the training sets

Christina Morgan

April 24, 2026 AT 10:39

It's wonderful to see the potential for reducing burnout among clinicians. If we can provide them with more time to focus on the human aspect of medicine, the quality of care will naturally improve for everyone involved.

Samar Omar

April 24, 2026 AT 14:30

The sheer audacity of suggesting that a mere algorithm, regardless of how many billions of tokens it has digested from the sterile corridors of medical literature, could ever replicate the nuanced, intuitive, and profoundly complex art of clinical triage is frankly insulting to the profession. One must consider the ontological implications of outsourcing critical judgment to a probabilistic machine that does not even understand the visceral reality of a patient's suffering, but instead merely predicts the next most likely word in a sequence of clinical data, which is a terrifying prospect if one actually values the sanctity of human life over the efficiency of a corporate balance sheet. The notion that a kappa agreement score is a sufficient metric for the preservation of human health is a testament to the current era's obsession with quantification over qualification, and I find it utterly repellent that we are even debating the merits of a system that could systemically marginalize patients based on flawed datasets while we congratulate ourselves on the 48% reduction in note-writing time as if that is the primary goal of a healing profession. We are sacrificing the soul of medicine on the altar of productivity and calling it progress while the actual practitioners are reduced to mere overseers of an automated system that may, at any moment, hallucinate a lethal dose of a medication into a patient's chart simply because it fit the mathematical pattern of the prompt.

Tasha Hernandez

April 25, 2026 AT 02:19

Imagine paying 287k for a fancy autocomplete that might accidentally kill someone

Truly a masterpiece of modern healthcare efficiency

I can practically feel the corporate greed radiating off this entire implementation strategy

Deepak Sungra

April 25, 2026 AT 05:35

Omg the drama in these comments is just too much! Honestly, I'm just here for the tea. I think the AI sounds super helpful and friendly, even if it's a bit clumsy with the triage stuff. Let's just be happy the doctors get to sleep more!