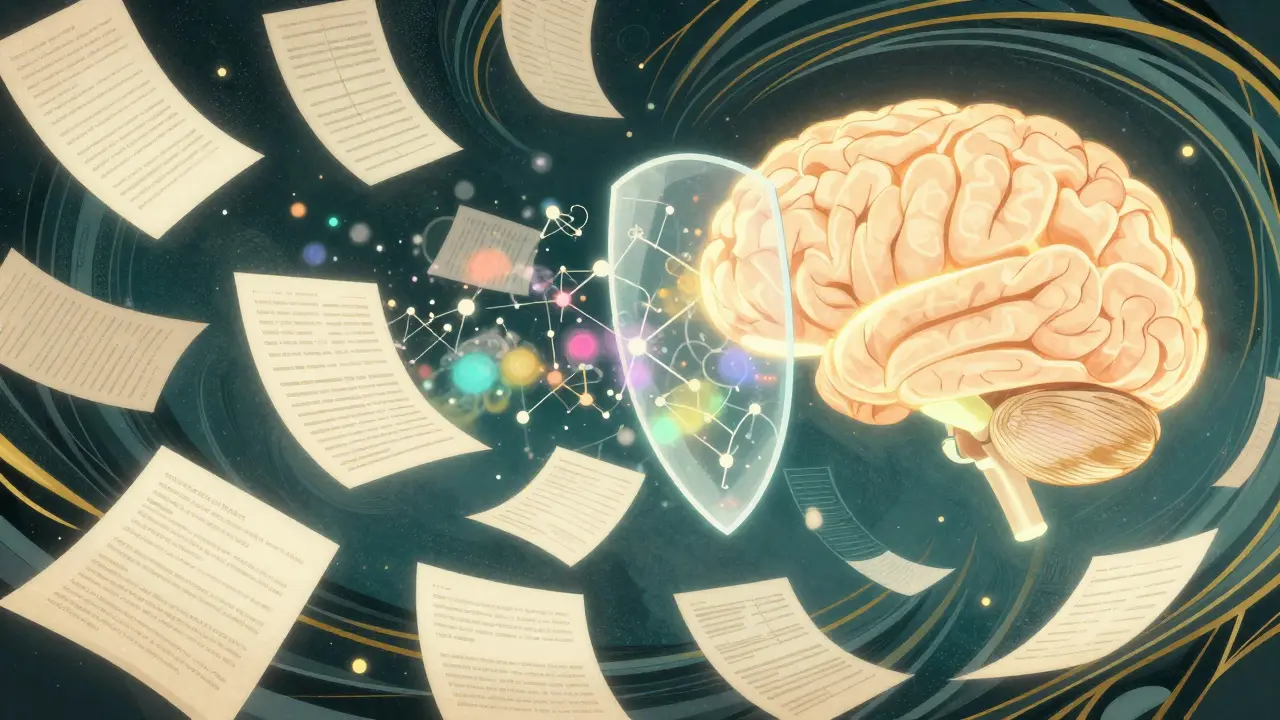

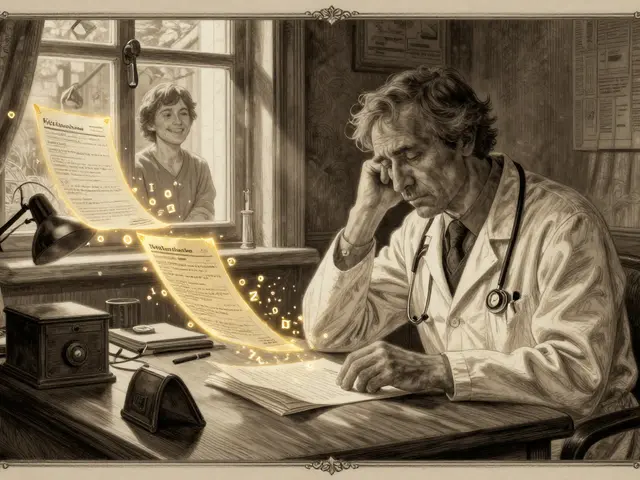

Imagine training a massive AI model on a dataset of medical records. The model becomes brilliant at diagnosing rare diseases, but there is a catch: it accidentally memorizes a specific patient's name and address. If a user asks the right sequence of questions, the model might spit out that private info. This isn't a glitch; it's a fundamental risk of how Large Language Models (LLMs) learn patterns. To stop this, engineers are turning to Differential Privacy (DP), a mathematical shield that lets models learn general trends without memorizing the specifics of any single individual.

What Exactly is Differential Privacy?

Differential Privacy is a mathematical framework that allows researchers to analyze a dataset while ensuring that the presence or absence of any single individual doesn't significantly change the outcome. Think of it as adding a calculated amount of "noise" to the results of an analysis (or, during training, to the model's updates) so that no single person's contribution stands out. If you're looking at a crowd, DP lets you see that "most people are wearing red shirts" without letting you identify exactly which person is wearing a specific shade of crimson.

This isn't just a vague suggestion; it's based on rigorous math established by Cynthia Dwork and her collaborators. Unlike basic anonymization (where you just remove names and hope for the best), DP provides a provable guarantee. That guarantee holds no matter which external databases an attacker can access: they cannot learn meaningfully more about a person in the training set than they could have if that person's data had never been included.
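The crowd analogy maps onto the simplest DP algorithm, the Laplace mechanism, which answers a counting query with calibrated noise. The sketch below is illustrative only: the function names and the shirt data are hypothetical, and it provides pure ε-DP (δ = 0) with Laplace noise, whereas DP-SGD (described below) uses Gaussian noise on gradients.

```python
import random

def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent Exponential(rate = 1/scale) draws
    # is distributed as Laplace(0, scale).
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)

def private_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Epsilon-DP count of records matching `predicate` (Laplace mechanism).

    Adding or removing one person changes the true count by at most 1
    (sensitivity 1), so Laplace noise with scale 1/epsilon is sufficient.
    """
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Hypothetical crowd: 600 red shirts and 400 blue shirts.
crowd = ["red"] * 600 + ["blue"] * 400
rng = random.Random(0)
print(private_count(crowd, lambda shirt: shirt == "red", epsilon=1.0, rng=rng))
```

The answer lands close to 600, so the "mostly red shirts" trend survives, but any single person joining or leaving the crowd shifts the output distribution only slightly.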

The Mathematical Knobs: Epsilon and Delta

When you implement DP in LLM training, you aren't just flipping a switch. You're tuning a differential privacy "budget" using two key parameters: Epsilon (ε) and Delta (δ).

- Epsilon (ε): This is the primary privacy budget. A small epsilon (like ε=1) means very high privacy but adds more noise, which can make the model less accurate. A larger epsilon (like ε=8) allows for better model performance but increases the risk that some private information could leak.

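- Delta (δ): This represents the small probability that the privacy guarantee might fail. Ideally, this number is kept as close to zero as possible; a common rule of thumb is to set it well below 1/N, where N is the number of records in the training set.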
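Both knobs come from the standard definition of DP, stated here as general background rather than anything LLM-specific. A randomized training procedure \(\mathcal{M}\) is (ε, δ)-differentially private if, for every pair of datasets D and D′ that differ in a single individual's data, and for every set S of possible outcomes (here, sets of model weights):

```latex
\Pr\big[\mathcal{M}(D) \in S\big] \;\le\; e^{\varepsilon}\,\Pr\big[\mathcal{M}(D') \in S\big] + \delta
```

Because ε sits in an exponent, the guarantee is multiplicative: ε = 1 lets any outcome become at most e ≈ 2.7 times more likely when your record is included, while ε = 8 allows a factor of roughly 2,981. δ is the additive slack that covers the rare cases where even that bound does not hold.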

For most companies, the sweet spot is a tough call. Regulatory bodies like the European Data Protection Board have suggested that ε ≤ 2 is a strong marker for GDPR compliance, but achieving that without destroying the model's utility is where the real struggle begins.

How It Actually Works: DP-SGD

The heavy lifting in private training is usually done by DP-SGD (Differentially Private Stochastic Gradient Descent). In a normal training loop, the model calculates gradients (the direction it needs to move to improve) and updates its weights. In DP-SGD, two critical steps are added:

- Gradient Clipping: The system limits the influence of any single training example. It computes a separate gradient for each example, and if one specific piece of data is "too loud" and tries to pull the model too far in one direction, its gradient is scaled down to a maximum L2 norm C (typically between 0.1 and 1.0).

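- Noise Addition: The system sums the clipped gradients and adds random Gaussian noise whose standard deviation is proportional to the clipping norm (σ × C, where σ is called the noise multiplier). This masks the contribution of any single individual, ensuring the model learns the "average" pattern rather than the unique outlier.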
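The two steps above can be sketched in plain Python for a toy linear-regression model. This is a minimal sketch, not the Opacus or Google implementation: the model, the squared loss, and the names `per_example_gradients`, `clip`, and `dp_sgd_step` are illustrative assumptions. It takes each batch as given (real DP-SGD samples batches randomly) and omits the privacy accounting that converts the noise multiplier into an (ε, δ) figure.

```python
import math
import random

def per_example_gradients(w, xs, ys):
    """Gradient of the squared error (w·x - y)^2, computed for each example separately."""
    grads = []
    for x, y in zip(xs, ys):
        residual = sum(wi * xi for wi, xi in zip(w, x)) - y
        grads.append([2.0 * residual * xi for xi in x])
    return grads

def clip(grad, clip_norm):
    """Rescale grad so its L2 norm is at most clip_norm (unchanged if already smaller)."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]

def dp_sgd_step(w, xs, ys, lr, clip_norm, noise_multiplier, rng):
    """One DP-SGD update on a batch: clip each example, sum, add noise, average."""
    clipped = [clip(g, clip_norm) for g in per_example_gradients(w, xs, ys)]
    summed = [sum(column) for column in zip(*clipped)]
    # Noise std is proportional to the clipping norm, i.e. one example's maximum influence.
    sigma = noise_multiplier * clip_norm
    noisy = [s + rng.gauss(0.0, sigma) for s in summed]
    return [wi - lr * n / len(xs) for wi, n in zip(w, noisy)]

# Hypothetical batch: the third example is an outlier that would dominate plain SGD.
rng = random.Random(0)
w = [0.0, 0.0]
xs = [[1.0, 0.0], [0.0, 1.0], [50.0, 50.0]]
ys = [1.0, 1.0, 500.0]
for _ in range(100):
    w = dp_sgd_step(w, xs, ys, lr=0.1, clip_norm=1.0, noise_multiplier=1.1, rng=rng)
print(w)
```

Because every clipped gradient has norm at most C, the outlier can move the weights no further per step than any ordinary example, and the noise is sized to hide exactly that much movement.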

While this sounds simple, it's computationally expensive. Because DP-SGD requires calculating gradients for individual samples rather than batches, you lose the efficiency of GPU parallel processing. This often leads to training times that are 30-50% longer than standard training.

| Feature | Standard Training | DP-SGD Training |
|---|---|---|
| Privacy Guarantee | None (risk of memorization) | Mathematically provable (ε, δ) |
| Training Speed | Fast (optimal GPU usage) | Slower (30-50% more training time) |
| Memory Usage | Standard | Higher (20-40% increase) |
| Accuracy | Maximum | Lower (typically a 2-15% drop) |

The Tradeoffs: Privacy vs. Utility

You can't get something for nothing. The "Privacy-Utility Tradeoff" is the central conflict of private ML. If you want a model that is perfectly private, you might end up with a model that is basically useless because the noise has drowned out the actual patterns.

In practical terms, a model trained with ε=3 might see a 5-15% drop in accuracy on standard NLP benchmarks. However, if you push the budget to ε=8, you can often get the accuracy within 2-3% of a non-private model. The real problem arises with "long-tail" data. DP is great at preserving common patterns but terrible at preserving rare facts. If your model needs to know an obscure medical term that only appears twice in a billion records, DP will likely treat that as "noise" and erase it: clipping caps how far those two records can move the model, and their tiny combined signal is swamped by the Gaussian noise added at every step.

Scaling to Billions of Parameters

For a long time, DP was only viable for small models. Trying to run DP-SGD on a 70-billion-parameter model would crash almost any server due to memory constraints. Enter DP-ZeRO. Developed by Bu et al., this framework integrates with DeepSpeed's Zero Redundancy Optimizer (ZeRO) to shard the model across multiple GPUs.

This breakthrough allows for the training of models with billions of parameters without requiring a supercomputer. Google has also experimented with Synthetic Data pipelines, where they use DP to create a "fake" version of a dataset that maintains the statistical properties of the original without containing any real individual's data. This synthetic data is then used to fine-tune LLMs, providing a secondary layer of protection.

Practical Tips for Implementation

If you're an engineer diving into this, be prepared for a steep learning curve. Most developers report spending several weeks just tuning hyperparameters before the model becomes stable. Here are a few rules of thumb based on community experience:

- Start Loose: Begin your initial experiments with a higher epsilon (ε=8 to 10). Once you know the model can actually learn the task, start tightening the budget.

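- Watch Your Norms: Experiment with clipping norms between 0.1 and 1.0. If the norm is too small, almost every gradient gets clipped and the model struggles to learn; if it is too large, the noise (which is scaled to the clipping norm) drowns out the real gradient signal. The privacy guarantee itself is set by the noise multiplier, the batch sampling rate, and the number of training steps, not by the clipping norm.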

- Use the Right Tools: While Opacus is a popular library from Meta, it can struggle with models exceeding 1 billion parameters due to sharding issues. For larger scales, look toward Google's DP libraries or the DP-ZeRO implementation.
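For the clipping-norm tip, one common heuristic (an assumption here, not a rule from the tips above) is to start C near the median L2 norm of unclipped per-example gradients and then check what fraction of examples would actually be clipped. The helper names below are hypothetical. Measure the norms on public or proxy data: tuning C on the private training data itself is not covered by the privacy guarantee.

```python
import math
import statistics

def l2_norm(grad):
    return math.sqrt(sum(g * g for g in grad))

def suggest_clip_norm(per_example_grads):
    """Heuristic starting point: median L2 norm of unclipped per-example gradients."""
    return statistics.median(l2_norm(g) for g in per_example_grads)

def fraction_clipped(per_example_grads, clip_norm):
    """Share of examples whose gradient would be scaled down at this clip_norm."""
    norms = [l2_norm(g) for g in per_example_grads]
    return sum(n > clip_norm for n in norms) / len(norms)

# Hypothetical per-example gradients measured on public or proxy data.
grads = [[3.0, 4.0], [0.0, 1.0], [6.0, 8.0]]
c = suggest_clip_norm(grads)
print(c, fraction_clipped(grads, c))  # prints 5.0 0.3333333333333333
```

If nearly every example is clipped, the norm is probably too tight; if almost none are, the injected noise is larger than it needs to be.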

Does differential privacy completely eliminate all privacy risks?

No. While it provides the strongest mathematical guarantee against re-identification, it's not a silver bullet. Models can still be vulnerable to membership inference attacks, though DP significantly mitigates them compared to non-private training. It protects the individuals in the data, but it doesn't necessarily stop the model from leaking general sensitive patterns learned from the group.
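How much DP limits membership inference can be made concrete with the hypothesis-testing view of (ε, δ)-DP, which caps any attacker's true-positive rate at a given false-positive rate. The function name is hypothetical, and these are worst-case bounds; real attacks typically achieve less.

```python
import math

def max_attack_tpr(fpr: float, epsilon: float, delta: float = 0.0) -> float:
    """Highest true-positive rate any membership-inference attack can reach at a
    given false-positive rate against an (epsilon, delta)-DP training run."""
    return min(
        1.0,
        delta + math.exp(epsilon) * fpr,
        1.0 - math.exp(-epsilon) * (1.0 - delta - fpr),
    )

for eps in (1.0, 3.0, 8.0):
    print(eps, round(max_attack_tpr(fpr=0.01, epsilon=eps), 4))
```

At a 1% false-positive rate, ε = 1 caps the attacker's true-positive rate at about 2.7% and ε = 3 at about 20%, while ε = 8 allows nearly 100%. This is why larger budgets still rely on the gap between the worst-case bound and what practical attacks achieve.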

Why is DP-SGD slower than regular SGD?

Regular SGD computes a single averaged gradient for the whole batch in one backward pass, which is incredibly fast on GPUs. DP-SGD must compute a separate gradient for every single example in that batch so that each one can be clipped individually before averaging. Materializing those per-example gradients forfeits much of the efficiency of batching and adds significant compute and memory overhead.

What is a 'good' epsilon value for a production LLM?

It depends on the industry. For maximum privacy and strict regulatory compliance (like healthcare or government), ε=1 to 3 is the target. However, many enterprise production models operate in the ε=4 to 8 range to maintain a reasonable balance between data protection and the model's ability to follow complex instructions.

Can I use DP for training trillion-parameter models?

Currently, this is a major bottleneck. Researchers at Stanford have warned that current DP methods may be computationally infeasible for trillion-parameter models without new algorithmic breakthroughs. While DP-ZeRO helps, the memory and time overhead still scale poorly at that extreme magnitude.

How does DP differ from data masking or anonymization?

Masking and anonymization (like removing names) are heuristic-based. They are often vulnerable to "linkage attacks," where an attacker combines the anonymized data with another public dataset to re-identify people. Differential Privacy is a mathematical guarantee: it ensures that the output is statistically similar whether or not a specific person's data was included, which makes it resistant to such attacks.

What's Next for Private AI?

The industry is moving toward a world where DP is a standard part of the pipeline, not an afterthought. We can expect tighter composition theorems in the coming years, which is a fancy way of saying we'll find ways to train models longer without "spending" the privacy budget as quickly. We're also seeing the rise of hardware acceleration specifically designed to handle the per-sample gradient calculations that make DP-SGD so slow.
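Composition is what the "spending" metaphor refers to: every training step consumes part of the budget. As a rough sketch, assume each of k steps is ε-DP on its own. Basic composition simply adds the budgets, while the textbook advanced composition theorem (Dwork, Rothblum and Vadhan) grows roughly with √k at the cost of an extra δ′. This ignores the amplification from random batch sampling, and production DP-SGD libraries use tighter accountants still (for example, Rényi DP or the moments accountant), so treat the numbers as illustrative.

```python
import math

def basic_composition(epsilon: float, k: int) -> float:
    """Total epsilon after k runs of an epsilon-DP mechanism: the budgets add up."""
    return k * epsilon

def advanced_composition(epsilon: float, k: int, delta_prime: float) -> float:
    """Total epsilon from the advanced composition theorem.

    k adaptive runs of an (epsilon, delta)-DP mechanism are
    (returned_epsilon, k * delta + delta_prime)-DP.
    """
    return (math.sqrt(2 * k * math.log(1 / delta_prime)) * epsilon
            + k * epsilon * (math.exp(epsilon) - 1))

# 10,000 training steps, each spending epsilon = 0.01.
print(basic_composition(0.01, 10_000))
print(round(advanced_composition(0.01, 10_000, 1e-5), 2))
```

For 10,000 steps at ε = 0.01 each, basic composition reports a total of 100, while advanced composition reports roughly 5.8. Tighter accounting of this kind is what lets models train longer for the same budget.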

If you're building in a regulated space, your next step should be auditing your data pipeline. Determine if your risk profile requires a strict ε ≤ 3 guarantee or if a more relaxed budget suffices. From there, explore DP-ZeRO for scaling or start with smaller models using Opacus to understand how noise affects your specific domain's accuracy.

Jeremy Chick

April 7, 2026 AT 00:10

This whole "privacy budget" thing sounds like a joke. Just tell us if the model is leaking data or not instead of hiding behind Greek letters and math jargon. Either it's secure or it's not, and usually, it's not.

Sagar Malik

April 8, 2026 AT 01:27

The epistemological failure of this discourse is palpable. You're all ignoring the ontic shift in data ownership. This "noise" is just a heuristic veil to hide the fact that these corpora are essentially digital panopticons. The algorithmic opacity of DP-SGD is a mere facade for the systemic erosion of the individual's cognitive sovereignty. It's practically a cybernetic gaslighting technique to make us feel safe while our latent patterns are still being harvested by the hegemony. Purely a reductionist approach to an existential crisis of privacy.

Rahul U.

April 9, 2026 AT 15:53

The explanation of the epsilon value is quite helpful! 🌟 It is interesting to see the tension between regulatory requirements and actual utility. I wonder how this will evolve with newer hardware 🚀

E Jones

April 9, 2026 AT 20:13

Oh please, let's be real for a second here. This "mathematical shield" is probably just a backdoor for the shadow government to decide exactly how much of our souls they want to scrub from the record while keeping the parts that let them predict our every move with terrifying precision. They'll tell you epsilon is 2, but in some dark room in Virginia, it's actually a variable tied to your social credit score, and they're just using these fancy Gaussian noise generators to camouflage the massive psychic vacuum they've built into the very architecture of the internet. It's a digital spiderweb designed to swallow our identities whole while we're all nodding along to some "provable guarantee" written by people who probably don't even exist in the real world!

Seraphina Nero

April 10, 2026 AT 13:21

It sounds really hard to get the balance right.

selma souza

April 12, 2026 AT 04:27

The lack of precision in some of the discussions here is appalling. One must adhere to the rigorous definitions provided in the text if they wish to engage in a meaningful dialogue. The trade-off between utility and privacy is not a matter of opinion but a mathematical certainty.

Megan Ellaby

April 12, 2026 AT 16:15

Tuning those hyperparameters sounds like a nightmare lol. I bet most people just give up and use a higher epsilon just to get the thing to actually work. It's such a struggle when you're just trying to build something useful!

Barbara & Greg

April 12, 2026 AT 23:58

It is a moral imperative that we prioritize the sanctity of individual data over the mere efficiency of a machine. The pursuit of model performance at the cost of human privacy is a manifestation of a decadent society that values utility over dignity. We must ask ourselves if the convenience of a slightly more accurate diagnosis justifies the systemic risk of exposure. True progress is not measured by the size of a parameter count, but by the ethical framework that governs its use. To compromise on the privacy budget is to compromise on the very essence of human autonomy.