Stop relying on "vibes" to decide if your AI prompt is working. We've all been there: you tweak a few words in a prompt, run it once, it looks great, and you ship it. Then, a week later, you realize the model is hallucinating on 20% of your users' requests. The problem is that intuition doesn't scale. When you're moving from a few curious users to thousands, you need a way to prove that one prompt is better than another. Prompt A/B testing is a controlled experimentation methodology that compares two or more prompt variants to identify which version produces superior outputs based on data. If you want your AI features to actually improve over time, you have to stop guessing and start measuring.

The Problem with "Vibe Checking"

In the early days of generative AI, most people did what's known as "vibe checking." You enter a prompt into a chat interface, look at the result, and decide if it "feels" right. This works for a hobbyist, but it's dangerous for a business. Why? Because LLMs are stochastic: they can give a brilliant answer one time and a mediocre one the next using the exact same prompt.

To get real results, you need to move toward a data-driven approach. Research shows that about 55% of users find experimenting with different prompts to be the most effective way to make AI work for their specific use case. The goal is to transform the subjective feeling of quality into an objective metric. You aren't just asking "Is this better?" You're asking "Does Variant B increase my conversion rate by 2% compared to Variant A across 1,000 samples?"

What Exactly Are You Testing?

When people say "A/B test a prompt," they often think it's just about changing the wording. In reality, there are four main levers you can pull. If you only test the words, you're missing most of the performance gains.

- System Instructions: This is the core persona. You might test whether telling the model it is a "world-class senior software engineer" produces better code than telling it to be a "helpful coding assistant."

- Context Window & RAG: If you use Retrieval-Augmented Generation (or RAG), you can test how much data you feed the model. Does providing five search results yield better answers than providing three?

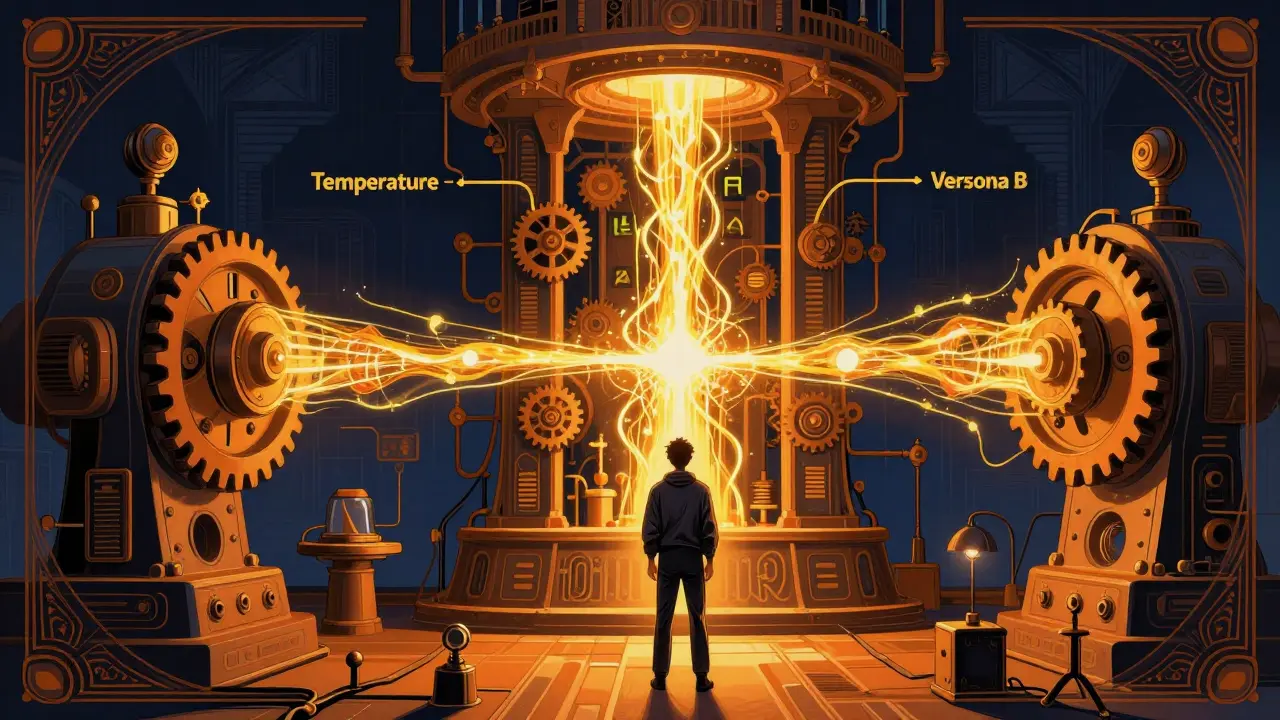

- Model Parameters: You can test Temperature (which controls randomness) or top-p. A lower temperature is usually better for factual extraction, while a higher one helps with creative writing.

- Model Architecture: Sometimes the prompt isn't the problem; the model is. You might test GPT-4o against Claude 3.5 Sonnet to see which one follows your complex instructions more reliably.

| Variable | What it Changes | Best For... |
|---|---|---|
| Persona | Tone and expertise level | Improving brand voice |
| Few-Shot Examples | Pattern recognition | Complex formatting/JSON |
| Temperature | Predictability vs Creativity | Reducing hallucinations |
| Model Version | Reasoning capabilities | Scaling cost vs quality |
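One way to keep these levers separate is to describe each variant as data rather than as a loose string. The sketch below is only an illustration: the `PromptVariant` class, its field names, and the `"model-a"` placeholder are assumptions, not part of any particular framework. The idea is that a variant should differ from the control in exactly one field, so any change in results can be attributed to that lever.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PromptVariant:
    """Hypothetical container for the levers discussed above."""
    name: str
    system_instruction: str
    few_shot_examples: tuple = ()
    temperature: float = 0.2
    model: str = "model-a"  # placeholder, not a real model identifier


# Fields that count as experimental levers (the name is just a label).
LEVERS = ("system_instruction", "few_shot_examples", "temperature", "model")


def changed_fields(a: PromptVariant, b: PromptVariant) -> list:
    """Return the levers that differ between two variants."""
    return [field for field in LEVERS if getattr(a, field) != getattr(b, field)]


control = PromptVariant(
    name="control",
    system_instruction="You are a helpful coding assistant.",
)
persona = replace(
    control,
    name="persona",
    system_instruction="You are a world-class senior software engineer.",
)
warmer = replace(control, name="warmer", temperature=0.9)

print(changed_fields(control, persona))
print(changed_fields(control, warmer))
```

If `changed_fields` ever returns more than one entry, you are testing two ideas at once and won't know which one moved the metric.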

A Framework for Scaling Your Experiments

Scaling experimentation requires a pipeline, not a spreadsheet. A professional workflow usually follows four distinct stages to ensure that improvements are permanent and don't break other things.

First is the Playground stage. This is where you do side-by-side comparisons. You run ten different prompts against the same ten inputs and see the results in a grid. You're looking for a "winner" based on a quick scan of quality, latency, and token cost. If a prompt is 10% better but uses 50% more tokens, it might not be the right choice for a high-traffic app.
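A playground grid can be sketched in a few lines. Everything here is an assumption made for illustration: `call_model` stands in for whatever client you actually use, `fake_model` is a stub so the example runs offline, and the token count is a crude whitespace approximation rather than a real tokenizer.

```python
import time
from typing import Callable


def run_grid(
    variants: dict,
    inputs: list,
    call_model: Callable[[str, str], str],
) -> list:
    """Run every variant against every input and record basic stats."""
    rows = []
    for name, system_prompt in variants.items():
        for text in inputs:
            start = time.perf_counter()
            output = call_model(system_prompt, text)
            latency = time.perf_counter() - start
            # Rough proxy for cost: whitespace-separated words in and out.
            approx_tokens = len(f"{system_prompt} {text} {output}".split())
            rows.append({
                "variant": name,
                "input": text,
                "output": output,
                "latency_s": latency,
                "approx_tokens": approx_tokens,
            })
    return rows


def fake_model(system_prompt: str, user_input: str) -> str:
    """Stub model so the sketch runs without network access."""
    return f"echo: {user_input}"


rows = run_grid(
    {"a": "Be brief.", "b": "Be very thorough and detailed."},
    ["hi", "bye"],
    fake_model,
)
for row in rows:
    print(row["variant"], row["input"], row["approx_tokens"])
```

Laying the rows out as a variants-by-inputs table makes the quality, latency, and cost trade-off visible at a glance.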

Once you find a winner, you move to the Experiment stage. Here, you create an immutable record of that prompt. You aren't just saving it in a doc; you're versioning it. This prevents the "what did I change last Tuesday?" panic when a model update suddenly degrades your output.
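A simple way to approximate an immutable record is to derive the version identifier from the content itself, so any edit produces a new ID. This is a minimal sketch under that assumption; the function name and the choice of fields are hypothetical, and a real platform may version prompts differently.

```python
import hashlib
import json


def prompt_version_id(system_instruction: str, model: str, temperature: float) -> str:
    """Derive a short, stable ID from everything that affects the output."""
    payload = json.dumps(
        {
            "system_instruction": system_instruction,
            "model": model,
            "temperature": temperature,
        },
        sort_keys=True,       # stable key order so the hash is reproducible
        ensure_ascii=False,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


v1 = prompt_version_id("You are a helpful coding assistant.", "model-a", 0.2)
v2 = prompt_version_id("You are a helpful coding assistant!", "model-a", 0.2)
print(v1, v2, v1 != v2)
```

Logging this ID next to every model response lets you trace an output back to the exact prompt that produced it.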

The third stage is CI/CD Integration. This is where the real engineering happens. You treat your winning prompts as quality gates. Before a new prompt goes live, it must pass a test against a "golden dataset" (a set of inputs where you already know the perfect answer). If the new prompt causes a regression in accuracy, the deployment is blocked automatically.
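The quality gate can be sketched as a small check that a CI job runs before deployment. The dataset, the two stub "prompts", and the exact-match scoring below are all illustrative assumptions; real outputs usually need a softer comparison than string equality.

```python
# Tiny hypothetical golden dataset: ticket text -> expected category.
GOLDEN = [
    {"input": "refund please", "expected": "billing"},
    {"input": "app crashes", "expected": "bug"},
    {"input": "love it", "expected": "praise"},
    {"input": "cancel my plan", "expected": "billing"},
]


def accuracy(generate, golden) -> float:
    """Share of golden items where the output exactly matches the expected answer."""
    correct = sum(
        1 for item in golden
        if generate(item["input"]).strip() == item["expected"].strip()
    )
    return correct / len(golden)


def passes_gate(candidate_acc: float, baseline_acc: float, tolerance: float = 0.0) -> bool:
    """Block the deploy if the candidate regresses beyond the tolerance."""
    return candidate_acc >= baseline_acc - tolerance


# Stubs standing in for "model + current prompt" and "model + new prompt".
def baseline_prompt(text: str) -> str:
    return {"refund please": "billing", "app crashes": "bug",
            "love it": "praise", "cancel my plan": "billing"}[text]


def candidate_prompt(text: str) -> str:
    return {"refund please": "billing", "app crashes": "bug",
            "love it": "praise", "cancel my plan": "bug"}[text]


baseline_acc = accuracy(baseline_prompt, GOLDEN)
candidate_acc = accuracy(candidate_prompt, GOLDEN)
print(baseline_acc, candidate_acc, passes_gate(candidate_acc, baseline_acc))
```

In a pipeline, a `False` result would typically translate into a non-zero exit code so the deployment step never runs.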

Finally, you have the Production Rollout. Instead of switching everyone to the new prompt instantly, you use feature flags to send 5% of your traffic to the new version. You monitor real-user feedback, such as "thumbs up/down" buttons, to confirm that the laboratory success translates to real-world value.
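If you don't have a feature-flag service yet, a percentage rollout can be approximated with deterministic hashing. The sketch below assumes a stable `user_id` string and a made-up `salt` value; it is not the API of any specific flagging tool.

```python
import hashlib


def assign_variant(user_id: str, rollout_percent: float, salt: str = "prompt-exp-1") -> str:
    """Deterministically place a user in 'candidate' or 'control'.

    The same user always lands in the same group, and changing the salt
    reshuffles users for the next experiment.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # bucket in the range 0..9999
    return "candidate" if bucket < rollout_percent * 100 else "control"


print(assign_variant("user-42", 5))
share = sum(assign_variant(f"u{i}", 5) == "candidate" for i in range(10_000)) / 10_000
print(f"share of users on the candidate prompt: {share:.3f}")
```

Hashing instead of calling `random` matters here: a user who sees the new prompt on one request should keep seeing it on the next, otherwise their feedback can't be attributed to a single variant.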

Measuring Success: Objective vs. Subjective Metrics

This is the hardest part of prompt design. How do you actually "score" a paragraph of text? You have to balance two types of metrics.

Subjective metrics are based on human judgment. You might bring in a team of copywriters to rate responses on a scale of 1 to 5. This is high-quality but doesn't scale. You can't have a human read 10,000 responses every time you change a comma in your prompt.

Objective metrics are hard numbers. These include things like:

- Factuality: Did the model mention a product we don't sell?

- Formatting: Did it return valid JSON that our app can actually parse?

- Latency: Did the response take 2 seconds or 10 seconds?

- Conversion: Did the user click the "Buy" button after this AI interaction?
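The first two checks in this list are easy to automate. The sketch below makes two assumptions: that "valid JSON" simply means the whole output parses, and that you maintain a list of product names you don't sell (the names here are invented for the example).

```python
import json


def is_valid_json(output: str) -> bool:
    """Formatting check: does the entire output parse as JSON?"""
    try:
        json.loads(output)
        return True
    except json.JSONDecodeError:
        return False


def mentions_blocked_term(output: str, blocked_terms: list) -> bool:
    """Crude factuality check: flag outputs naming products we don't sell."""
    lowered = output.lower()
    return any(term.lower() in lowered for term in blocked_terms)


# Hypothetical product names used only for illustration.
NOT_SOLD = ["TurboWidget", "MegaGadget"]

print(is_valid_json('{"status": "ok"}'))
print(is_valid_json("Sure! Here is the JSON: {}"))
print(mentions_blocked_term("We recommend the turbowidget.", NOT_SOLD))
```

Because each check returns a boolean, you can average it over a batch of outputs and compare the pass rate between variants.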

To bridge the gap, many teams now use the LLM-as-a-Judge pattern. This is where you use a more powerful model (like GPT-4o) to grade the outputs of a smaller, faster model. You give the judge model a strict rubric (for example, "Score this response from 1-5 based on how helpful it is, penalizing any mention of competitors") and let it process thousands of examples in minutes.
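Here is a minimal sketch of the judge pattern. The rubric wording, the `SCORE: <n>` reply format, and the `call_judge` callable are assumptions chosen for the example; `fake_judge` is a stub standing in for a real call to the stronger model.

```python
import re
from typing import Callable, Optional

RUBRIC = (
    "Score the response from 1 to 5 based on how helpful it is. "
    "Penalize any mention of competitors. "
    "End your reply with a line of the form 'SCORE: <n>'."
)


def build_judge_prompt(question: str, response: str) -> str:
    """Combine the rubric with the item being graded."""
    return f"{RUBRIC}\n\nQuestion:\n{question}\n\nResponse:\n{response}"


def parse_score(judge_reply: str) -> Optional[int]:
    """Extract a 1-5 score, or None if the judge didn't follow the format."""
    match = re.search(r"SCORE:\s*([1-5])\b", judge_reply)
    return int(match.group(1)) if match else None


def judge(question: str, response: str, call_judge: Callable[[str], str]) -> Optional[int]:
    return parse_score(call_judge(build_judge_prompt(question, response)))


def fake_judge(prompt: str) -> str:
    """Stub judge so the sketch runs offline."""
    return "The answer is clear and on topic.\nSCORE: 4"


print(judge("How do I reset my password?", "Click 'Forgot password'.", fake_judge))
```

Returning `None` for malformed replies, rather than guessing a score, lets you count how often the judge itself misbehaves.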

Avoiding Common Experimentation Traps

Even with a framework, it's easy to trick yourself into thinking a prompt is better than it is. Watch out for length bias. Humans (and even LLM judges) tend to rate longer answers as "better" or "more thorough," even if the longer answer contains more fluff or incorrect information. To fight this, always include a constraint in your judge's rubric to penalize unnecessary verbosity.

Another danger is data contamination. If you use the same set of prompts and questions to "tune" your AI and then use that same set to "test" it, you're essentially giving the model the answers to the test. Always keep your evaluation dataset separate from your development data.
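A quick sanity check for contamination is to look for evaluation inputs that also appear in your development set. This sketch only catches near-exact duplicates (after lowercasing and collapsing whitespace); paraphrased leaks would need a fuzzier comparison. The function names and sample data are illustrative.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't hide duplicates."""
    return " ".join(text.lower().split())


def find_overlap(dev_inputs: list, eval_inputs: list) -> list:
    """Return evaluation inputs that also appear in the development set."""
    dev_normalized = {normalize(text) for text in dev_inputs}
    return [text for text in eval_inputs if normalize(text) in dev_normalized]


dev_set = ["How do I reset my password?", "Where is my invoice?"]
eval_set = ["how do I  reset my password?", "Can I change my email?"]

leaked = find_overlap(dev_set, eval_set)
print(leaked)
```

Any item this returns should be removed from one of the two sets before you trust the evaluation numbers.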

Lastly, avoid the "one-and-done" mentality. The first iteration of a prompt is almost never the best. Treat prompt optimization like a scientific experiment: form a hypothesis (e.g., "Adding a step-by-step reasoning instruction will reduce errors"), test it, analyze the data, and refine. It's an iterative loop, not a straight line.

How do I know when a result is "statistically significant"?

You shouldn't rely on a handful of examples. For a result to be significant, you need a large enough sample size (often hundreds or thousands of requests) and a clear delta in your primary metric (like a 5% increase in user satisfaction). Use a t-test (for averaged scores such as ratings) or a similar statistical method (such as a two-proportion test for rates like conversions) to check that the improvement isn't just due to random chance.
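For a yes/no metric such as "thumbs up" or "converted", one of those similar methods is a two-proportion z-test, which you can sketch with the standard library alone. Note that this is a z-test, not a t-test, and the sample counts below are made up for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int):
    """Two-sided z-test for a difference between two success rates.

    Assumes both samples are reasonably large and that the pooled rate
    is strictly between 0 and 1 (otherwise the standard error is zero).
    """
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: same 5-10 point gap, very different sample sizes.
z_large, p_large = two_proportion_z_test(700, 1000, 750, 1000)
z_small, p_small = two_proportion_z_test(7, 10, 8, 10)

print(f"large sample: z={z_large:.2f}, p={p_large:.4f}")
print(f"small sample: z={z_small:.2f}, p={p_small:.4f}")
```

With 1,000 requests per variant, the jump from 70% to 75% gives a p-value of roughly 0.01, which is below the usual 0.05 threshold. With only 10 requests per variant, a 70% versus 80% split is nowhere near significant, which is exactly why a handful of examples can't settle the question.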

Can I A/B test prompts across different LLM providers?

Yes, and you should. Because different models (e.g., Claude vs Gemini) have different training biases, a prompt that works perfectly for one might fail for another. The best approach is to create a "model-agnostic" prompt and then create specialized variants for each provider to maximize their unique strengths.

What is the best tool for prompt versioning?

While you can use Git, platforms with LLM evaluation or observability features, like Braintrust or PostHog, are often a better fit because they track the output, latency, and cost alongside the prompt version. These tools allow you to snapshot a prompt and run it against a dataset instantly without writing new code.

How do I stop the model from being too verbose in A/B tests?

Include a "conciseness" constraint in your system prompt (e.g., "Answer in three sentences or fewer"). When evaluating, use a character count or word count metric as a secondary KPI to ensure the model isn't just winning the "vibe check" by writing a novel.
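Tracking verbosity as a secondary KPI takes very little code. In this sketch the variant names and outputs are invented, and word count is measured by splitting on whitespace.

```python
from statistics import mean


def word_count(text: str) -> int:
    return len(text.split())


def verbosity_report(outputs_by_variant: dict) -> dict:
    """Average word count per variant, to report next to the quality score."""
    return {
        name: mean(word_count(output) for output in outputs)
        for name, outputs in outputs_by_variant.items()
    }


report = verbosity_report({
    "control": ["Reset it in Settings.", "Yes, refunds take five days."],
    "candidate": [
        "Great question! There are several ways you could go about resetting it.",
    ],
})
print(report)
```

If the candidate wins on judge score but its average length doubles, treat the win with suspicion until you've checked for length bias.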

What is a "golden dataset" in prompt testing?

A golden dataset is a curated collection of inputs and their "perfect" corresponding outputs, verified by human experts. It serves as the ground truth. Every time you change a prompt, you run the dataset through the AI and measure how close the new outputs are to the golden answers.
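"How close" can be measured in many ways. As a rough starting point, the sketch below scores word-level overlap with `difflib.SequenceMatcher` from the standard library; this is an assumption made for simplicity, and teams often replace it with embedding similarity or an LLM judge.

```python
from difflib import SequenceMatcher


def similarity(output: str, golden: str) -> float:
    """Word-level similarity between 0.0 (nothing shared) and 1.0 (identical)."""
    return SequenceMatcher(None, output.lower().split(), golden.lower().split()).ratio()


def mean_similarity(pairs: list) -> float:
    """Average similarity over (output, golden_answer) pairs."""
    return sum(similarity(output, golden) for output, golden in pairs) / len(pairs)


print(similarity("Refunds take 5 days", "refunds take 5 days"))
print(similarity("a b c d", "a b x d"))
print(similarity("hello there", "goodbye now"))
```

A surface-level score like this rewards matching wording rather than matching meaning, so use it to catch large regressions, not to rank two good answers.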

Next Steps for Your AI Workflow

If you're just starting, don't try to build a full CI/CD pipeline on day one. Start by picking one high-impact feature and creating three prompt variants: a control (what you have now), a persona-based variant, and a few-shot variant (adding examples). Run these against 50 real user queries and use an LLM-as-a-Judge to score them. Once you see the lift in quality, you'll have the justification to invest in more robust tooling and a formal experimentation framework.