You built a brilliant Large Language Model. You trained it on your proprietary data. But who else sees that data? If you’ve handed access to vendors, whether for fine-tuning, inference hosting, or customer support integration, you’ve just opened the door to a new kind of risk. This isn’t just about traditional data breaches anymore. It’s about model poisoning, prompt injection attacks, and intellectual property theft hidden inside API calls.

In 2026, the landscape has shifted dramatically. Regulators like the EU with its AI Act and the US with sector-specific guidelines are demanding proof that your third parties aren’t leaking your core assets. General Third-Party Risk Management (TPRM) tools are no longer enough. You need strategies specifically designed for the unique threats posed by Generative AI workflows.

Why Traditional TPRM Fails for LLMs

Most companies treat LLM vendors like standard software providers. They run a generic security questionnaire, check for SOC 2 Type II certification, and sign a contract. That approach works for email servers. It fails for AI models.

The problem is complexity. When a vendor hosts your LLM, they don’t just store static files. They process dynamic inputs. Your sensitive customer data might be fed into the context window during an inference request. If the vendor’s system is compromised, attackers can use prompt injection (a technique where malicious inputs trick an AI model into revealing restricted information or performing unauthorized actions) to extract your training data. Worse, if the vendor allows other clients to share resources without proper isolation, your model could be inadvertently influenced by their data, a phenomenon known as cross-contamination.

Traditional audits look at perimeter defenses. They rarely inspect the internal logic of how data flows through neural networks. You need to assess not just if the vendor is secure, but if their architecture prevents data leakage at the token level.

Key Risks in Vendor-Managed LLM Environments

To manage risk effectively, you first have to name it. Here are the specific threats that emerge when third parties handle your LLM data:

- Data Exfiltration via Inference: Attackers craft queries designed to force the model to repeat parts of its training set. If the vendor doesn’t monitor output patterns, this goes unnoticed.

- Model Poisoning: Malicious actors inject biased or harmful data into the training pipeline. If your vendor manages the fine-tuning process, they become the attack vector.

- Supply Chain Compromise: Many LLMs rely on open-source libraries. If a vendor uses outdated or unverified dependencies, your entire stack becomes vulnerable.

- Intellectual Property Theft: Vendors may retain copies of your prompts or outputs for their own analytics, potentially training competing models on your proprietary insights.

These risks require more than a checklist. They demand continuous monitoring and technical validation.
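One way to make the "monitor output patterns" idea concrete is to check model responses for verbatim overlap with documents you consider protected. The sketch below is a minimal illustration, assuming whitespace tokenization and a small in-memory set of protected documents; the function names `ngrams` and `leakage_ratio` and the 5-word window are hypothetical choices, not something a particular vendor provides.

```python
def ngrams(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """Return the set of lowercase word n-grams in text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def leakage_ratio(output: str, protected_docs: list[str], n: int = 5) -> float:
    """Fraction of the output's n-grams that appear verbatim in protected docs.

    Returns 0.0 when the output is too short to contain any n-gram.
    """
    output_grams = ngrams(output, n)
    if not output_grams:
        return 0.0
    protected_grams: set[tuple[str, ...]] = set()
    for doc in protected_docs:
        protected_grams |= ngrams(doc, n)
    return len(output_grams & protected_grams) / len(output_grams)


if __name__ == "__main__":
    # Hypothetical protected document and model responses.
    protected = ["the quarterly revenue forecast for project falcon is twelve million dollars"]
    print(leakage_ratio("the quarterly revenue forecast for project falcon is twelve million dollars", protected))
    print(leakage_ratio("hello there how are you doing today friend", protected))
```

A high ratio on a response is a signal worth logging and reviewing, not proof of exfiltration. Paraphrased leaks will not be caught by exact n-gram matching, so this kind of check complements, rather than replaces, vendor-side monitoring.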

Essential Contractual Safeguards

Your legal agreements are your first line of defense. Standard NDAs and SLAs are insufficient. You need clauses that address the nuances of AI processing.

First, define "Data Ownership" explicitly. State that all inputs, outputs, and derived weights belong to you. Prohibit the vendor from using your data to improve their base models unless you provide explicit written consent. Second, include "Audit Rights" that allow you to inspect their infrastructure logs. You should have the right to verify that your data is isolated from other clients’ workloads.

Third, mandate "Incident Response Protocols" specific to AI. If a prompt injection attempt is detected, the vendor must notify you within hours, not days. Include penalties for failure to detect known vulnerabilities in their LLM orchestration layer. These terms shift the burden of proof onto the vendor, ensuring they maintain rigorous security standards.

Technical Controls for Vendor Assessment

Contracts alone won’t stop a breach. You need technical controls that verify the vendor’s claims. Modern TPRM platforms now offer AI-specific assessments, but you must know what to ask for.

Look for vendors who implement Zero Trust Architecture, a security framework that requires strict identity verification for every person and device trying to access resources on a private network. This means no implicit trust between services. Every API call must be authenticated and authorized. Ask for evidence of encryption for data both in transit and at rest. More importantly, ask about encryption keys. Who holds them? If the vendor holds the keys, they can technically read your data. Ideally, you should manage your own keys through a Bring Your Own Key (BYOK) solution.
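To illustrate what "every API call must be authenticated" can look like in practice, here is a minimal sketch of HMAC request signing using only the Python standard library. It assumes a shared secret per client and a five-minute replay window; the function names, the message layout, and the `MAX_SKEW_SECONDS` value are illustrative assumptions, and a real vendor will define its own scheme.

```python
import hashlib
import hmac
import time
from typing import Optional

MAX_SKEW_SECONDS = 300  # assumed replay window; pick a value that fits your setup


def sign_request(secret: bytes, method: str, path: str, body: bytes, timestamp: int) -> str:
    """Compute a hex HMAC-SHA256 signature over method, path, timestamp, and body hash."""
    message = b"\n".join([
        method.upper().encode(),
        path.encode(),
        str(timestamp).encode(),
        hashlib.sha256(body).hexdigest().encode(),
    ])
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_request(secret: bytes, method: str, path: str, body: bytes,
                   timestamp: int, signature: str, now: Optional[int] = None) -> bool:
    """Reject stale or tampered requests; compare signatures in constant time."""
    current = int(time.time()) if now is None else now
    if abs(current - timestamp) > MAX_SKEW_SECONDS:
        return False
    expected = sign_request(secret, method, path, body, timestamp)
    return hmac.compare_digest(expected, signature)
```

Signing proves that the caller holds the secret and that the request was not altered. It does not decide what that caller is allowed to do, so authorization still needs a separate policy check.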

Another critical control is input/output filtering. Reputable vendors deploy guardrails that scan prompts for malicious intent and responses for sensitive data leaks. Request documentation on these filters. Do they block personally identifiable information (PII)? Do they detect attempts to extract code snippets? If the vendor cannot demonstrate active filtering, the risk is too high.
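When you ask a vendor how their filters work, it helps to have a baseline in mind. The following is a deliberately simple sketch of pattern-based PII redaction, assuming only two patterns (email addresses and US Social Security numbers). The `PII_PATTERNS` table and the `redact` function are hypothetical names; production guardrails typically combine patterns like these with semantic detection.

```python
import re

# Illustrative patterns only; real deployments need far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matched PII with a [LABEL] placeholder and count hits per label."""
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        text, hits = pattern.subn(f"[{label}]", text)
        if hits:
            counts[label] = hits
    return text, counts


if __name__ == "__main__":
    cleaned, found = redact("Contact jane.doe@example.com, SSN 123-45-6789.")
    print(cleaned)
    print(found)
```

The same function can be applied to prompts before they leave your network and to responses before they reach users, and the per-label counts give you a metric to compare against what the vendor reports.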

Evaluating Vendor Security Postures

Not all vendors are created equal. Some specialize in enterprise-grade AI security, while others prioritize speed over safety. Use this comparison to guide your evaluation:

| Capability | Basic Vendor | Advanced Vendor |
|---|---|---|
| Data Isolation | Multi-tenant shared infrastructure | Dedicated instances or virtual private clouds |
| Encryption | Standard TLS 1.3 | TLS 1.3 + BYOK + Homomorphic Encryption options |
| Auditing | Annual SOC 2 report | Real-time logging + Customer-accessible audit trails |
| AI Guardrails | None or basic keyword filtering | Advanced semantic analysis + PII redaction |
| Compliance | GDPR general compliance | AI Act ready + NIST AI RMF alignment |

If a vendor falls into the "Basic" category for any capability that is critical for your industry, proceed with caution. For healthcare or finance, only "Advanced" capabilities should meet your threshold.
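The comparison can be turned into a repeatable check. The sketch below assumes you record each vendor's rating per capability as "basic" or "advanced" and keep a set of capabilities that are critical for your industry. The `critical_gaps` function and the capability keys are hypothetical, and treating a missing rating as "basic" is a conservative assumption.

```python
def critical_gaps(vendor_levels: dict[str, str], critical: set[str]) -> list[str]:
    """Return critical capabilities where the vendor is not rated 'advanced'.

    A capability the vendor has no rating for is treated as 'basic'.
    """
    return sorted(
        capability
        for capability in critical
        if vendor_levels.get(capability, "basic") != "advanced"
    )


if __name__ == "__main__":
    # Hypothetical assessment of one vendor.
    vendor = {
        "data_isolation": "advanced",
        "encryption": "basic",
        "auditing": "advanced",
    }
    critical_for_us = {"data_isolation", "encryption", "ai_guardrails"}
    print(critical_gaps(vendor, critical_for_us))
```

An empty list means the vendor meets the threshold on every capability you flagged. Anything else is a concrete list of items to raise during negotiation.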

Continuous Monitoring Strategies

Risk doesn’t end at onboarding. The threat landscape evolves daily. New vulnerabilities in popular AI frameworks like PyTorch or TensorFlow emerge regularly. Your vendor must patch these quickly.

Implement continuous monitoring tools that integrate with your vendor’s APIs. Look for platforms that offer automated risk scoring based on public threat intelligence. If a vendor’s IP address appears in a dark web forum or a known botnet, your system should alert you immediately. Additionally, monitor for unusual usage patterns. Sudden spikes in API calls or large data exports can indicate insider threats or compromised credentials.
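As a rough illustration of usage-pattern monitoring, the sketch below flags a spike when the latest API call count sits well above a recent baseline, measured as a z-score. The `is_spike` name, the default threshold of 3.0, and the sample numbers are assumptions for the example. Real traffic usually needs seasonality-aware baselines.

```python
from statistics import mean, stdev


def is_spike(history: list[int], latest: int, threshold: float = 3.0) -> bool:
    """Return True if latest exceeds the baseline mean by more than
    `threshold` sample standard deviations."""
    if len(history) < 2:
        return False  # not enough data to estimate a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Perfectly flat baseline: any increase counts as a spike.
        return latest > baseline
    return (latest - baseline) / spread > threshold


if __name__ == "__main__":
    # Hypothetical hourly API call counts for one vendor integration.
    hourly_calls = [100, 110, 95, 105, 102, 98, 101]
    print(is_spike(hourly_calls, 400))
    print(is_spike(hourly_calls, 108))
```

A flagged spike is a prompt to investigate, since legitimate batch jobs can look the same as credential abuse in raw call counts.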

Regularly reassess your vendors. Don’t wait for annual reviews. Trigger reassessments whenever there’s a significant change in their service offering or ownership structure, or after any security incident. This proactive approach keeps you ahead of potential breaches.

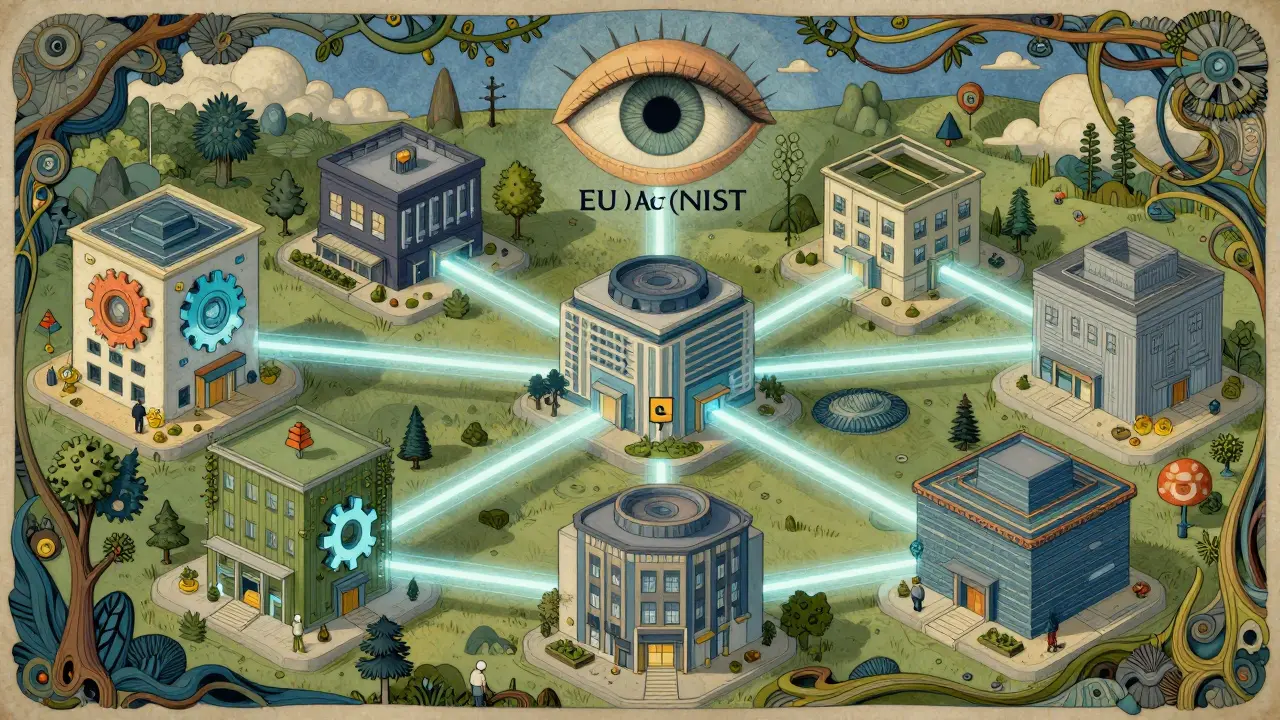

Navigating Regulatory Requirements

Regulations are catching up to technology. The EU AI Act, comprehensive legislation that regulates artificial intelligence systems in the European Union using a risk-based approach, requires high-risk AI systems to undergo conformity assessments. If your vendor handles data for EU citizens, they must comply. Similarly, the NIST AI Risk Management Framework, a voluntary framework that provides guidance for managing risks associated with AI systems, offers best practices for transparency and accountability.

Ensure your vendor maps their controls to these frameworks. Ask for evidence of compliance. Do they document data lineage? Can they prove that your data wasn’t used to train their base models? Regulatory bodies will scrutinize your supply chain. If your vendor fails, you fail. Make sure their compliance posture matches yours.

Building a Resilient Vendor Ecosystem

Finally, diversify your vendor base. Relying on a single provider for LLM infrastructure creates a single point of failure. Partner with multiple vendors who offer different security strengths. This distribution reduces exposure to any one vendor’s weaknesses. Establish clear exit strategies. Ensure you can migrate your models and data without lock-in. This flexibility empowers you to switch providers if security standards slip.

What is the biggest risk when outsourcing LLM operations?

The biggest risk is data exfiltration through prompt injection or model inversion attacks. Unlike traditional databases, LLMs can unintentionally reveal training data if not properly secured. Vendors must implement robust input/output filtering to prevent this.

How do I verify a vendor's AI security claims?

Request detailed technical documentation on their isolation mechanisms, encryption standards, and guardrail implementations. Look for third-party audits specifically focused on AI workloads, not just general IT security. Real-time access to audit logs is also a strong indicator of transparency.

Are standard NDAs enough for LLM vendors?

No. Standard NDAs don't address technical realities like model weight retention or inference data usage. You need specific clauses prohibiting the use of your data for model improvement and defining clear ownership of all generated outputs.

What does 'data isolation' mean in the context of LLMs?

Data isolation ensures that your inputs and outputs are processed separately from other clients' data. This prevents cross-contamination where one client's data influences another's model responses. Dedicated instances or virtual private clouds provide the highest level of isolation.

How often should I reassess my LLM vendors?

At least annually, but ideally continuously using automated monitoring tools. Reassess immediately after any major security incident, regulatory change, or significant update to the vendor's service architecture. Proactive monitoring catches issues before they become breaches.