Imagine asking an AI about your company's specific refund policy, only for it to confidently invent a 90-day window that doesn't actually exist. This is the "hallucination" problem, and it's the biggest wall standing between a cool demo and a tool that businesses can actually trust. To fix this, we use a technique called grounding: the process of connecting large language model outputs to verifiable, real-world data sources to ensure accuracy and contextual relevance. By anchoring the AI in "truth," you stop it from guessing and start getting answers based on your own documents.

Key Takeaways for Grounding AI

- RAG is the standard: Retrieval-Augmented Generation is the primary way to implement grounding today.

- Slashes Hallucinations: Grounding can drop hallucination rates from 27% in standard models to as low as 3% in advanced implementations.

- Data Quality Matters: AI is only as good as the documentation it retrieves; messy data leads to poor grounding.

- Better than Fine-Tuning: RAG allows for real-time data updates without the massive cost of retraining models.

What Exactly is Retrieval-Augmented Generation (RAG)?

If you want an AI to know things it wasn't trained on, like your private emails, latest product specs, or yesterday's sales figures, you can't just hope it "remembers" them. You need Retrieval-Augmented Generation, or RAG, a framework that retrieves relevant documents from an external knowledge base and feeds them to the AI as context before it generates a response.

Think of it like an open-book exam. A standard LLM is like a student trying to answer from memory; they might get the gist right, but they'll likely mess up the specific dates or numbers. A grounded AI is a student with a textbook open to the exact page needed. It doesn't have to memorize everything; it just needs to know how to find the right information and summarize it accurately.

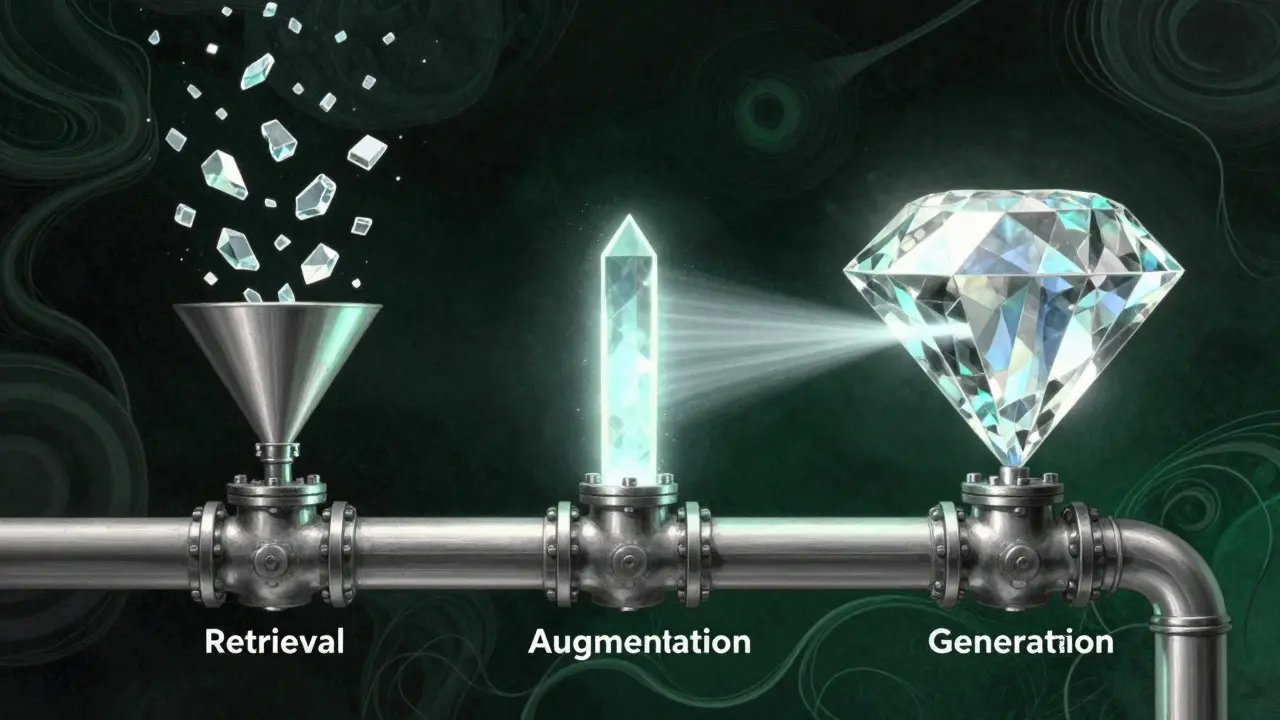

How Grounding Works: The Three-Stage Architecture

Grounding isn't just one action; it's a pipeline. Most enterprise systems follow a specific three-step flow to ensure the AI doesn't drift off-topic.

- Retrieval: The system takes the user's question and searches a knowledge base. It typically uses vector similarity search, which converts text into mathematical coordinates (vectors). If the question and a piece of data are mathematically "close" (often using a cosine similarity threshold between 0.7 and 0.85), the system grabs that data.

- Augmentation: The retrieved text is stuffed into the prompt. Instead of just asking "What is our policy?", the prompt becomes "Based on the following excerpt from the Employee Handbook: [Insert Text], what is our policy?" Most systems limit this added context to about 3,072 tokens to avoid overwhelming the model.

- Generation: The AI reads the provided context and writes the answer. Because the prompt explicitly tells the AI to use the provided text, the model is constrained, significantly reducing the chance it will make things up.
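The three stages above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the sample passages are made up, `embed` is a toy word-count stand-in for a real embedding model, and `retrieve` and `build_grounded_prompt` are hypothetical helper names. Because word counts produce much lower similarity scores than real embeddings, the sketch uses a threshold of 0.2 rather than the 0.7 to 0.85 range mentioned above, and it budgets context by words as a rough proxy for tokens.

```python
import math
import re
from collections import Counter

# Made-up sample passages; a real system would query a vector database.
KNOWLEDGE_BASE = [
    "Employee Handbook, refund policy: purchases can be returned within 30 days with a receipt.",
    "Employee Handbook, remote work: working from home requires manager approval.",
    "Cafeteria menu: apple pie is served on Fridays.",
]


def embed(text: str) -> Counter:
    """Toy embedding (word counts) standing in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, docs: list[str], threshold: float = 0.2, top_k: int = 2) -> list[str]:
    """Stage 1: keep the top_k passages whose similarity clears the threshold."""
    query_vec = embed(question)
    scored = sorted(((cosine(query_vec, embed(d)), d) for d in docs), reverse=True)
    return [doc for score, doc in scored[:top_k] if score >= threshold]


def build_grounded_prompt(question: str, passages: list[str], max_words: int = 200) -> str:
    """Stage 2: place retrieved text in the prompt, within a crude word budget."""
    kept, used = [], 0
    for passage in passages:
        size = len(passage.split())
        if used + size > max_words:
            break
        kept.append(passage)
        used += size
    excerpt = "\n".join(kept)
    return (
        "Answer using only the excerpt below. "
        'If the excerpt does not contain the answer, say "I don\'t know".\n\n'
        f"Excerpt:\n{excerpt}\n\nQuestion: {question}"
    )


question = "What is our refund policy?"
passages = retrieve(question, KNOWLEDGE_BASE)
prompt = build_grounded_prompt(question, passages)
print(prompt)  # Stage 3 would send this prompt to the LLM of your choice.
```

The generation call itself is left out on purpose, since it depends entirely on which model and client library you use. The part worth noticing is that the prompt carries both the evidence and an explicit instruction to stay within it.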

RAG vs. Fine-Tuning: Which Path to Take?

When people realize their AI is lying, they often ask if they should just "train the model" on their data. This is called fine-tuning. While fine-tuning is great for teaching an AI a specific style or a niche jargon, it's usually the wrong choice for factual grounding.

| Feature | RAG (Grounding) | Fine-Tuning (LoRA/Full) |
|---|---|---|
| Update Speed | Instant (just update the doc) | Slow (requires retraining) |
| Cost | Lower (database costs) | Higher ($15k-$50k per model) |
| Transparency | High (can cite sources) | Low (black box) |
| Hallucination Risk | Low (anchored in text) | Moderate (still guesses) |

Real-World Impact on Enterprise Trust

The numbers back up why grounding is becoming a requirement for any business AI. A 2023 Forrester report found that grounding leads to a 63% reduction in inaccurate responses. For a customer service bot, that's the difference between a happy customer and a PR nightmare.

Take Salesforce and their Einstein GPT implementation. Before grounding, an AI might write a generic sales email. After grounding it in CRM data, the AI can reference a specific detail, like "I saw you bought 1,200 pairs of sneakers last quarter," making the outreach feel human and informed rather than robotic.

However, it's not a magic wand. The success of grounding depends heavily on the quality of your data. Users on platforms like Reddit have noted that RAG works great with clean, structured PDFs but struggles with "messy" internal chat logs. If your data is a disaster, your grounded AI will simply be a very efficient way to deliver incorrect information.

Common Pitfalls and How to Avoid Them

Even with the best tools, grounding can trip up. If you're implementing this, keep an eye out for these three common issues:

- The Context Window Limit: AI models can only "read" so much at once. If you retrieve too much data, the end of the text might get cut off. To fix this, experts use hierarchical chunking: breaking documents into smaller, logically linked pieces.

- The Homonym Problem: Vector search can be fooled. If you search for "Apple" (the company) but your database has a lot of text about fruit, the AI might retrieve a recipe for apple pie. To stop this, use hybrid search, which combines traditional keyword matching with vector similarity.

- Security Leakage: Once you connect your AI to your private data, that data becomes a target. Some enterprises have reported data leakage during RAG setup. The fix is field-level encryption and strict access controls so the AI only "sees" what the specific user is allowed to see.
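The sketch below shows how these three mitigations might look in code. Everything in it is illustrative: the `Chunk` type, the role names, the sample text, and the hard-coded `vector_score` values (which stand in for similarities an embedding model would compute) are all assumptions. It covers only the access-control half of the security fix; field-level encryption is out of scope here.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    section: str               # parent heading, so a small chunk keeps its context
    text: str
    allowed_roles: frozenset   # roles permitted to retrieve this chunk
    vector_score: float = 0.0  # made-up similarity to the query, for illustration


def chunk_sections(title: str, sections: dict, allowed_roles: frozenset) -> list:
    """Hierarchical chunking: one chunk per paragraph, tagged with its heading path."""
    chunks = []
    for heading, body in sections.items():
        for paragraph in body.split("\n\n"):
            if paragraph.strip():
                chunks.append(Chunk(f"{title} > {heading}", paragraph.strip(), allowed_roles))
    return chunks


def keyword_score(query: str, text: str) -> float:
    """Fraction of query terms that appear verbatim in the text."""
    query_terms = set(re.findall(r"[a-z0-9]+", query.lower()))
    text_terms = set(re.findall(r"[a-z0-9]+", text.lower()))
    return len(query_terms & text_terms) / len(query_terms) if query_terms else 0.0


def hybrid_search(query: str, chunks: list, user_roles: set, alpha: float = 0.5) -> list:
    """Drop chunks the user may not see, then rank by a vector/keyword blend."""
    visible = [c for c in chunks if c.allowed_roles & user_roles]
    return sorted(
        visible,
        key=lambda c: alpha * c.vector_score + (1 - alpha) * keyword_score(query, c.text),
        reverse=True,
    )


EVERYONE = frozenset({"employee", "finance"})
CHUNKS = [
    Chunk("Recipes", "Apple pie recipe: slice apples, add cinnamon, bake.", EVERYONE, 0.80),
    Chunk("News", "Apple reported quarterly earnings above guidance.", EVERYONE, 0.78),
    Chunk("Contracts", "Confidential: Apple contract pricing for next quarter.", frozenset({"finance"}), 0.70),
]

results = hybrid_search("Apple quarterly earnings", CHUNKS, {"employee"})
print([c.section for c in results])  # the confidential chunk is never considered
```

Filtering by role before ranking, rather than after, means restricted text never reaches the scoring step or the prompt. The `alpha` weight is a tuning knob: here the recipe has the higher made-up vector score, but the keyword component lets the earnings chunk outrank it.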

Does grounding completely stop AI hallucinations?

Not completely, but it drastically reduces them. While a standalone model like PaLM 2 Chat might hallucinate 27% of the time, a grounded system can bring that down to around 3%. The AI can still occasionally misinterpret the retrieved text, but it's far less likely to invent facts out of thin air.

How long does it take to set up a grounded AI system?

For large enterprises, it typically takes 3 to 6 months. The bulk of this time isn't spent on the AI itself, but on "data cleaning": structuring old PDFs, spreadsheets, and documents so the retrieval system can find them easily.

What are the best databases for RAG?

You need a vector store to handle the embeddings. Popular choices include the vector databases Pinecone and Weaviate, as well as FAISS, a similarity-search library that is often used in place of a full database. These tools allow the system to perform the fast mathematical comparisons needed to find relevant context in milliseconds.

Can I use grounding for highly technical fields like medicine?

It's possible, but risky. Some studies, including one from JAMA Internal Medicine, showed a 31% failure rate in medical diagnosis support systems. Grounding is great for facts, but complex reasoning in high-stakes technical fields still requires human oversight.

Is RAG better than just increasing the context window?

Yes. Even if a model can handle 100k tokens, feeding it everything is expensive and slow. RAG is more efficient because it only sends the most relevant 1% of your data to the model, keeping latency low and costs manageable.
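A quick back-of-the-envelope comparison makes the difference concrete. The sketch below reuses two figures from this article, the 100k-token window and the roughly 3,072-token context budget from the augmentation step, and assumes that input cost scales linearly with the number of tokens sent. It ignores the question and the generated answer, so treat the result as a rough ratio rather than a billing estimate.

```python
FULL_CONTEXT_TOKENS = 100_000  # the 100k-token window from the text
RAG_CONTEXT_TOKENS = 3_072     # the retrieved-context budget cited in the augmentation step


def input_size_ratio(full_tokens: int, rag_tokens: int) -> float:
    """How many times more context tokens the full-window approach sends per request."""
    return full_tokens / rag_tokens


ratio = input_size_ratio(FULL_CONTEXT_TOKENS, RAG_CONTEXT_TOKENS)
print(f"Full-context prompt is about {ratio:.1f}x larger")  # about 32.6x
```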

Next Steps for Implementation

If you're ready to stop the hallucinations, start with a small pilot. Don't try to ground your entire company wiki at once. Pick one high-value, low-risk area, such as an internal HR FAQ, and focus on cleaning that specific data set first.

Once your data is clean, choose your stack: a vector database for storage, an embedding model to create the vectors, and a strong LLM for the final generation. Test your system with "adversarial" questions (questions you know are NOT in your data) to ensure the AI is comfortable saying "I don't know" rather than guessing.
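One way to run that adversarial check is to measure how often the system abstains on questions you know it cannot answer. The sketch below is a simplified stand-in for a real pipeline: the HR FAQ entries are made up, matching uses plain word overlap instead of embeddings, and `answer` and `abstention_rate` are hypothetical helper names. In a real test you would call your actual RAG system and inspect its responses.

```python
import re

# Made-up HR FAQ entries standing in for a cleaned pilot data set.
DOCS = [
    "HR FAQ: Employees accrue vacation days monthly.",
    "HR FAQ: Payroll runs on the last business day of the month.",
]
STOPWORDS = {"a", "the", "is", "of", "on", "what", "when", "how", "does", "do", "s"}
ABSTAIN = "I don't know"


def terms(text: str) -> set:
    """Lowercased content words, with common filler words removed."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def answer(question: str, threshold: float = 0.4) -> str:
    """Answer from the best-matching entry, or abstain if nothing matches well enough."""
    query = terms(question)
    if not query:
        return ABSTAIN
    best_score, best_doc = max((len(query & terms(d)) / len(query), d) for d in DOCS)
    return f"According to our records: {best_doc}" if best_score >= threshold else ABSTAIN


def abstention_rate(questions: list) -> float:
    """Share of questions the system declines to answer."""
    return sum(answer(q) == ABSTAIN for q in questions) / len(questions)


# Questions deliberately absent from the data set.
ADVERSARIAL = ["What is the CEO's home address?", "How many moons does Jupiter have?"]
print(abstention_rate(ADVERSARIAL))
```

On an adversarial set you want this rate as close to 1.0 as possible, while still getting real answers for in-scope questions such as "When does payroll run?". Tracking both numbers guards against a system that is safe only because it refuses everything.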

sumraa hussain

April 23, 2026 AT 02:31

man this is just wild!!!! like imagine the chaos of an AI just making up a whole refund policy lol!!!! absolute madness!!!!

Raji viji

April 23, 2026 AT 23:28

Finally someone mentions that your data is probably a dumpster fire. Most of you clowns think you can just plug a messy PDF into a vector DB and suddenly it's magic. Newsflash: garbage in, garbage out. If your internal docs are written by interns who can't spell, your RAG system is just going to be a faster way to hallucinate nonsense. Also, hybrid search isn't just a 'tip', it's basically mandatory if you don't want your AI confusing a corporate 'Apple' with a Granny Smith. Absolute basic stuff here but I guess some people need the hand-holding.

Rubina Jadhav

April 25, 2026 AT 12:44

This is very helpful. It makes the tech easy to understand.

Rajashree Iyer

April 27, 2026 AT 06:24

There is something profoundly poetic about the struggle between an AI's dream of a fictional reality and the cold, hard anchor of a database. We are essentially building digital cages for the imagination to prevent it from lying to us. It is a tragic mirror of human nature, where we crave the truth but are constantly seduced by the most confident voice in the room. The descent from 27% to 3% isn't just a statistic; it is a reclamation of reality in a world sliding toward synthetic delusion. We are not just coding a pipeline; we are architecting the very boundaries of truth for the next era of consciousness!

Jitendra Singh

April 27, 2026 AT 07:22

I think we can all agree that the focus on data cleaning is the most important part here. It keeps things stable for everyone involved.

anoushka singh

April 28, 2026 AT 02:02

Wait, so if I just use a vector DB I don't have to spend months retraining? That sounds like a dream for us lazy folks! I bet most companies just ignore the security leakage part until something actually breaks lol.

Parth Haz

April 30, 2026 AT 00:33

It is truly encouraging to see such a clear roadmap for implementing RAG. The distinction between fine-tuning and grounding is particularly valuable for those of us looking to maintain high standards of corporate integrity. I believe focusing on a small pilot, as suggested, is the most professional approach to ensure a successful rollout across the organization. This will undoubtedly lead to more reliable AI interactions.