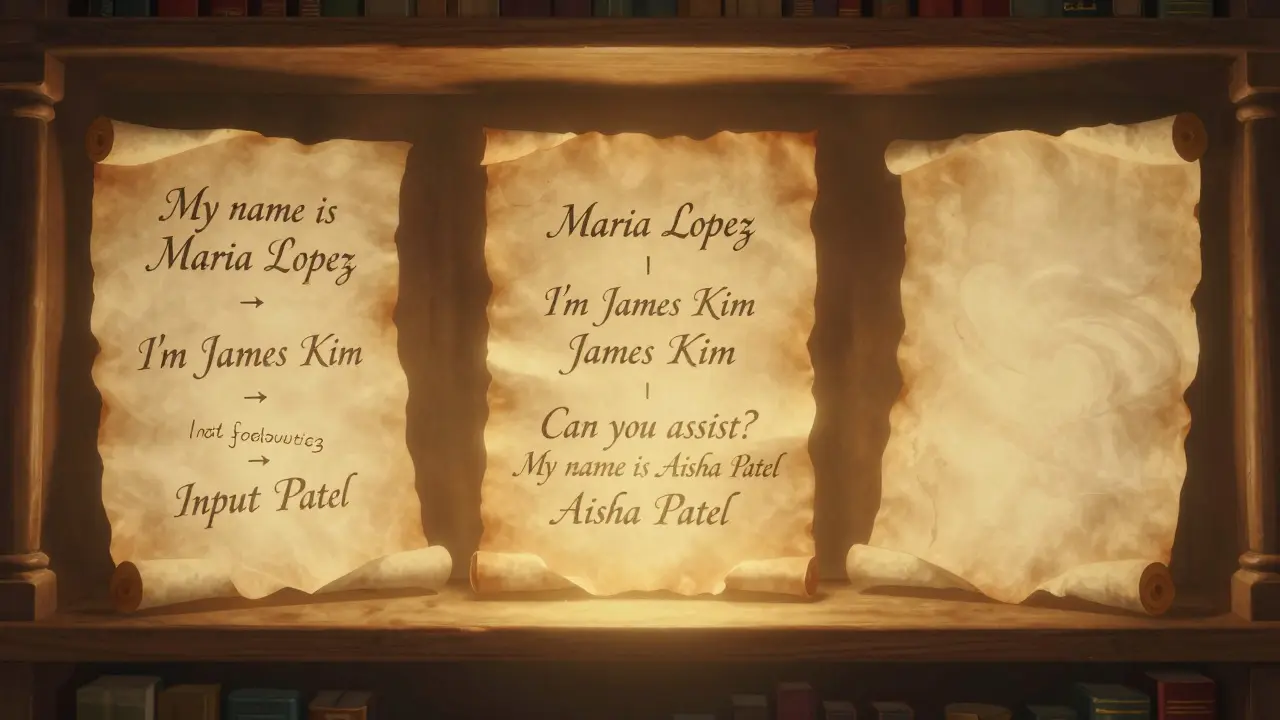


Few-Shot Prompting Strategies That Boost LLM Accuracy and Consistency

Few-shot prompting can raise LLM accuracy by 15-40% with just 2-8 examples, depending on the task and model. Learn how to choose the right examples, avoid over-prompting, and combine few-shot prompting with chain-of-thought for better results, all without fine-tuning.
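As a concrete picture of the idea, here is a minimal sketch of a few-shot chain-of-thought prompt builder. It assumes a plain-text `Q:`/`A:` layout, which is an illustrative choice and not a format the article prescribes. The `Example` class and `build_few_shot_prompt` helper are hypothetical names. Each demonstration writes out its reasoning before the answer, the number of shots is capped at eight to avoid over-prompting, and the final query is left open so the model continues the pattern. Sending the prompt to a model is left out because no specific API is named here.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Example:
    """One worked demonstration (hypothetical type): question, reasoning, answer."""
    question: str
    reasoning: str  # chain-of-thought steps written out in plain text
    answer: str


def build_few_shot_prompt(
    examples: Sequence[Example],
    query: str,
    max_examples: int = 8,
) -> str:
    """Assemble a few-shot chain-of-thought prompt (hypothetical helper).

    Requires at least 2 demonstrations so the model sees a repeatable
    pattern, and uses at most `max_examples` of them to avoid over-prompting.
    """
    if len(examples) < 2:
        raise ValueError("provide at least 2 examples")
    shots = examples[:max_examples]
    # Assumed layout: "Q: ..." then "A: <reasoning> The answer is <answer>."
    blocks = [
        f"Q: {ex.question}\nA: {ex.reasoning} The answer is {ex.answer}."
        for ex in shots
    ]
    # The query ends with an open "A:" so the model continues the pattern.
    blocks.append(f"Q: {query}\nA:")
    return "\n\n".join(blocks)


if __name__ == "__main__":
    demos = [
        Example("Tom has 3 apples and buys 2 more. How many apples does he have?",
                "He starts with 3 and adds 2, so 3 + 2 = 5.", "5"),
        Example("A box holds 4 pens. How many pens are in 3 boxes?",
                "Each box has 4 pens, so 3 * 4 = 12.", "12"),
    ]
    print(build_few_shot_prompt(demos, "Sara has 10 cookies and eats 4. How many are left?"))
```

Keeping the shot cap in a single parameter makes it easy to compare, say, two versus eight examples on your own task before settling on a count.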