Have you ever wondered how companies run massive AI models without burning through billions in electricity costs? The secret isn't just bigger chips; it's smarter architecture. Enter Sparse Mixture-of-Experts (Sparse MoE), the architectural breakthrough that lets generative AI scale efficiently by activating only a fraction of its parameters for any given task.

In 2026, we’ve moved past the era where "bigger is always better" meant throwing more compute at dense transformer models. Instead, the industry has pivoted toward conditional computation. This approach allows models with hundreds of billions of parameters to operate at the speed and cost of much smaller ones. If you’re building or deploying large language models (LLMs), understanding this shift is no longer optional-it’s essential for staying competitive.

What Is Sparse Mixture-of-Experts?

To grasp why Sparse Mixture-of-Experts is a machine learning architecture that divides an AI model into specialized subnetworks, imagine a hospital. In a traditional "dense" model, every doctor in the hospital tries to treat every patient simultaneously. It’s chaotic, expensive, and inefficient. In a Sparse MoE system, there’s a triage nurse-the gating network-who directs each patient to only one or two specialists who are best suited for their specific condition.

This concept was formalized in Shazeer et al.’s seminal 2017 paper, "Outrageously Large Neural Networks." But it wasn’t until recent years that hardware and software caught up. Today, frameworks like Mistral AI’s Mixtral 8x7B exemplify this. Despite having 46.7 billion total parameters, Mixtral activates only 12.9 billion per token. That’s less than 30% of its brain working at any given moment, yet it delivers performance rivaling dense models twice its active size.

- Expert Networks: Specialized feed-forward neural networks trained on specific data subsets.

- Gating Network: A routing mechanism that decides which experts handle each input token.

- Combination Function: Merges the outputs from the selected experts into a final response.

Why Sparse MoE Changes the Game for Scaling

The primary benefit of Sparse MoE is decoupling model capacity from inference cost. Traditionally, if you wanted a smarter model, you had to accept slower speeds and higher latency. With MoE, you get both.

Consider the math. NVIDIA’s analysis shows that sparse MoE models can reduce computational requirements by 60-80% compared to dense models of equivalent parameter count. This efficiency comes from the "noisy top-k gating" mechanism. For every word (token) processed, the gate adds Gaussian noise to probability scores and selects the top-k experts (usually k=2). This randomness prevents the model from becoming too reliant on a single expert, promoting diversity and robustness.

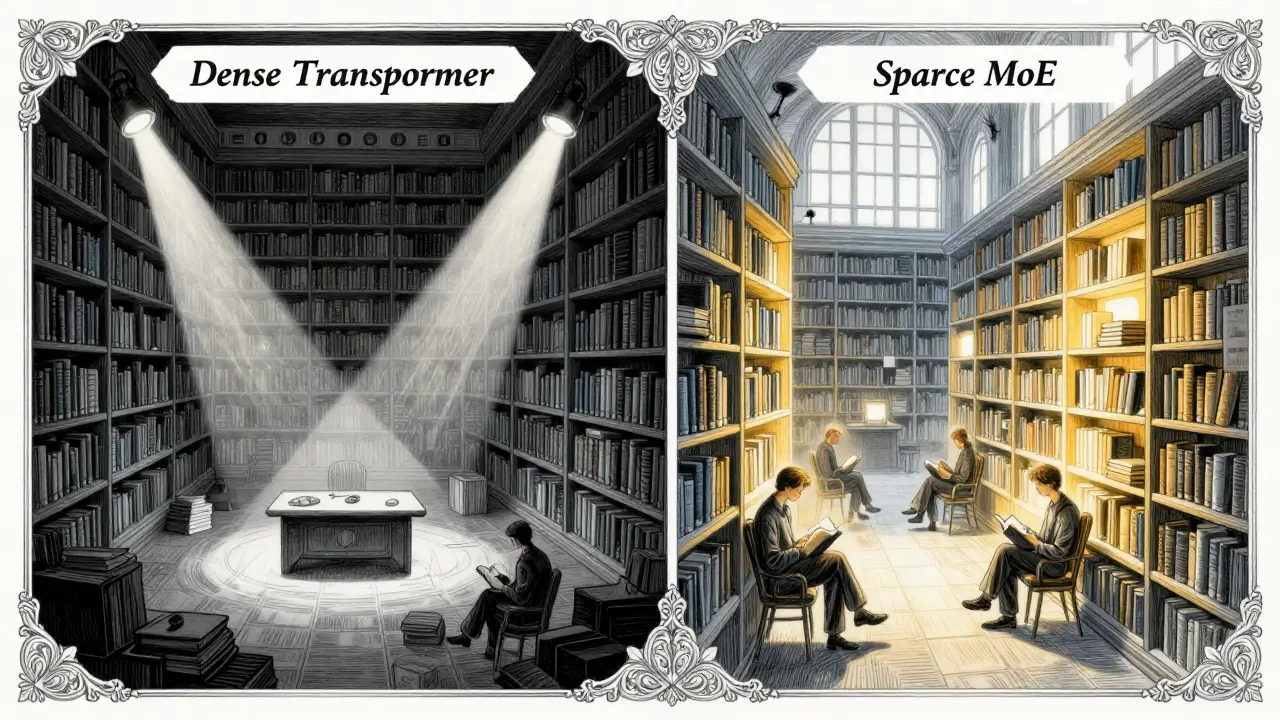

| Feature | Dense Transformer | Sparse MoE |

|---|---|---|

| Parameter Activation | All parameters active | Only top-k experts active (e.g., 2 out of 8) |

| Inference Speed | Slower as model grows | Faster relative to total parameter size |

| Training Complexity | Standard backpropagation | Requires load balancing loss |

| Hardware Utilization | High GPU tensor core usage | High memory bandwidth demand |

| Cost Efficiency | Low for small models | High for large-scale deployments |

Real-World Performance: Mixtral and Beyond

You might ask, "Does this actually work in practice?" The answer is a resounding yes. Mistral AI’s Mixtral 8x7B, released in late 2023, became an instant hit among developers. On standard NLP benchmarks, it matched Meta’s Llama2-70B-a model with over five times the active parameters-while using only 28% of the computational resources during inference.

Google’s LIMoE took this further by applying MoE to multimodal tasks. Announced in March 2023, LIMoE processes both images and text using a single sparse architecture. It achieved 88.9% accuracy on ImageNet while adding only 25% extra computation compared to single-modality models. This proves that MoE isn’t just about text; it’s a versatile framework for all types of data.

Even OpenAI’s GPT-4 and Google’s Gemini series reportedly utilize MoE techniques under the hood. By 2024, IDC reported that 42% of enterprise LLM deployments exceeding 10 billion parameters used MoE architectures. This trend is accelerating, with Gartner forecasting that 75% of such deployments will use MoE by 2026.

The Hidden Challenges: Training and Hardware

If Sparse MoE were perfect, everyone would use it immediately. But there are significant hurdles. The biggest issue is expert collapse. During training, some experts become "favorites," handling most tokens, while others remain idle. This imbalance reduces the model’s overall capability.

To fight this, engineers use load balancing loss. This penalty function forces the gating network to distribute traffic evenly. However, tuning this requires expertise. As noted in community discussions on Reddit’s r/MachineLearning, users often struggle with routing instability during early training phases. One developer reported that two experts handled 90% of tokens initially, requiring careful adjustment of regularization coefficients.

Hardware optimization is another pain point. GPUs are designed for dense matrix operations. Sparse computation patterns don’t align perfectly with GPU tensor cores, leading to inefficiencies. Dr. Tim Dettmers from the University of Washington warned that increased memory bandwidth requirements can negate computational savings on current hardware. You need high-bandwidth memory (HBM) to keep the experts fed. NVIDIA recommends A100 or H100 GPUs for training due to their 2TB/s memory bandwidth, whereas consumer cards like the RTX 4090 struggle with the memory overhead unless quantization techniques are applied.

Implementation Guide: Getting Started with MoE

Ready to try Sparse MoE? Here’s a practical roadmap for developers looking to integrate these architectures in 2026.

- Choose Your Framework: Hugging Face Transformers library offers robust support for MoE models. Look for pre-trained checkpoints like Mixtral 8x7B or Mistral’s newer Mixtral 8x22B.

- Configure Gating Parameters: Pay attention to the temperature parameter (τ). Smaller values (0.1-0.5) make the gate sharper, selecting fewer experts. Larger values (1.0-2.0) allow broader participation. Start with τ=1.0 for balanced exploration.

- Monitor Load Balance: Track the utilization rate of each expert during training. If variance exceeds 20%, increase the load balancing loss coefficient.

- Optimize for Inference: Use 4-bit quantization (e.g., GGUF format) to run large MoE models on consumer hardware. Mixtral 8x7B can achieve 18 tokens/second on a single RTX 4090 with proper quantization.

- Leverage Hybrid Architectures: Consider hybrid models that combine sparse and dense layers. Recent surveys show 37% of new MoE implementations use this approach to balance stability and efficiency.

Future Trends: What’s Next for MoE?

The evolution of Sparse MoE is far from over. Three trends are shaping the next generation of efficient AI:

1. Dynamic Expert Creation: Google’s Pathways MoE, announced in March 2025, moves beyond fixed expert sets. It dynamically creates new experts during training based on emerging data patterns. This adaptability could solve the static nature of current MoE designs.

2. Cross-Layer Expert Sharing: To reduce parameter bloat, researchers are reusing the same expert networks across multiple transformer layers. This technique cuts parameter counts by 15-22% without sacrificing performance, making models even more lightweight.

3. Hardware-Aware Routing: Future systems will optimize expert selection based on real-time GPU memory availability. Instead of purely semantic routing, the gate will consider computational load, ensuring smoother inference on constrained devices.

However, caution is warranted. Stanford HAI researchers warn that computational savings diminish as models scale beyond 1 trillion parameters due to communication overhead between experts. We may need new architectural innovations to maintain efficiency at extreme scales.

Conclusion: The Smart Way to Scale

Sparse Mixture-of-Experts isn’t just a niche technique; it’s the foundation of sustainable AI growth. By allowing models to be vast in knowledge but lean in execution, MoE bridges the gap between ambition and affordability. Whether you’re a startup optimizing costs or an enterprise deploying fraud detection systems, embracing MoE means you’re future-proofing your AI infrastructure. The question isn’t whether to adopt it, but how quickly you can master its nuances.

What is the main advantage of Sparse MoE over dense models?

The main advantage is computational efficiency. Sparse MoE models activate only a subset of parameters (experts) for each input, reducing inference costs by 60-80% compared to dense models of similar total parameter size, while maintaining high performance.

Which companies are leading in MoE implementation?

Mistral AI leads in open-source MoE models with its Mixtral series. Google dominates research with architectures like LIMoE and Pathways MoE. NVIDIA provides critical infrastructure support through optimized libraries like cuBLAS.

Can I run MoE models on my personal computer?

Yes, but with limitations. Using 4-bit quantization, models like Mixtral 8x7B can run on consumer GPUs like the RTX 4090, achieving speeds around 18 tokens per second. Training, however, requires high-end data center GPUs like NVIDIA A100 or H100.

What is expert collapse, and how do I prevent it?

Expert collapse occurs when certain experts are overused while others remain idle. Prevent it by implementing load balancing loss during training, which penalizes uneven distribution of tokens across experts.

Is MoE suitable for multimodal AI tasks?

Absolutely. Google’s LIMoE demonstrates that MoE architectures can effectively process both text and images within a single model, offering versatility and efficiency gains in multimodal applications.