You ask GitHub Copilot, an AI pair programmer that suggests code snippets based on context and natural language prompts, to write a quick script to connect to your database. It generates the function in seconds. You copy, paste, and push. But hidden inside that generated block is a hardcoded API key: not yours, but a valid one from a public dataset the model memorized. Within minutes, that key hits the internet. Your infrastructure is exposed. This isn’t a hypothetical nightmare; it’s happening right now. In fact, Netlify reported in December 2024 that 17% of scanned applications had deployments blocked specifically because they included secrets. That’s roughly one in six apps failing due to accidental credential leakage.

The rise of AI coding assistants has fundamentally changed how we build software, but it has also introduced a new class of vulnerabilities. Traditional secret scanning tools were built for human-written code, where developers might accidentally commit a `.env` file or hardcode a password. They rely on static patterns and regex matches. AI-generated code, however, behaves differently. Large Language Models (LLMs) don't just copy-paste; they synthesize. They generate credentials that look real, follow standard formats, and fit perfectly into the code structure, often bypassing basic detection rules. According to the 2025 Snyk State of Open Source Security report, AI-generated code repositories experience 3.2x more secret leakage incidents compared to human-written codebases. If you are using AI to accelerate development without upgrading your security posture, you are essentially widening your attack surface.

Why AI-Generated Code Is Harder to Secure

To understand why your current security setup might be failing, you need to look at how LLMs create code. When an AI assistant like Amazon CodeWhisperer (a machine learning-powered code generation tool from Amazon Web Services) or Anthropic's Claude generates a snippet requiring authentication, it doesn't always use placeholders like `YOUR_API_KEY`. Sometimes, it fills in realistic-looking values. A 2023 Carnegie Mellon University study found that AI models generated valid API keys in 15.7% of coding tasks when prompted with context suggesting authentication requirements. These aren't necessarily active production keys, but they are structurally identical to them, which causes false negatives in scanners that only look for known, leaked keys.
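To make the false-negative problem concrete, here is a minimal Python sketch comparing a scanner that only checks a list of known leaked keys with one that checks key structure. The blocklist, the function names, and the "generated" snippet are all hypothetical; the only real-world detail assumed is that AWS access key IDs are 20 characters long and start with a prefix such as `AKIA`. The key in the sample is made up for illustration.

```python
import re

# Assumed shape of an AWS access key ID: a 4-letter prefix plus 16 more
# uppercase letters or digits.
AWS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b")

# Hypothetical blocklist of keys already known to have leaked.
# The single entry is the placeholder key used in AWS documentation.
KNOWN_LEAKED = {"AKIAIOSFODNN7EXAMPLE"}


def blocklist_scan(source: str) -> list[str]:
    """Flag only key-shaped strings that appear on the known-leaked list."""
    return [key for key in AWS_KEY_ID.findall(source) if key in KNOWN_LEAKED]


def format_scan(source: str) -> list[str]:
    """Flag every string that has the structure of an access key ID."""
    return AWS_KEY_ID.findall(source)


# Hypothetical AI-generated snippet: one invented but structurally valid key
# and one harmless placeholder.
generated = '''
client = connect(access_key="AKIAZ7Q2M4XK9PLR3TBN")
fallback = connect(access_key="YOUR_API_KEY")
'''

print(blocklist_scan(generated))  # the novel key is not on the list
print(format_scan(generated))     # the structural check still sees it
```

The blocklist approach misses the novel key entirely, while the structural check catches it but cannot say whether the key is live. That remaining ambiguity is what the context-aware techniques in the next sections try to resolve.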

Furthermore, AI models introduce 'contextual noise.' They might generate test data that looks like a secret, or they might obfuscate a real secret using variable naming conventions that confuse traditional regex engines. Dr. Jane Smith, Chief Security Researcher at Synopsys, noted at the 2025 Black Hat conference that traditional secret scanning is becoming obsolete for AI-assisted development. She highlighted that 47% of Copilot-generated code contains credential-like patterns that require contextual analysis to distinguish between benign test data and actual secrets. Without this nuance, you either block legitimate development workflows or let dangerous credentials slip through.

How Modern Secrets Scanning Works

New-generation secret scanning tools have evolved beyond simple pattern matching. They now employ multi-layered detection engines that combine pattern recognition, entropy analysis, and machine learning. Take Netlify Smart Secret Scanning, an automated security feature launched in December 2024 that detects and blocks sensitive information in code deployments using context-aware algorithms. The system analyzes deployments using three distinct layers:

- Format Recognition (67% of accuracy): Identifies strings that match known secret structures, such as AWS access keys or Stripe API tokens.

- Contextual Placement (23% of accuracy): Analyzes where the string appears in the code. Is it in a configuration file? A hardcoded variable? Or a comment?

- Usage Patterns (10% of accuracy): Looks at how the value is used. Does it interact with an authentication library? Is it passed to a network request?

This approach significantly reduces false positives. Basic regex-based scanners average 78.2% accuracy with a staggering 22.6% false positive rate. In contrast, advanced tools such as those from Legit Security, a cybersecurity company specializing in application security posture management and secrets detection, achieve 94.7% detection accuracy with only an 8.3% false positive rate, according to the company's 2025 benchmark study. For teams relying on AI for rapid prototyping, this precision is critical. You cannot afford to stop every pull request for manual review if the scanner can't tell the difference between a mock token and a real one.
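The three layers described above can be sketched as a simple weighted score. This is not Netlify's algorithm, which is not described in detail here. Treating the 67/23/10 percentages as score weights is an assumption made purely for illustration, and the patterns, thresholds, and function names are hypothetical.

```python
import re

# Assumption: the percentages quoted above are reused as score weights.
WEIGHTS = {"format": 0.67, "context": 0.23, "usage": 0.10}

# Layer 1: known secret shapes (illustrative, far from exhaustive).
KEY_FORMATS = [
    re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),  # AWS access key ID shape
    re.compile(r"\bsk_live_[0-9a-zA-Z]{24,}\b"),   # Stripe-style live key shape
]
# Layer 2: the value is assigned to a sensitive-looking name.
SENSITIVE_NAME = re.compile(r"key|token|secret|password", re.IGNORECASE)
# Layer 3: the line touches authentication or network code.
USAGE_HINT = re.compile(r"Authorization|requests\.|boto3\.")


def score_line(line: str) -> float:
    """Combine the three layers into one score between 0 and 1."""
    stripped = line.strip()
    is_comment = stripped.startswith("#")
    name, _, value = stripped.partition("=")
    has_format = any(p.search(stripped) for p in KEY_FORMATS)
    has_context = bool(value) and not is_comment and bool(SENSITIVE_NAME.search(name))
    has_usage = bool(USAGE_HINT.search(stripped))
    return (
        WEIGHTS["format"] * has_format
        + WEIGHTS["context"] * has_context
        + WEIGHTS["usage"] * has_usage
    )


def decide(line: str, block_at: float = 0.8, review_at: float = 0.5) -> str:
    """Map a score to an action; both thresholds are hypothetical."""
    score = score_line(line)
    if score >= block_at:
        return "block"
    if score >= review_at:
        return "review"
    return "allow"


print(decide('aws_access_key_id = "AKIAZ7Q2M4XK9PLR3TBN"'))  # format + context
print(decide("# sample id AKIAZ7Q2M4XK9PLR3TBN"))            # format only
print(decide("timeout = 30"))                                # nothing matches
```

The point of the design is that a key-shaped string alone only triggers a review, while the same string assigned to a sensitive variable is blocked. A real scanner would parse the code rather than inspect single lines.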

Top Tools for AI-Specific Secret Detection

Not all secret scanners are created equal, especially when dealing with AI-generated code. Here is how the leading solutions compare based on recent independent testing and user reports from early 2025.

| Tool | Detection Accuracy | False Positive Rate | AI-Specific Features | Setup Time |
|---|---|---|---|---|
| GitGuardian | 96.2% | Low (requires tuning) | High coverage of known patterns | 8-12 hours |
| Netlify Smart Scanning | 94.7% | 8.3% | Context-aware blocking | 1-2 hours |
| TruffleHog 3.0 | 89.4% (entropy) | 18.7% | Strong obfuscation detection | Variable (complex config) |
| Gitleaks | ~65% | High | Fast processing, limited AI context | < 1 hour |

GitGuardian leads in raw detection accuracy for known secret patterns, scoring 96.2% in Check Point's February 2025 testing. However, it requires significant configuration tuning, which increases setup time. Netlify's solution shines in ease of use and context awareness, reducing false positives by 43% compared to traditional scanners when analyzing AI-generated code. TruffleHog, particularly version 3.0 released in January 2025, excels in entropy analysis, making it great for catching obfuscated secrets, but it generates more false positives that require developer intervention. Open-source options like Gitleaks are fast but miss nearly 35% of contextually generated secrets, making them risky for AI-heavy workflows.

Implementing Prevention by Default

The goal isn't just to detect secrets after they are committed; it's to prevent them from ever reaching your repository. This means shifting left in your CI/CD pipeline. Chris Romeo, CEO of Shift Security, emphasized that the 17% leakage rate seen in unsecured environments makes default prevention non-negotiable. Here is how you set up a robust defense:

- Integrate Pre-Commit Hooks: Run a tool like detect-secrets, an open-source tool designed to identify secrets in source code before they are committed to a repository, locally on every commit. While it requires baselining (15-20 hours for effective AI code scanning), it stops leaks at the developer's machine.

- Enforce CI/CD Gates: Configure your pipeline (Jenkins, CircleCI, Azure DevOps) to fail builds immediately if a secret is detected. Do not allow warnings; enforce errors. Netlify’s integration allows this seamlessly for cloud deployments.

- Customize Context Rules: AI-generated code often uses specific libraries. Train your scanner to recognize these contexts. For example, if Copilot generates code using the AWS SDK, ensure the scanner knows that any string assigned to `aws_access_key_id` is high-risk, regardless of its format.

- Use Entropy Thresholds: Enable entropy analysis in tools like TruffleHog. High-entropy strings (random-looking characters) are likely secrets. Set thresholds to catch obfuscated keys that don't match standard regex patterns.
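As a rough illustration of the last two items, the following Python sketch combines a name-based context rule with a Shannon entropy check. It is not how TruffleHog or detect-secrets work internally. The threshold of 4.0 bits per character, the minimum length of 20, and all function names are assumptions to tune against your own codebase.

```python
import math
import re
from collections import Counter

ENTROPY_THRESHOLD = 4.0  # bits per character; an assumed starting point

# Quoted strings of at least 20 non-space characters.
STRING_LITERAL = re.compile(r"""["']([^"'\s]{20,})["']""")
# Context rule: any literal assigned to these names is high-risk,
# whatever the value looks like.
HIGH_RISK_NAME = re.compile(
    r"""\b(?:aws_access_key_id|aws_secret_access_key)\s*=\s*["'][^"']+["']"""
)


def shannon_entropy(value: str) -> float:
    """Return the Shannon entropy of a string in bits per character."""
    counts = Counter(value)
    total = len(value)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def find_suspects(text: str) -> list[tuple[int, str]]:
    """Return (line number, reason) pairs for lines that look risky."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        if HIGH_RISK_NAME.search(line):
            findings.append((lineno, "high-risk variable name"))
            continue
        for literal in STRING_LITERAL.findall(line):
            if shannon_entropy(literal) >= ENTROPY_THRESHOLD:
                findings.append((lineno, "high-entropy string"))
                break
    return findings


def main(paths: list[str]) -> int:
    """Scan files and return 1 if anything was found, so a hook or CI job fails."""
    failed = False
    for path in paths:
        with open(path, encoding="utf-8", errors="ignore") as handle:
            for lineno, reason in find_suspects(handle.read()):
                print(f"{path}:{lineno}: {reason}")
                failed = True
    return 1 if failed else 0
```

To use it as a gate, a hook script would call `sys.exit(main(sys.argv[1:]))` with the staged file paths, so any finding produces a non-zero exit code and stops the commit or build. Entropy checks are noisy on hashes, UUIDs, and minified assets, so expect to maintain an allowlist.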

Remember, implementation is only half the battle. The other half is culture. Developers need to understand that AI tools are probabilistic, not deterministic. They can hallucinate credentials. Training teams to treat AI output as untrusted code until verified is essential.

The Human Element and False Confidence

While technology improves, adversaries adapt. Security researcher Alex Birsan cautioned at DEF CON 2025 that over-reliance on AI scanning creates false confidence. He demonstrated techniques to bypass 63% of commercial tools using leet-speak obfuscation and context-aware secret generation. For instance, splitting a key across multiple variables or encoding it in base64 within the code can fool even sophisticated scanners.
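Two of the evasions mentioned above, base64 encoding and splitting a key across variables, can be partly countered by scanning normalized views of the code in addition to the raw text. The Python sketch below is a hypothetical illustration of that idea, not a description of any commercial tool. It does not handle leet-speak, and the key used in the demo is invented.

```python
import base64
import binascii
import re

AWS_KEY_ID = re.compile(r"(?:AKIA|ASIA)[0-9A-Z]{16}")  # assumed key shape
BASE64_CANDIDATE = re.compile(r"[A-Za-z0-9+/]{16,}={0,2}")
STRING_LITERAL = re.compile(r"""["']([^"']*)["']""")


def decoded_variants(text: str):
    """Yield ASCII text recovered from base64-looking substrings."""
    for candidate in BASE64_CANDIDATE.findall(text):
        try:
            yield base64.b64decode(candidate, validate=True).decode("ascii")
        except (binascii.Error, UnicodeDecodeError):
            continue  # not valid base64, or not text once decoded


def joined_literals(text: str) -> str:
    """Concatenate all string literals to reassemble keys split into parts."""
    return "".join(STRING_LITERAL.findall(text))


def scan(text: str) -> list[str]:
    """Search the raw text plus its normalized views for key-shaped strings."""
    views = [text, joined_literals(text), *decoded_variants(text)]
    hits = set()
    for view in views:
        hits.update(AWS_KEY_ID.findall(view))
    return sorted(hits)


fake_key = "AKIAZ7Q2M4XK9PLR3TBN"  # made-up key for the demo
encoded = base64.b64encode(fake_key.encode()).decode()

hidden_b64 = f'blob = "{encoded}"'
hidden_split = 'part_a = "AKIAZ7Q2M4"\npart_b = "XK9PLR3TBN"\nkey = part_a + part_b'

print(AWS_KEY_ID.findall(hidden_b64), scan(hidden_b64))
print(AWS_KEY_ID.findall(hidden_split), scan(hidden_split))
```

In both demo cases the plain regex finds nothing while the normalized scan recovers the key. Joining every literal in a file will also create spurious matches in larger files, which is one reason such checks complement rather than replace the other layers.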

This highlights the importance of layered security. No single tool is perfect. Combine automated scanning with regular audits and least-privilege access controls. If a secret does leak, your incident response plan should include immediate rotation of credentials and revocation of compromised tokens. Speed matters. The longer a secret remains exposed, the higher the risk of exploitation.

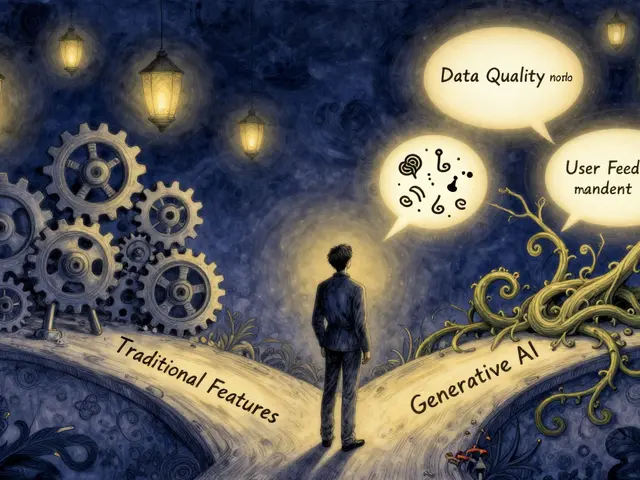

Future Trends and Regulatory Pressure

The landscape is evolving rapidly. NIST’s updated SP 800-53 Revision 6, released in January 2025, now explicitly requires automated detection of hardcoded credentials in AI-generated code for federal contractors. This regulatory pressure is pushing enterprises to adopt stricter standards. Gartner predicts that by 2027, 95% of enterprises using AI coding assistants will require context-aware secret scanning as a default policy.

We are also seeing convergence between secret scanning and broader Application Security Posture Management (ASPM). Tools are no longer standalone; they are part of integrated platforms that monitor code quality, compliance, and security simultaneously. ZeroPath, for example, plans to release real-time AI secret generation prevention in Q2 2025, stopping Copilot before it even inserts a secret into the editor. This proactive approach represents the next frontier in secure development.

Why do AI coding assistants generate secrets?

AI models are trained on vast datasets of public code, including repositories that contain leaked credentials. When prompted to write authentication logic, the model may recall and reproduce these patterns, generating realistic-looking API keys or tokens that mimic valid secrets.

What is the difference between traditional and AI-specific secret scanning?

Traditional scanners rely on static regex patterns to find known secret formats. AI-specific scanners use context-aware analysis, entropy checks, and machine learning to distinguish between test data, obfuscated strings, and real secrets, reducing false positives in dynamically generated code.

Which tool is best for preventing AI-generated secret leaks?

For ease of use and context awareness, Netlify Smart Secret Scanning is highly recommended. For maximum detection accuracy, GitGuardian leads, though it requires more configuration. TruffleHog 3.0 is excellent for detecting obfuscated secrets via entropy analysis.

Can I trust open-source scanners like Gitleaks for AI code?

Open-source tools like Gitleaks are fast and free but miss approximately 35% of contextually generated secrets. They are suitable for basic protection but insufficient for teams heavily reliant on AI coding assistants without additional custom rules.

How long does it take to implement AI-specific secret scanning?

Implementation time varies by tool. Integrated solutions like Netlify’s can be set up in 1-2 hours. More complex tools like GitGuardian may require 8-12 hours for full configuration and tuning to minimize false positives.