| Component | Primary Goal | Key Example |

|---|---|---|

| Preprocessors | Clean and compress raw modality-specific data | Wavelet downsampling for video |

| Orchestrator | Sync heterogeneous data streams and manage flow | NVIDIA NeMo / CrewAI |

| Postprocessors | Fuse different modalities into a single output | Late fusion for customer service bots |

The Heavy Lifting: Preprocessors and Data Ingestion

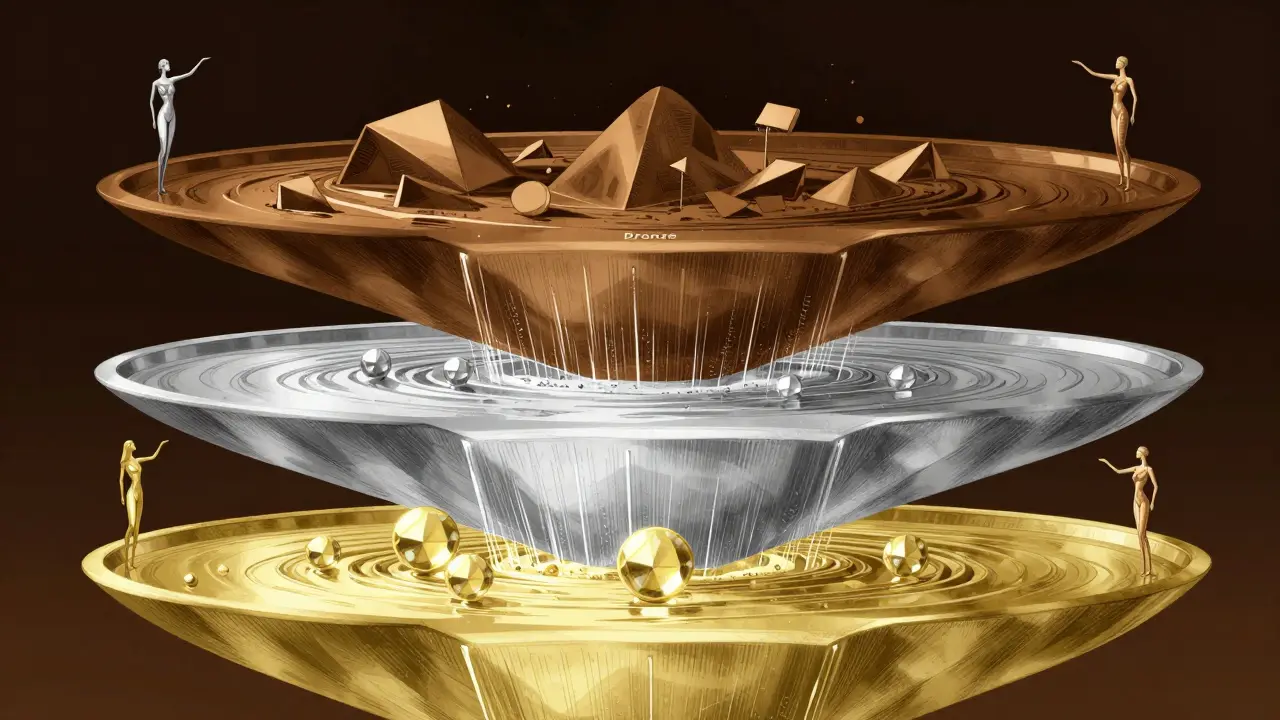

Before a model like GPT-4o can make sense of a prompt containing both a PDF and a voice memo, the data has to be stripped of noise and standardized. This is where preprocessors come in. They act as the first filter, ensuring the model doesn't waste computational power on irrelevant pixels or silent audio gaps. One of the biggest breakthroughs here is NVIDIA NeMo and its use of 3D wavelet downsampling. Instead of just shrinking an image, this technique compresses visual data by about 4.7x while keeping the important details intact. This means the system can ingest massive video files without crashing the VRAM of your GPUs. In high-stakes environments like healthcare, the preprocessing is even more structured. Microsoft uses a "medallion lakehouse" architecture. Think of it as a water filtration system:- Bronze Layer: Raw, messy data is dumped here exactly as it arrived.

- Silver Layer: Preprocessors clean the data and align schemas so a heart rate monitor's data looks consistent with a clinician's notes.

- Gold Layer: The data is transformed into feature stores, which are essentially high-speed shortcuts for the AI to access the most important information.

Orchestrating the Chaos: Managing the Flow

Orchestration is the "brain" that decides when a preprocessor should run and where the resulting data should go. It's not just about moving files; it's about solving the "modality impedance mismatch." This is a fancy way of saying that video and audio often drift apart. If your pipeline doesn't account for this, you end up with a 15-22% error rate because the AI is trying to associate a sound with a frame that happened two seconds ago. To fix this, modern orchestrators use causal structures. This forces the model to only look at past and present frames during tokenization, preventing it from "guessing" based on future data that hasn't been processed yet. When choosing an orchestration tool, the trade-off usually comes down to scale versus flexibility. If you need raw power for video, NVIDIA NeMo is the industry leader, often processing video 7x faster than its competitors. If you're building a complex swarm of specialized AI agents, CrewAI is a popular open-source choice. However, be warned: open-source tools often lack the enterprise-grade security found in proprietary systems, scoring lower on readiness scales for large corporations.

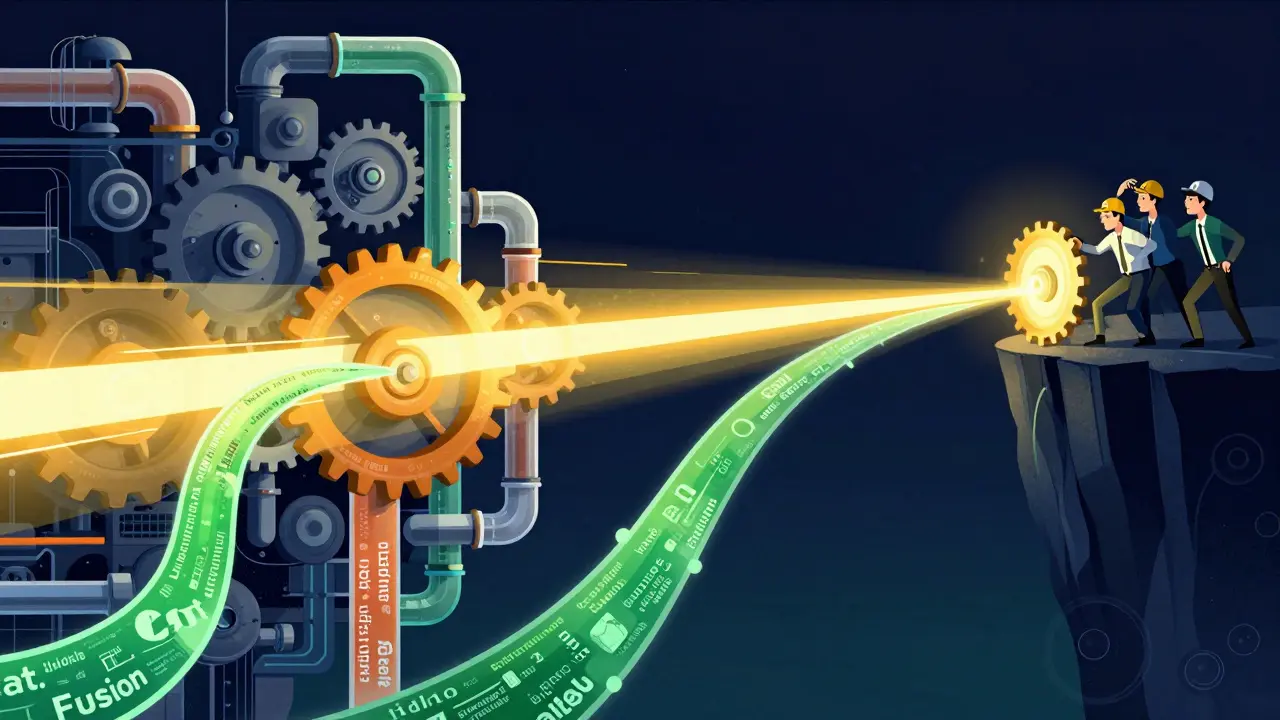

The Final Blend: Postprocessors and Fusion Techniques

Once the data is preprocessed and orchestrated, the AI generates a response. But the magic happens in the postprocessing stage, specifically through data fusion. Fusion is the process of merging different data streams into one meaningful answer. There are three main ways to do this, and picking the wrong one can tank your accuracy.- Early Fusion: This happens at the very start. You combine the text and image data into a single vector before it even enters the model. This is common in vision-language tasks and has an adoption rate of around 87% because it's computationally efficient.

- Mid-Fusion: Data is processed separately for a while and then merged in the middle layers of the neural network. You'll see this most often in medical imaging where a scan and a patient's history need to be weighed equally but separately first.

- Late Fusion: The model makes a prediction for the text and a separate prediction for the image, and then a postprocessor merges those two final answers. While this requires about 41% more computing power, it's 28% more accurate for customer service applications because it prevents one modality from "overpowering" the other.

The Hardware Reality: What You Actually Need

You can't run a multimodal pipeline on a standard laptop. The data ingestion rates for real-time processing can hit 2.8TB per hour. To keep up, your infrastructure needs to be beefy. At a minimum, you're looking at NVIDIA A100 GPUs with at least 40GB of VRAM. If you're deploying at an enterprise level, 100GB of RAM is the floor, and you'll need NVMe storage capable of at least 3.5GB/s throughput. If your storage is slow, your expensive GPUs will sit idle, creating a massive waste of budget.Real-World Pitfalls and the 'Complexity Cliff'

It sounds great on paper, but the reality of managing these pipelines is grueling. There is a phenomenon called the "orchestration complexity cliff." Essentially, adding one new modality (like adding audio to a text-image pipeline) doesn't just add 20% more work-it increases the overall pipeline complexity by about 3.2x. This happens because every new data type introduces new alignment problems and new failure points. Most companies find that once they hit 5 or 6 different modalities, the system becomes almost impossible to maintain without a dedicated team of 3-5 specialized engineers. This "hidden technical debt" is why some firms are moving toward "orchestration-as-a-service," letting cloud providers handle the messy plumbing.What is the difference between early and late fusion in multimodal AI?

Early fusion merges different data types (like text and images) into a single representation at the beginning of the pipeline, making it faster and more efficient. Late fusion allows the model to process each modality independently and only merges the final results at the end. Late fusion is more computationally expensive but generally more accurate for complex tasks like customer support bots.

Why is wavelet downsampling important for video preprocessing?

Video files are massive and can easily overwhelm GPU memory (VRAM). Wavelet downsampling, used in tools like NVIDIA NeMo, compresses visual data by up to 4.7x while preserving the essential structural details. This allows the AI to process high-resolution video without requiring impossible amounts of hardware.

What is a medallion lakehouse architecture?

It is a data organization strategy used by Microsoft to manage multimodal data. It consists of three layers: Bronze (raw data ingestion), Silver (cleaned and schema-aligned data), and Gold (optimized feature stores ready for AI consumption). This structure reduces redundant API calls and ensures data compliance, especially in healthcare.

How do you solve the 'modality impedance mismatch' problem?

This problem occurs when audio and video streams fall out of sync, leading to high error rates. It is solved using causal structures in the orchestration layer, which restrict the model to using only past and present frames during tokenization, ensuring that the AI doesn't associate audio with the wrong visual frame.

Which orchestration framework is best for RAG workflows?

For Retrieval-Augmented Generation (RAG) at scale, the combination of Zilliz and Milvus is highly effective, achieving over 92% precision. They excel at handling the rapid embedding and retrieval of multimodal data, which is critical for providing accurate, context-aware AI responses.