
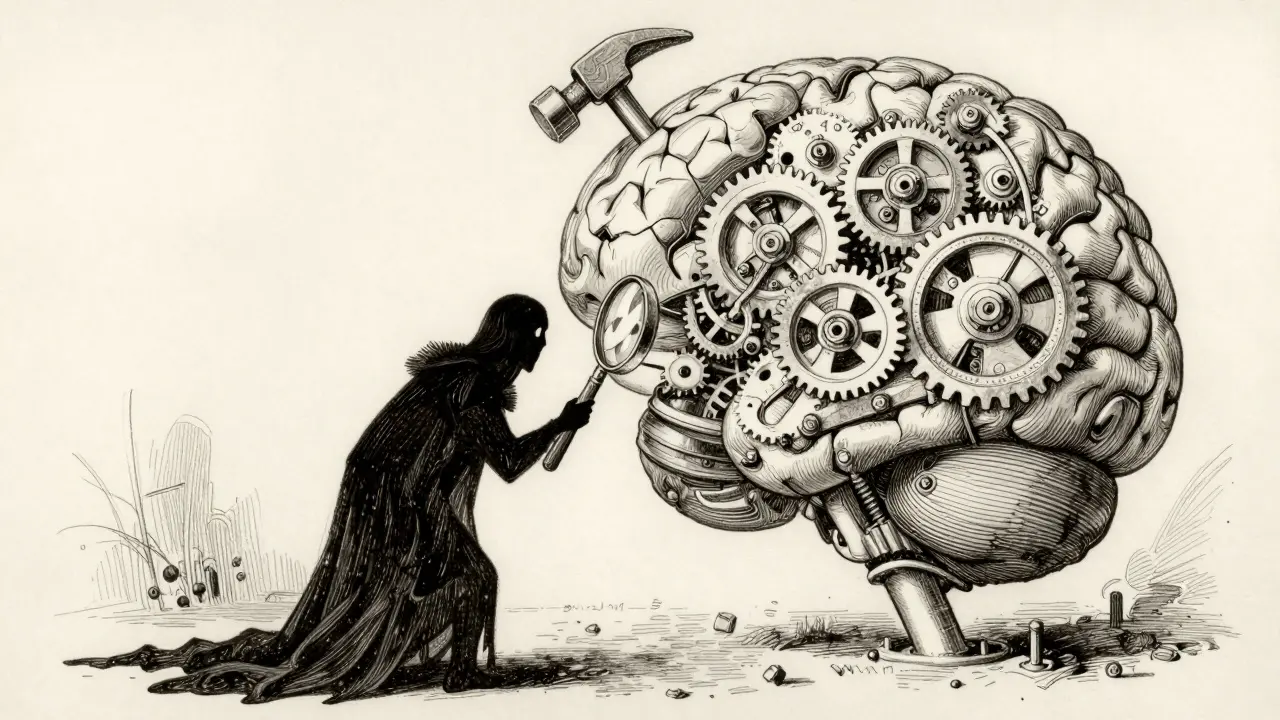

Red Teaming Prompts for Generative AI: Finding Safety and Security Gaps

Learn how to use red teaming strategies to find and close safety and security gaps in generative AI systems. This guide covers prompt injection, automation tools, and regulatory compliance.