Why Base Models Fall Short

You've probably tried prompting a base model like LLaMA-3 and felt frustrated. It knows the facts, but it doesn't know your rules. That's where Supervised Fine-Tuning (SFT) comes in: the process of adjusting a pre-trained large language model on labeled input-output pairs to teach it specific behaviors. SFT sits between massive pre-training and reinforcement learning. Without it, you're stuck guessing at prompts that might work today but fail tomorrow. The real value isn't just making the chatbot sound friendly; it's forcing the model to respect your data structure. In enterprise implementations such as the Walmart Labs case study, organizations saw a 63% reduction in response time after applying this method. But getting there requires discipline. You cannot just dump messy logs into a script and expect magic. The difference between success and failure usually comes down to three things: data quality, parameter configuration, and how you measure results.

The Three Stages of Alignment

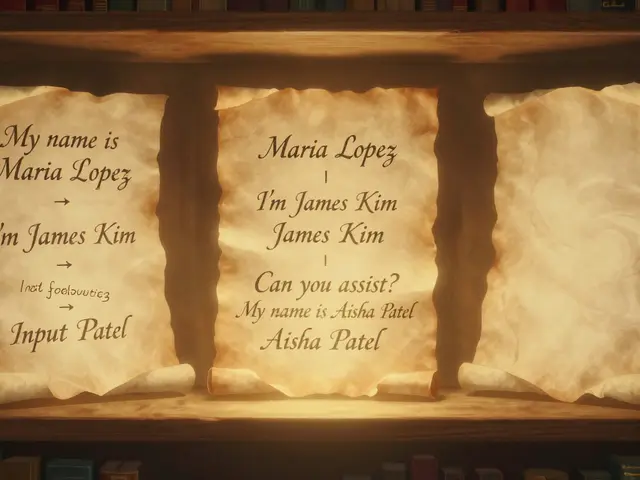

To understand where you stand, you need to see the full picture. Most people think training happens in one go, but the standard pipeline has distinct phases. First comes pre-training, which gives the model general knowledge. Then comes our focus, supervised fine-tuning, which teaches instruction following. Finally, Reinforcement Learning from Human Feedback (RLHF) optimizes for safety and human preferences. Skipping SFT is like building a house on sand: RLHF won't stick if the model hasn't first been trained to follow instructions. The engines you tune are large language models, neural networks that understand and generate human language, such as Google's text-bison@002 or Meta's LLaMA series. While some teams jump straight to full parameter updates, modern workflows favor Parameter-Efficient Fine-Tuning (PEFT), which saves money and compute. Techniques like LoRA freeze the original weights and train small low-rank adapter matrices that amount to a tiny fraction of the parameter count (often around 0.1%). You get near-identical performance without needing a farm of NVIDIA A100 GPUs.
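To make "a tiny fraction" concrete, here is a back-of-the-envelope sketch in plain Python. It assumes LLaMA-7B-like dimensions (hidden size 4096, 32 decoder layers, about 6.74B parameters), LoRA rank 8, and adapters on the query and value projections only; these are illustrative choices, not settings taken from this article. It also shows the LoRA forward pass, y = Wx + (alpha/r)·B(Ax), with the base weight W left untouched.

```python
def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters LoRA adds for one d_out x d_in weight: B is d_out x rank, A is rank x d_in."""
    return rank * (d_out + d_in)


# Assumed LLaMA-7B-like shapes; adapters on q_proj and v_proj only (illustrative).
HIDDEN, LAYERS, RANK = 4096, 32, 8
TOTAL_PARAMS = 6_740_000_000

trainable = LAYERS * 2 * lora_params(HIDDEN, HIDDEN, RANK)
fraction = trainable / TOTAL_PARAMS
print(f"LoRA trainable params: {trainable:,} ({fraction:.4%} of the base model)")


def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def lora_forward(W, A, B, x, alpha, rank):
    """Frozen base output W @ x plus the scaled low-rank update (alpha / rank) * B @ (A @ x)."""
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    return [b + (alpha / rank) * d for b, d in zip(base, delta)]


# Tiny demo: B starts at zero (the usual LoRA initialization), so the
# adapted layer initially reproduces the frozen base layer exactly.
W = [[1.0, 2.0], [3.0, 4.0]]
A = [[0.5, -0.5]]   # rank 1: shape 1 x 2
B = [[0.0], [0.0]]  # shape 2 x 1, zero-initialized
x = [1.0, 1.0]
print(lora_forward(W, A, B, x, alpha=16, rank=1))  # [3.0, 7.0]
```

With these assumed shapes the adapters come to roughly 4.2 million parameters, well under 0.1% of the model. During training only A and B would receive gradients, which is where the savings in gradient and optimizer memory come from.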

Data Preparation: The Real Bottleneck

Here is the hard truth: spending months cleaning data beats spending weeks tweaking hyperparameters. Google Research has reported that 1,500 expert-curated examples outperform 50,000 crowd-sourced ones. Quantity creates noise; quality creates intelligence. Curate datasets that reflect exactly how you want the model to behave. If you are building a medical QA bot, your examples shouldn't be generic chat logs; they must be physician-verified Q&A pairs.
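A first curation pass can be as simple as dropping thin and duplicate examples before any human review. The sketch below assumes records with hypothetical `prompt` and `response` fields and an arbitrary minimum response length; it is a starting filter, not a substitute for expert verification.

```python
def curate(examples, min_response_chars=20):
    """Keep examples with a non-empty prompt and a substantive response, dropping
    exact duplicates after trimming surrounding whitespace and lowercasing."""
    seen = set()
    kept = []
    for ex in examples:
        prompt = ex.get("prompt", "").strip()
        response = ex.get("response", "").strip()
        if not prompt or len(response) < min_response_chars:
            continue  # too thin to teach the target behavior
        key = (prompt.lower(), response.lower())
        if key in seen:
            continue  # duplicate of an example already kept
        seen.add(key)
        kept.append({"prompt": prompt, "response": response})
    return kept


raw = [
    {"prompt": "What does SFT stand for?", "response": "Supervised fine-tuning of a pre-trained model."},
    {"prompt": "what does SFT stand for? ", "response": "Supervised fine-tuning of a pre-trained model."},
    {"prompt": "Define LoRA.", "response": "Low-rank."},
    {"prompt": "", "response": "An answer with no question attached to it."},
]
clean = curate(raw)
print(len(clean))  # 1
```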

| Split | Share of data | Purpose |
|---|---|---|
| Training set | 70-80% | Used to update model weights |
| Validation set | 10-15% | Monitors overfitting during training |
| Test set | 10-15% | Final unbiased performance check |
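A reproducible split consistent with these ranges might look like the following sketch, which uses an 80/10/10 ratio and a fixed random seed so the held-out sets never change between runs. The function name and defaults are illustrative.

```python
import random


def split_dataset(examples, train_frac=0.8, val_frac=0.1, seed=42):
    """Shuffle once with a fixed seed, then cut into train / validation / test."""
    items = list(examples)
    random.Random(seed).shuffle(items)  # private RNG: leaves the global random state alone
    n_train = round(len(items) * train_frac)
    n_val = round(len(items) * val_frac)
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]  # whatever remains goes to test
    return train, val, test


train_set, val_set, test_set = split_dataset(range(1000))
print(len(train_set), len(val_set), len(test_set))  # 800 100 100
```

Deduplicate before splitting; otherwise paraphrased copies of a training example can leak into the test set and inflate the final score.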

Tools and Infrastructure Setup

You don't need to write a training loop from scratch unless you want a headache. The ecosystem has matured significantly.

Hugging Face Transformers, an open-source library for natural language processing tasks, pairs with TRL (Transformer Reinforcement Learning), a companion library that provides the SFTTrainer class. SFTTrainer handles the heavy lifting of batching, gradient accumulation, and logging.

For hardware, you have choices depending on budget. Cloud platforms like Vertex AI, Google's managed machine learning platform, offer managed instances where a single training job might cost $30 an hour. If you prefer to self-host, you can run LLaMA-2 7B on 8 A100 GPUs locally. Either way, memory management is critical. Using 4-bit quantization via the bitsandbytes library drops VRAM requirements from 14GB to 6GB for a 7B model, which lets you fine-tune on consumer-grade hardware if you keep batch sizes small.
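The 14GB figure follows from simple arithmetic: a 7B-parameter model stored in 16-bit precision needs 2 bytes per weight. The sketch below computes weight memory only; the gap between the 3.5GB it reports for 4-bit weights and the roughly 6GB quoted above would come from things it deliberately ignores, such as activations, quantization constants, adapter weights, and optimizer state.

```python
# Approximate bytes needed to store one weight at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16/bf16": 2.0, "int8": 1.0, "4-bit": 0.5}


def weight_memory_gb(n_params: float, precision: str) -> float:
    """Memory for the model weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[precision] / 1e9


for precision in BYTES_PER_PARAM:
    print(f"{precision:>9}: {weight_memory_gb(7e9, precision):5.1f} GB")
```

For a 7B model this prints 28.0, 14.0, 7.0 and 3.5 GB for the four precisions.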

Hyperparameters That Matter

Tweaking numbers randomly is a waste of electricity. Start with proven baselines. Learning rate is the most sensitive setting: pre-training uses high rates, but fine-tuning needs gentle adjustments. A range of 2e-5 to 5e-5 is standard. Pushing it higher, say above 5e-5, often causes the model to lose its base capabilities.
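Keeping the learning rate gentle usually also means ramping up to it. The sketch below is a linear warmup followed by linear decay, with an assumed peak of 2e-5 (the low end of the range above) and an assumed 10% warmup. Hugging Face Transformers offers `get_linear_schedule_with_warmup`, which follows a similar shape; this standalone function is only an illustration of the idea.

```python
def lr_at_step(step: int, total_steps: int, peak_lr: float = 2e-5, warmup_ratio: float = 0.1) -> float:
    """Linear warmup to peak_lr, then linear decay toward zero at total_steps."""
    warmup_steps = max(1, int(total_steps * warmup_ratio))
    if step < warmup_steps:
        # Ramp up gradually so early, noisy gradients can't make large updates.
        return peak_lr * (step + 1) / warmup_steps
    decay_steps = max(1, total_steps - warmup_steps)
    return peak_lr * max(0.0, (total_steps - step) / decay_steps)


schedule = [lr_at_step(s, total_steps=1000) for s in range(1000)]
print(f"start={schedule[0]:.1e} peak={max(schedule):.1e} end={schedule[-1]:.1e}")
```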

Epochs are another common source of mistakes. Training for 100 epochs sounds thorough, but you'll overfit quickly. For SFT, 1 to 3 epochs is typically sufficient; if your validation loss stops dropping after epoch 2, stop training. Batch size depends on your GPU memory, but 4 to 32 is the sweet spot, and gradient accumulation lets you simulate larger batches without needing more VRAM. You also need to configure your tokenizer correctly. If you batch prompts for generation with a decoder-only model, set padding to the left side; with right padding, new tokens are generated after the pad tokens instead of directly after the prompt, and the output degrades.
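Two of these mechanics are easy to check in miniature. The first part shows why gradient accumulation simulates a larger batch: averaging the gradients of equally sized micro-batches reproduces the full-batch gradient, here for a toy one-weight model with a mean-squared-error loss (the data and weight are made up). The second part is a minimal early-stopping rule on validation loss; `patience` and `min_delta` are illustrative parameters, and Transformers' `EarlyStoppingCallback` packages a similar idea for its `Trainer`.

```python
import math


def grad_mse(w, batch):
    """d/dw of the mean squared error for the 1-D model y_hat = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


data = [(1.0, 2.0), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8)]  # toy (x, y) pairs
w = 0.5

full_batch_grad = grad_mse(w, data)

micro_batch_size = 2
accum_steps = len(data) // micro_batch_size
accumulated = 0.0
for i in range(accum_steps):
    micro = data[i * micro_batch_size:(i + 1) * micro_batch_size]
    # Dividing by accum_steps mirrors the usual "loss / accumulation_steps" scaling.
    accumulated += grad_mse(w, micro) / accum_steps

print(math.isclose(full_batch_grad, accumulated))  # True


def should_stop(val_losses, patience=1, min_delta=0.0):
    """True when the last `patience` epochs failed to beat the earlier best by min_delta."""
    if len(val_losses) <= patience:
        return False
    best_before = min(val_losses[:-patience])
    recent_best = min(val_losses[-patience:])
    return recent_best >= best_before - min_delta


print(should_stop([1.20, 0.95]))        # False: still improving
print(should_stop([1.20, 0.95, 0.96]))  # True: epoch 3 did not improve
```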

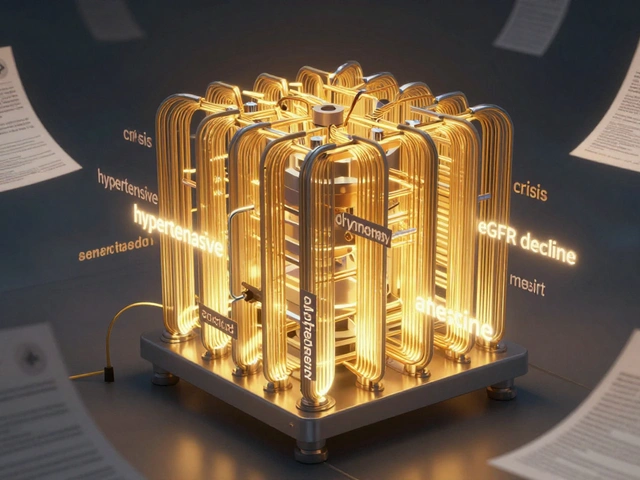

Evaluating Success Beyond Accuracy

Accuracy alone tells only half the story. You also need to measure perplexity, which gauges how well the model predicts held-out text (lower is better). A value below 15 on conversational tasks is a common sign of a fluent model. However, numbers don't catch hallucinations. A financial advice model could get 90% of its calculations right and still give dangerous legal advice. This is why qualitative evaluation remains essential. The Anthropic team recommends human evaluation for coherence and safety. You can automate testing with scripts, but you still need a human review cycle. Look for specific signs of regression: if the model used to say "Hello" politely and now says "HELLO" aggressively after tuning, you've broken alignment. JPMorgan Chase reported 28% hallucination rates even after extensive SFT, requiring additional guardrails. Don't trust the loss curve implicitly.
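Perplexity is the exponential of the average negative log-likelihood the model assigns to each token of held-out text. A minimal sketch, assuming you already have per-token natural-log probabilities; taking exp of the mean cross-entropy loss reported on a validation set gives the same quantity.

```python
import math


def perplexity(token_logprobs):
    """exp of the mean negative log-likelihood (natural log) over the evaluated tokens."""
    if not token_logprobs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(mean_nll)


# A model that always spreads probability evenly over 4 candidates.
print(round(perplexity([math.log(0.25)] * 10), 6))  # 4.0
# Mixed confidence: probabilities 1/2, 1/4, 1/8 average out to the same perplexity.
print(round(perplexity([math.log(0.5), math.log(0.25), math.log(0.125)]), 6))  # 4.0
```

Read against the below-15 target, a perplexity of 4 means the model is, on average, about as uncertain as if it were choosing among four equally likely tokens.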

Avoiding Common Pitfalls

We've all been burned before. One frequent issue is template inconsistency: if your dataset mixes JSON instructions with plain-text prompts, the model gets confused. Cameron Wolfe notes that varying instruction formats create confusion that takes 30% more data to fix. Stick to one format throughout your entire training set. Another pitfall is skipping validation. Teams eager to deploy often never set aside a held-out test set, and end up shipping models that perform well on their own dev machines but fail in production when faced with unseen data patterns. Always verify on a held-out set that mimics real-world usage.
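One way to enforce a single format is to normalize every raw record into the same fields and render it through one template. In this sketch the two input shapes (a JSON object and "Q:"/"A:" plain text) and the "### Instruction / ### Response" template are hypothetical; if your base model ships its own chat template, that is usually the one to standardize on.

```python
import json

# Hypothetical template: the exact markers matter less than using exactly one.
TEMPLATE = "### Instruction:\n{instruction}\n\n### Response:\n{response}"


def to_record(raw: str) -> dict:
    """Normalize a raw example given either as a JSON object or as a
    "Q:" line followed by an "A:" line."""
    raw = raw.strip()
    if raw.startswith("{"):
        obj = json.loads(raw)
        return {"instruction": obj["instruction"].strip(), "response": obj["response"].strip()}
    question, sep, answer = raw.partition("\nA:")
    if not sep or not question.startswith("Q:"):
        raise ValueError(f"unrecognized format: {raw[:40]!r}")
    return {"instruction": question[len("Q:"):].strip(), "response": answer.strip()}


def render(record: dict) -> str:
    """Render a normalized record through the single shared template."""
    return TEMPLATE.format(**record)


from_json = '{"instruction": "Summarize SFT in one sentence.", "response": "SFT tunes a model on labeled pairs."}'
from_text = "Q: Summarize SFT in one sentence.\nA: SFT tunes a model on labeled pairs."
print(render(to_record(from_json)) == render(to_record(from_text)))  # True
```

Raising on unrecognized input, rather than guessing, keeps a third format from silently slipping into the training set.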

Looking Ahead: Automation and Standards

The landscape is shifting rapidly towards automation. Google Cloud introduced automated data quality scoring in 2024, rejecting low-quality examples automatically. This reduces manual curation time significantly. By 2026, we expect 80% of implementations to use AI-assisted data curation. The industry is moving away from purely manual labeling toward synthetic data generation. Anthropic plans to incorporate synthetic data to overcome dataset limitations. However, this brings new challenges regarding data provenance. The EU AI Act requires demonstrable oversight of all training data. Keep logs of where every example comes from to stay compliant.
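Keeping logs of where every example comes from can start as one JSON line per example. The field names below (`source`, `license`, `synthetic`, `reviewer`) and the sample values are hypothetical, and recording them is not by itself evidence of compliance with the EU AI Act; it simply makes provenance questions answerable later. Hashing a canonical JSON form gives each example a stable ID regardless of key order.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def example_id(example: dict) -> str:
    """Stable content hash: keys are sorted so field order doesn't change the ID."""
    canonical = json.dumps(example, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def provenance_entry(example: dict, source: str, license_note: str,
                     synthetic: bool, reviewer: Optional[str] = None) -> dict:
    """Build one provenance record for a training example (hypothetical schema)."""
    return {
        "id": example_id(example),
        "source": source,          # where the example came from
        "license": license_note,   # terms under which it may be used
        "synthetic": synthetic,    # generated by a model rather than collected
        "reviewer": reviewer,      # who verified it, if anyone
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }


ex = {"prompt": "What is SFT?", "response": "Supervised fine-tuning."}
entry = provenance_entry(ex, source="internal-support-tickets", license_note="internal",
                         synthetic=False, reviewer="reviewer-17")
print(json.dumps(entry))  # one line of a JSONL provenance log
```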

Do I need a large dataset to start fine-tuning?

No, quality matters more than volume. Studies show 1,500 expert examples often beat 50,000 noisy ones. Start with 500 high-quality pairs to establish a baseline before scaling up.

Is LoRA better than full fine-tuning?

Yes, for most use cases. LoRA achieves 95-98% of full fine-tuning performance while reducing memory usage from 14GB to 0.5GB for smaller models, making it much cheaper to run.

How long does a typical SFT job take?

On cloud infrastructure like Vertex AI, a job might take 3-4 hours. Locally with 8x A100s, a 7B model tuning on a medium dataset takes roughly 24-48 hours.

Can SFT fix bad reasoning skills?

Not effectively. SFT excels at factual recall and instruction following. DeepMind found it improves question answering by 72% but multi-step reasoning by only 38%.

What happens if my model forgets general knowledge?

This is called catastrophic forgetting. To prevent it, keep your learning rate low (around 2e-5) and limit training to 1-3 epochs. Mixing general conversation data into your fine-tuning set can also help retain basics.
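Mixing in general data can be as simple as sampling a fixed share of general-purpose conversations into the task set. The 10% share, the seed, and the function name below are illustrative assumptions, not tested recommendations.

```python
import random


def mix_in_general(task_examples, general_examples, general_share=0.1, seed=0):
    """Add enough general examples that they make up about `general_share` of the result."""
    if not 0 <= general_share < 1:
        raise ValueError("general_share must be in [0, 1)")
    n_general = round(len(task_examples) * general_share / (1 - general_share))
    rng = random.Random(seed)
    sampled = rng.sample(general_examples, min(n_general, len(general_examples)))
    mixed = list(task_examples) + sampled
    rng.shuffle(mixed)  # interleave so general data isn't clumped at the end
    return mixed


task = [f"task-{i}" for i in range(900)]
general = [f"general-{i}" for i in range(5000)]
mixed = mix_in_general(task, general)
print(len(mixed), sum(x.startswith("general") for x in mixed))  # 1000 100
```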

Lauren Saunders

March 27, 2026 AT 15:50

It is often superficially presented that fine-tuning requires merely labeled data. The pedagogical nuance is frequently lost to practitioners seeking quick results. Many organizations prioritize quantity over the curation process entirely. This creates a fragile foundation for any production deployment. Furthermore, the discussion regarding catastrophic forgetting is woefully understated in current literature. Without rigorous validation protocols, one risks degrading general capabilities significantly. We observe that models lose baseline knowledge surprisingly easily during optimization cycles. Consequently, the proposed guidelines require significantly more elaboration on testing procedures. Data splitting is indeed crucial, yet insufficient without proper metric tracking. Perplexity scores alone fail to capture semantic degradation patterns effectively in high-stakes environments. One must consider the ethical implications of proprietary model ownership as well. Current methodologies favor speed at the cost of safety verification steps. It remains essential to maintain human oversight throughout the reinforcement phases. Practitioners who ignore these structural weaknesses will face inevitable failure down the road. Ultimately, the playbook lacks depth regarding long-term maintenance strategies.

sonny dirgantara

March 28, 2026 AT 16:05

thats actually really useful info for me today thx

Kendall Storey

March 29, 2026 AT 04:23

LoRA implementation details are critical here regarding memory efficiency. When utilizing parameter-efficient methods you reduce overhead drastically compared to full weight updates. Standard configurations often overlook quantization benefits before even starting the job. Alpha values should be tuned specifically for your task domain rather than defaults. Gradient accumulation allows for larger effective batch sizes without VRAM overflow issues.

Pamela Tanner

March 29, 2026 AT 09:07

The distinction between configuration parameters and hyperparameters warrants clarity in implementation guides. Your explanation regarding gradient accumulation is technically accurate for modern architectures. Precision loss can occur if mixed precision training is not configured correctly during initialization. Ensuring consistent tokenization across training and inference stages prevents subtle alignment drift.

Andrew Nashaat

March 29, 2026 AT 09:41

Data privacy matters!!! You cannot ignore the legal framework surrounding training datasets.. The industry moves too fast without safeguards!! Ethics must come first.. Compliance is non-negotiable..... Using private information violates fundamental rights!! Guardrails are essential...

Nathan Jimerson

March 30, 2026 AT 08:25

We can achieve both performance and compliance simultaneously through careful dataset selection. Synthetic data generation offers a viable path forward while mitigating privacy concerns. Maintaining transparent logs ensures accountability at every stage of development.

Janiss McCamish

April 1, 2026 AT 06:13

Learning rate scheduling needs adjustment for smaller datasets specifically. Warmup steps prevent early instability during initial epochs. Validation checks should trigger early stopping when metrics plateau.

Ashton Strong

April 1, 2026 AT 09:34

I would like to respectfully suggest that early stopping criteria benefit from monitoring multiple metrics simultaneously. A single loss value may not capture model behavior comprehensively across different contexts.

Richard H

April 1, 2026 AT 11:33

American technology leads the world in this sector. Other nations struggle to match our compute infrastructure. Domestic investment protects national security interests in AI deployment.

Steven Hanton

April 3, 2026 AT 01:00

Global collaboration enhances innovation regardless of geographical boundaries. Sharing best practices improves safety standards across all regions involved.