Generative AI doesn’t work because it’s smart. It works because of the data you feed it. Skip the data strategy, and your chatbot will give customers wrong account numbers. Your sales tool will predict demand based on outdated inventory logs. Your internal knowledge assistant will cite documents that don’t exist. The truth is simple: generative AI is only as good as the data behind it.

Why Your Old Data Systems Won’t Cut It

Most companies built their data systems for transactional tasks: billing, payroll, inventory tracking. These systems are great at handling structured numbers and fixed fields. But generative AI? It needs messy, real-time, unstructured data: customer service transcripts, internal wikis, product manuals, email threads, support tickets. It needs context. It needs connections between ideas, not just columns in a spreadsheet. Traditional data lakes? They’re full of stale, siloed files. You can’t train a model on that and expect accurate answers. A 2025 MIT study found that 95% of generative AI pilot projects failed because their data was a mess. The 5% that succeeded? They didn’t just collect more data. They rebuilt how they treated it.

The Three Pillars of a Working Data Strategy

Forget vague goals like "improve data quality." A real strategy has three non-negotiable pillars: quality, access, and security. Get all three right, and your AI stops hallucinating and starts delivering value.

1. Data Quality: 98% Accuracy Isn’t Optional

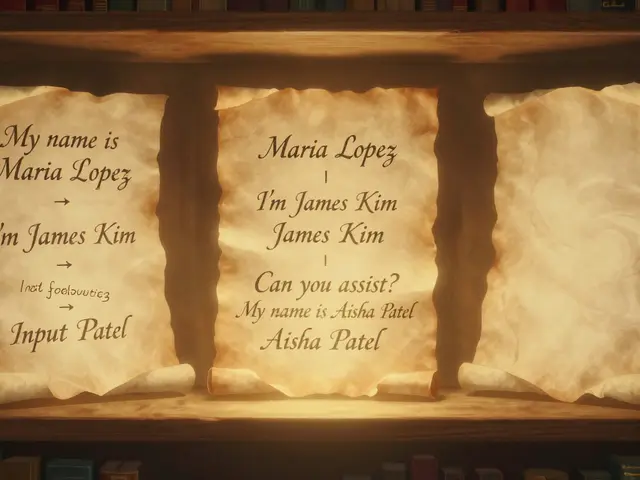

Generative AI doesn’t know what’s true. It only knows patterns. If your training data has duplicate entries, broken links, outdated prices, or typos in product descriptions, your AI will learn those mistakes and repeat them. That’s not a bug; it’s a feature of bad data. Successful teams treat data like a product. They don’t just dump files into a bucket. They clean, validate, and label every piece of content before it touches the model. One retailer automated validation checks across 100% of its training data. Result? A 58% drop in model errors, according to MIT’s 2025 findings. You need automated checks for:

- Duplicates (same document, 3 different versions)

- Outdated information (last updated 2021? Time to retire it)

- Missing context (a product review without the product name)

- Formatting chaos (PDFs with scanned text, corrupted CSVs)
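The four checks above can be automated with very little code. The sketch below is a minimal illustration, not any particular vendor's tool: the `Document` shape, the `validate` function, the 2023 cutoff date, and the sample documents are all hypothetical. It assumes each document carries a last-updated date and a product-name field. Exact hashing after normalizing case and whitespace only catches near-identical copies; catching "3 different versions" with real edits would need fuzzy near-duplicate detection, which is out of scope here.

```python
import hashlib
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Document:
    doc_id: str
    text: str
    last_updated: date
    product_name: Optional[str] = None  # context field; None means it is missing


def content_hash(text: str) -> str:
    # Normalize case and whitespace so near-identical copies hash the same.
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def validate(docs: list, cutoff: date) -> dict:
    """Return {doc_id: [issue labels]} for every document that fails a check."""
    issues = {}
    first_seen = {}  # content hash -> doc_id of the first copy seen
    for doc in docs:
        problems = []
        h = content_hash(doc.text)
        if h in first_seen:
            problems.append(f"duplicate_of:{first_seen[h]}")
        else:
            first_seen[h] = doc.doc_id
        if doc.last_updated < cutoff:
            problems.append("outdated")
        if not doc.product_name:
            problems.append("missing_context")
        # U+FFFD is what bad decoding leaves behind; empty text often means failed PDF extraction.
        if not doc.text.strip() or "\ufffd" in doc.text:
            problems.append("formatting")
        if problems:
            issues[doc.doc_id] = problems
    return issues


# Hypothetical sample data, one document per failure mode.
docs = [
    Document("a", "Return within 30 days.", date(2025, 6, 1), "Headphones X"),
    Document("b", "return within  30 days.", date(2025, 7, 1), "Headphones X"),
    Document("c", "Old price list", date(2021, 3, 1), "Speaker Y"),
    Document("d", "Great sound!", date(2025, 5, 1)),
    Document("e", "Scanned \ufffd\ufffd text", date(2025, 5, 1), "Manual Z"),
]
report = validate(docs, cutoff=date(2023, 1, 1))
print(report)
```

Documents that come back in `report` would be fixed or retired before they reach the model; anything absent from it passed every check.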

2. Access: Unified, Contextual, and Fast

You can’t build a smart assistant if your data lives in 12 different systems. HR data in Workday. Sales data in Salesforce. Support logs in Zendesk. Internal docs in Notion. Your AI needs to pull from all of them, in real time.

That’s where vector databases come in. Tools like Pinecone or Weaviate store data as numerical embeddings: mathematical representations of meaning. Instead of matching keywords, your AI searches by context. If someone asks, "What’s the return policy for defective headphones?", it doesn’t just look for the phrase "return policy." It finds documents about customer complaints, warranty terms, and support replies that match the intent. High-performing systems handle over 10,000 queries per second with under 500ms latency. That’s not a suggestion. It’s a requirement if you want real-time customer service or dynamic sales forecasting.

The best implementations use Retrieval-Augmented Generation (RAG). It’s not magic. It’s simple: your AI retrieves the most relevant data before answering, then answers based on that data. Less guessing. Far fewer made-up facts. Answers grounded in your trusted sources.

3. Security: Who Saw What, and When?

If your AI has access to customer PII, salary data, or proprietary R&D notes, you’re not just running a tech experiment; you’re managing a legal risk. Every piece of data used to train or answer a query must be tracked. Who accessed it? When? Was it anonymized? Was it shared outside the company? Audit trails aren’t optional. They’re your shield. GDPR, CCPA, and new AI regulations in the EU and U.S. demand this. In 2025, 73% of enterprises cited compliance as a top driver for better data governance. One company skipped this step and saw 3.2 times more violations than peers with strong controls. You need:

- Role-based access: Sales team sees customer history. Legal team sees contracts. HR sees compensation data.

- Data masking: Replace names, SSNs, and account numbers with placeholders in training sets.

- Retention policies: Delete training data after model deployment unless retention is legally required.

- Encryption: At rest, in transit, and during processing.
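Two of these controls, role-based access with an audit trail and data masking, can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `ROLE_PERMISSIONS` map, the `ACCT-` account-number format, and the `fetch` and `mask` helpers are hypothetical, and regex masking is a stand-in for a dedicated PII-detection tool. Encryption and retention are infrastructure concerns and are not shown.

```python
import re
from datetime import datetime, timezone

# Hypothetical role -> data categories map, mirroring the examples above.
ROLE_PERMISSIONS = {
    "sales": {"customer_history"},
    "legal": {"contracts"},
    "hr": {"compensation"},
}

SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
ACCOUNT_PATTERN = re.compile(r"\bACCT-\d{6,}\b")  # hypothetical account-number format

AUDIT_LOG = []  # in production this would be an append-only store


def fetch(user: str, role: str, category: str, record: dict) -> dict:
    """Return the record if the role may read this category; log every attempt either way."""
    allowed = category in ROLE_PERMISSIONS.get(role, set())
    AUDIT_LOG.append({
        "user": user,
        "role": role,
        "category": category,
        "allowed": allowed,
        "at": datetime.now(timezone.utc).isoformat(),
    })
    if not allowed:
        raise PermissionError(f"role {role!r} may not read {category!r}")
    return record


def mask(text: str, names: list) -> str:
    """Replace SSNs, account numbers, and known names with placeholders."""
    text = SSN_PATTERN.sub("[SSN]", text)
    text = ACCOUNT_PATTERN.sub("[ACCOUNT]", text)
    for name in names:
        text = text.replace(name, "[NAME]")
    return text


masked = mask("Jane Doe, SSN 123-45-6789, account ACCT-0042917", ["Jane Doe"])
print(masked)  # [NAME], SSN [SSN], account [ACCOUNT]
```

Logging denied attempts as well as granted ones is the point: the audit trail has to answer "who tried to see what, and when," not only "who succeeded."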

What Happens When You Skip the Strategy?

One company built a GenAI chatbot for customer support using their CRM data, without cleaning it. The CRM had 32% duplicate records. Some customers had three different addresses. Others had phone numbers from 2018. The AI gave out wrong account info 32% of the time. Customers got billed twice. Refunds were denied because the system thought they’d already been refunded. The company lost $2.3 million in revenue before shutting it down. That’s not an outlier. It’s the norm. Compare accuracy on domain-specific tasks:

- 65-80% accuracy on domain tasks (with RAG and clean data)

- 15-20% accuracy on domain tasks (without proprietary data integration)
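The CRM failure above, one customer stored three times with three addresses, is the kind of problem a consolidation pass catches before any model sees the data. The sketch below is a minimal illustration, assuming a normalized email address identifies one customer and that the most recently updated record wins; the field names and sample records are hypothetical. Real entity resolution usually uses fuzzier matching and flags conflicting fields for human review instead of silently picking one.

```python
from datetime import date


def customer_key(record: dict) -> str:
    # Assumption: a normalized email identifies one customer.
    return record["email"].strip().lower()


def consolidate(records: list) -> list:
    """Keep one record per customer: the most recently updated one."""
    latest = {}
    for rec in records:
        key = customer_key(rec)
        if key not in latest or rec["updated"] > latest[key]["updated"]:
            latest[key] = rec
    return list(latest.values())


# Hypothetical CRM rows: one customer entered three times, one entered once.
crm = [
    {"email": "Ana@Example.com", "address": "12 Oak St", "updated": date(2018, 4, 2)},
    {"email": "ana@example.com ", "address": "9 Pine Ave", "updated": date(2025, 1, 15)},
    {"email": "ana@example.com", "address": "3 Elm Rd", "updated": date(2022, 8, 30)},
    {"email": "raj@example.com", "address": "5 Birch Ln", "updated": date(2024, 6, 1)},
]
clean = consolidate(crm)
duplicate_rate = 1 - len(clean) / len(crm)
print([r["address"] for r in clean], duplicate_rate)  # ['9 Pine Ave', '5 Birch Ln'] 0.5
```

Measuring `duplicate_rate` before launch is cheap, and a number like 32% is a signal to stop and clean before building anything on top.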

Where It Works Best

Not every use case needs a full data overhaul. But these three areas deliver clear ROI when done right:

- Customer service automation: One company reduced resolution time by 30% in six months by feeding their AI real-time support logs, product manuals, and past ticket resolutions.

- Internal knowledge management: Employees stopped wasting hours searching through shared drives. One firm cut document search time by 40% by using RAG to pull from their internal wiki, Slack archives, and project docs.

- Sales forecasting: A financial services firm combined historical sales, market trends, and customer behavior data into a single pipeline. Forecast accuracy jumped 15% in Q4 2025.

How Long Does It Take? What Does It Cost?

This isn’t a two-week project. BlackHills AI’s 2025 roadmap breaks it down:

- Assessment: 1-3 months. Map what data you have. Identify gaps. Align stakeholders.

- Planning: 2-3 months. Pick your first use case. Define success metrics. Choose tools.

- Pilot: 3-6 months. Build the pipeline. Test with real users. Iterate.

- Scaling: 6-12 months. Roll out across departments. Train teams. Automate monitoring.
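Adding the phase ranges up shows what the roadmap implies end to end. This small calculation assumes the four phases run back to back with no overlap (the roadmap itself does not say whether they overlap); `cumulative_windows` is a hypothetical helper name.

```python
# Month ranges (min, max) per phase, taken from the roadmap above.
PHASES = {
    "Assessment": (1, 3),
    "Planning": (2, 3),
    "Pilot": (3, 6),
    "Scaling": (6, 12),
}


def cumulative_windows(phases: dict) -> dict:
    """Assuming phases run back to back, return when each one finishes (earliest, latest month)."""
    earliest = latest = 0
    windows = {}
    for name, (lo, hi) in phases.items():
        earliest += lo
        latest += hi
        windows[name] = (earliest, latest)
    return windows


for phase, (lo, hi) in cumulative_windows(PHASES).items():
    print(f"{phase}: done by month {lo}-{hi}")
```

Under that assumption, the pilot wraps up somewhere between month 6 and month 12, and full scaling lands between month 12 and month 24.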

What Skills Do You Need?

You can’t just hire an AI engineer and call it done. You need:

- Data engineers who know how to process unstructured data (PDFs, emails, videos)

- MLOps experts to monitor model performance and catch drift

- Domain specialists (sales, HR, legal) to ensure context makes sense

- Compliance officers to map data use to regulations

What’s Next? The Future of Data Strategy

By 2026, 85% of enterprises will have formal data governance for AI. 67% will use decentralized architectures like data mesh, where each team owns its data product. Real-time streaming will replace batch reports. Non-technical users will explore data with AI assistants, not SQL queries. But the core won’t change: if you don’t control your data, your AI won’t control its output. Quality, access, and security aren’t technical boxes to check. They’re the foundation of trust.

Frequently Asked Questions

Do I need a vector database for generative AI?

Yes, if you want accurate, context-aware responses. Vector databases store data as embeddings-numerical representations of meaning-so your AI can find relevant information based on intent, not keywords. Tools like Pinecone and Weaviate handle 10,000+ queries per second with under 500ms latency. Without them, your AI will struggle to pull the right data fast enough for real-time use cases.
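Searching "by intent, not keywords" comes down to comparing vectors. The sketch below is a brute-force illustration of that idea, not how Pinecone or Weaviate work internally (they use approximate nearest-neighbor indexes to stay fast at scale). The 4-dimensional vectors and document IDs are hypothetical stand-ins; real embeddings come from an embedding model and have hundreds or thousands of dimensions.

```python
import math


def cosine(a: list, b: list) -> float:
    """Cosine similarity: 1.0 means same direction (similar meaning), near 0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list, index: dict, k: int = 2) -> list:
    """Brute-force nearest neighbors; a vector database replaces this loop with an ANN index."""
    scored = sorted(((cosine(query_vec, vec), doc_id) for doc_id, vec in index.items()), reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


# Toy "embeddings". Hypothetical dimensions: returns/refunds, hardware defects, billing, promotions.
index = {
    "warranty_terms": [0.7, 0.9, 0.1, 0.0],
    "support_reply_defective": [0.8, 0.8, 0.2, 0.0],
    "holiday_promo": [0.1, 0.0, 0.2, 0.9],
    "invoice_faq": [0.2, 0.0, 0.9, 0.1],
}
query = [0.8, 0.9, 0.1, 0.0]  # stands in for "What's the return policy for defective headphones?"
print(top_k(query, index))  # ['warranty_terms', 'support_reply_defective']
```

Neither winning document needs to contain the words "return policy"; they rank highest because their vectors point in nearly the same direction as the question's.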

Can I use public data instead of proprietary data?

Public data alone gives you generic answers. If you train a model only on Wikipedia or open-source code, it will sound smart but give you no competitive edge. The real value comes from combining public models with your proprietary data-customer logs, internal docs, product specs-using RAG pipelines. That’s how you get 65-80% accuracy on domain-specific tasks instead of 15-20%.

How do I stop my AI from making up facts?

Three things: clean data, RAG, and validation. First, remove duplicates and outdated info from your training set. Second, use Retrieval-Augmented Generation so the AI pulls answers only from trusted sources before responding. Third, validate 100% of data before training. MIT found this cut model errors by 58%. Hallucinations aren’t inevitable; they’re a symptom of bad data.
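The "retrieve first, then answer" step can be sketched as prompt assembly: pick the relevant passages, hand only those to the model, and refuse when nothing relevant is found. This is a minimal sketch under stated assumptions. The `build_grounded_prompt` helper, the 0.75 similarity threshold, `k=3`, the toy vectors, and the sample passage text are all hypothetical, and the actual model call is left out. An instruction to use only the sources reduces made-up answers but cannot force a model to comply, which is why clean, validated source data still matters.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def build_grounded_prompt(question, query_vec, passages, min_score=0.75, k=3):
    """passages: list of (source_id, text, vector).

    Returns a prompt restricted to retrieved sources, or None when nothing is
    relevant enough, so the caller can say "I don't know" instead of guessing.
    """
    scored = sorted(
        ((cosine(query_vec, vec), sid, text) for sid, text, vec in passages),
        reverse=True,
    )
    relevant = [(sid, text) for score, sid, text in scored[:k] if score >= min_score]
    if not relevant:
        return None
    context = "\n".join(f"[{sid}] {text}" for sid, text in relevant)
    return (
        "Answer using only the sources below and cite source IDs in brackets. "
        "If the sources do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


# Hypothetical knowledge base with toy 3-dimensional vectors.
passages = [
    ("returns-policy", "Defective items can be returned within 60 days.", [0.9, 0.1, 0.0]),
    ("promo-2025", "Holiday sale on speakers.", [0.0, 0.2, 0.9]),
]
prompt = build_grounded_prompt(
    "What is the return policy for defective headphones?", [0.85, 0.15, 0.0], passages
)
off_topic = build_grounded_prompt("Who won the match?", [0.0, 1.0, 0.0], passages)
print(prompt)
print(off_topic)  # None
```

Returning `None` instead of an empty context is a deliberate design choice: it moves the "I don't know" decision into code, where it can't be talked around by the model.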

Is data strategy only for big companies?

No. Even small teams can start with one use case. Pick a high-impact, low-risk area-like internal FAQ automation or document summarization. Clean that one dataset. Build a simple RAG pipeline. Measure results. Scale from there. You don’t need $2 million to begin. You need clarity on what data you’re using and why.

What’s the biggest mistake companies make?

Starting with the AI instead of the data. Companies buy fancy LLMs, then plug in whatever data they have-messy, siloed, outdated. It’s like buying a Ferrari and filling it with pond water. The result? Poor performance, compliance risks, and wasted money. The best teams start by asking: "What data do we need to make this work?" Then they fix it before touching the model.

Eric Etienne

March 23, 2026 AT 14:13

Bro this whole post is just a fancy way of saying "garbage in, garbage out" but with more buzzwords. Vector databases? RAG? Please. I've seen teams spend $2M on this and still get bots that say "your account number is 000-000-000" when the CRM has "000000000". It's not a data problem; it's a people problem. Nobody wants to clean up the mess.

Sandy Pan

March 24, 2026 AT 22:30

It’s funny how we treat AI like a magic wand while ignoring the scaffolding beneath it. Data isn’t just input; it’s the soul of the system. When we dump unvetted customer transcripts into a model, we’re not building intelligence. We’re building a mirror that reflects every error, bias, and ghost in our systems. That 95% failure rate? It’s not about tech. It’s about humility. We refuse to admit our data is broken because admitting it means admitting we’ve been lazy. And that’s harder than writing a new prompt.

Kevin Hagerty

March 26, 2026 AT 01:38

Oh wow a 2 million dollar data strategy wow what a deal i bet they also pay their engineers in gold coins and unicorn tears

Dylan Rodriquez

March 26, 2026 AT 06:59

I’ve seen this play out in three different companies. The ones that succeeded didn’t start with fancy tools; they started with one team, one dataset, and one painful question: "What happens if this bot tells a customer they owe $10,000 because of a typo?" That fear? That’s the real driver. Clean data isn’t a technical task; it’s a cultural one. You need someone in HR who cares about customer pain points. Someone in legal who reads the fine print. Someone who stops saying "we’ll fix it later" and says "let’s fix it now." It’s not glamorous. But it works.

Amanda Ablan

March 27, 2026 AT 08:59

Just wanted to add that even small teams can start simple. We used a free tool to clean our internal FAQ docs, turned them into embeddings, and hooked it up to a basic RAG pipeline. No vector DB, no $2M budget. Just 3 people, 2 weeks, and a shared spreadsheet. Accuracy jumped from 18% to 72%. The key? Start with something small that hurts. Fix that. Then build. You don’t need to boil the ocean.

Meredith Howard

March 29, 2026 AT 04:14

While the technical architecture described is compelling one must consider the epistemological implications of relying on embeddings as proxies for meaning. The assumption that contextual retrieval replaces human judgment may be flawed. If data is fragmented across silos then the model is not learning truth; it is learning statistical approximations of institutional memory. This raises questions about accountability when the system fails. Who bears responsibility when the AI cites a document that was deleted but still exists in a latent vector space? The answer may lie not in better tools but in clearer governance structures.

Yashwanth Gouravajjula

March 29, 2026 AT 06:27

India too saw this. Teams thought AI would fix bad data. It didn’t. Clean one dataset first. Then scale.

Kevin Hagerty

March 30, 2026 AT 14:05

Wow so the solution to your bad data is… more data? genius. next you'll tell me the cure for a broken leg is to tie it tighter