Tag: LLM attention bias

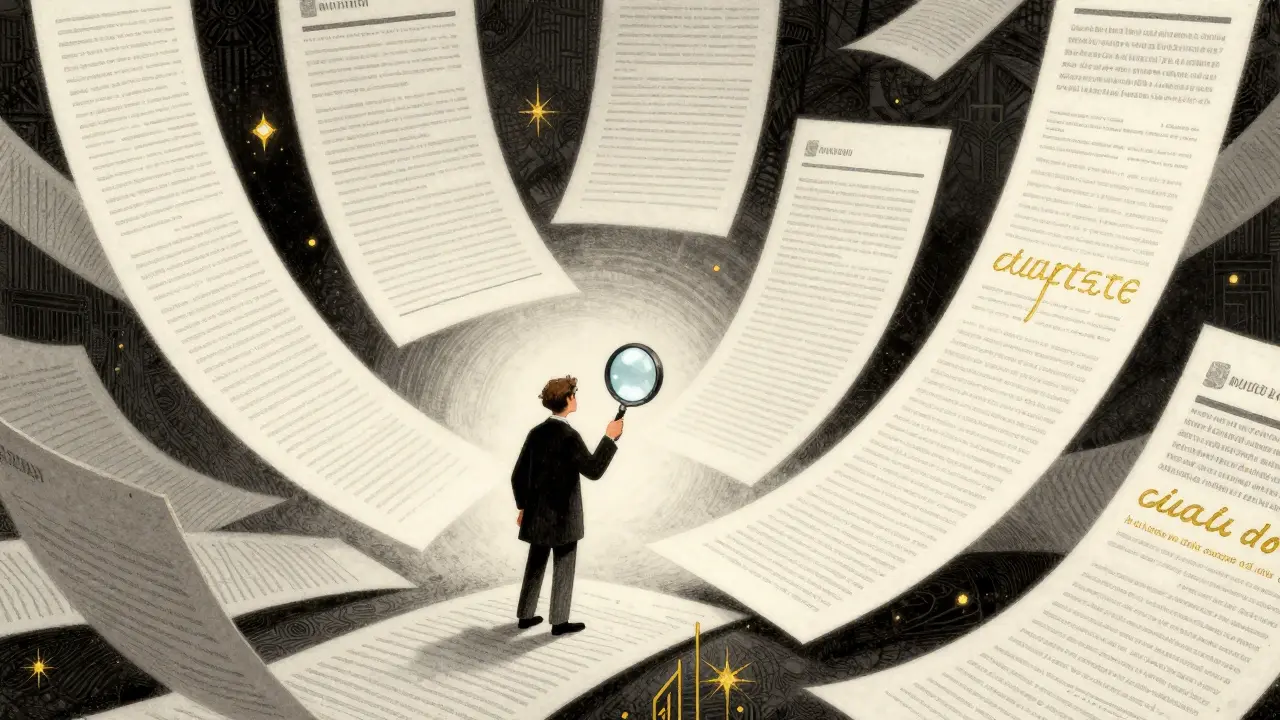

Long-Context Prompt Design: How to Position Information for LLM Attention

Learn how to improve LLM performance through long-context prompt design. Discover the "Lost in the Middle" phenomenon and strategies for positioning critical information where the model attends to it most.